What Makes Us Unique in the Age of AI

Abstract

A vital perspective is missing from the discussions we’re having about Artificial Intelligence: what does it mean for our identity?

Our fascination with AI stems from the perceived uniqueness of human intelligence. We believe it’s what differentiates us. Fears of AI not only concern how it invades our digital lives, but also the implied threat of an intelligence that displaces us from our position at the centre of the world.

Atomism, proposed by Democritus, suggested it was impossible to continue dividing matter down into ever smaller components: eventually we reach a point where a cut cannot be made (the Greek for uncuttable is ‘atom’). In the same way, by slicing away at the facets of human intelligence that can be replaced by machines, AI uncovers what is left: an indivisible core that is the essence of humanity.

By contrasting our own (evolved, locked-in, embodied) intelligence with the capabilities of machine intelligence through history, The Atomic Human reveals the technical origins, capabilities and limitations of AI systems, and how they should be wielded. Not just by the experts, but ordinary people.

We are undergoing a cognitive revolution that is akin to the cosmological revolution Nicolaus Copernicus triggered in 1543. But just as the Earth became no less interesting because it isn’t at the centre of the cosmological universe, our intelligence is no less interesting because it doesn’t dominate the cognitive universe.

The thinking in this talk comes from Neil’s forthcoming book to be published with Allen Lane in June 2024. The main premise is that AI represents a cognitive revolution akin to the Copernican celestial revolution. But as we realise that we are not at the centre of the cognitive universe, we can understand better who we are and what is precious about us.

Copernican Revolution

In the 16th century Europe underwent a paradigm shift in thinking about how we are located in our Universe. Formerly, the Earth was seen as being at the centre of the Universe, the heart of God’s creation. In a form of psychological bias that is a combination of observer bias and self-serving bias, humans believed that we were the objective.

The Copernican revolution displaced the geocentric view of the Universe. But what we had left was our intelligence. It was clear that this differentiated us from other animals and plants. Surely this relocated us at the heart of creation? Today we are undergoing a new Copernican revolution. The same self-serving and observer biases have come to the fore. What we are seeing in AI is the realisation that we are not even cognitively at the centre of the Universe.

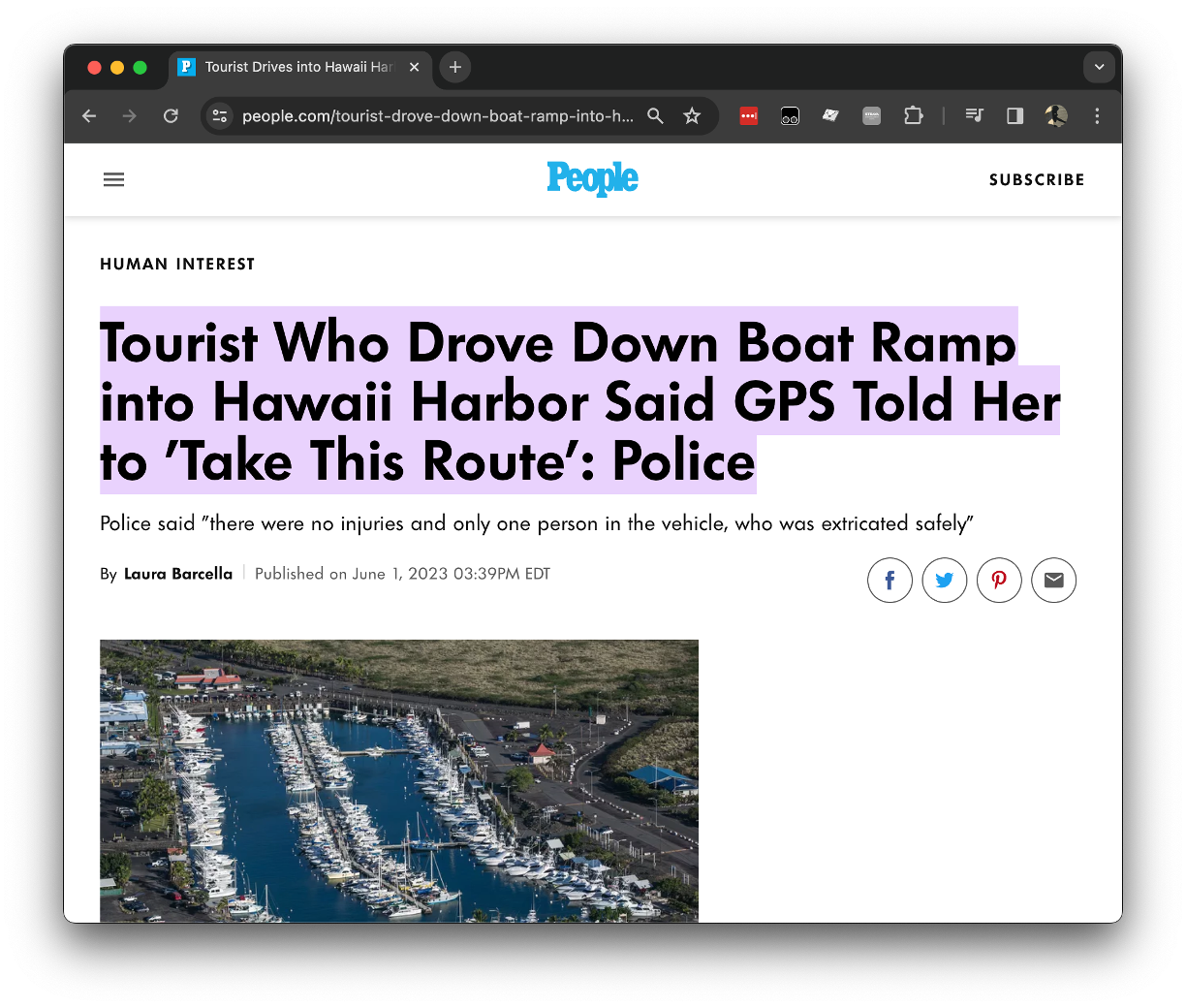

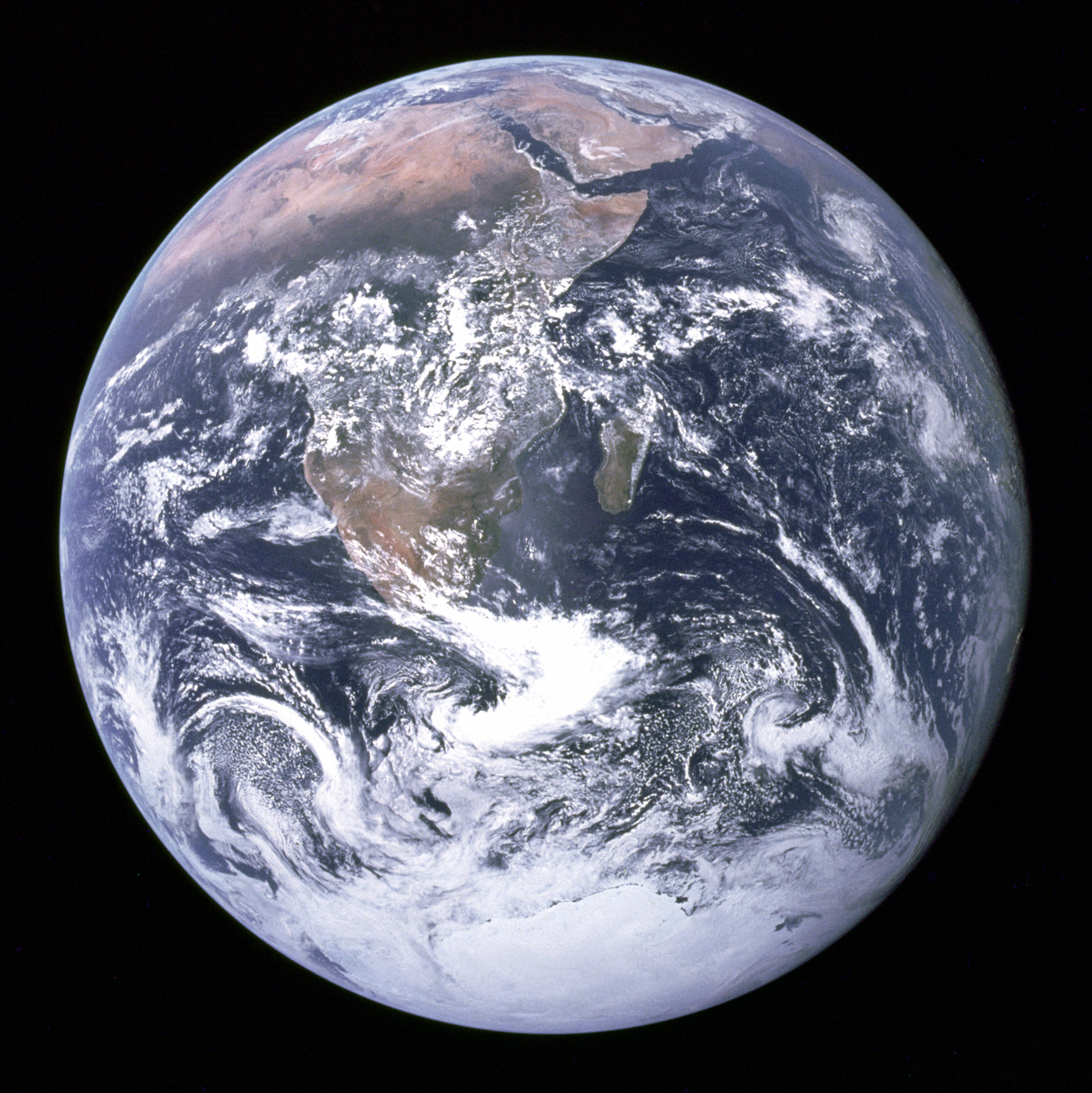

The Blue Marble

Figure: The Blue Marble, a photo of Earth taken from Apollo 17.

The Blue Marble image of the Earth, taken from Apollo 17, became a symbol of the environmental movement. The photo was taken in December 1972. It gave us context. An ability to move beyond our observer bias and see us as we are. Isolated, fragile, and alone. Clinging to a rock orbiting the sun.

Eagle from Columbia

This picture, taken by Michael Collins aboard the Columbia command module, of Eagle’s return from the Moon, with the Earth in the background, highlights the rendezvous radar used to allow the craft to dock.

See Lawrence (2024) Blue Marble (photograph) p. 367.

That radar was one sensing part of Eagle’s many automated systems (Mindell, 2008). These systems could have allowed Eagle to land without human intervention, but Armstrong chose to manually place Eagle in the Sea of Tranquility to avoid a boulder field.

The Apollo Guidance Computer mediated Armstrong’s commands, provided through a joystick, allowing him to control the craft through his inputs but without providing any direct connection between him and the thrusters.

Figure: Eagle photographed from Columbia on its return from the Lunar surface.

See Lawrence (2024) Apollo 11 p. 202-210.

Amelia Earhart

On 20th May 1932 Amelia Earhart took off in her Lockheed Vega from Harbour Grace in Canada. She called her plane the ‘Little Red Bus’. She travelled 2000 miles across open ocean, travelling through a storm, facing flames from her exhaust manifold, losing her altimeter. Flying until she sighted the Irish coast, tracking the coast until she saw a railway and following it until she saw a town, Londonderry. She landed nearby in a meadow.

Figure: The Little Red Bus, Amelia Earhart’s plane in Derry after landing.

Earhart had navigated initially by dead reckoning and then through vision. Her hand was directly connected to the control surfaces of her plane through a series of linkages and cables. She could literally feel the plane in flight, she could respond to it and her environment to control her direction and destiny.

See Lawrence (2024) Earhart, Amelia p. 200–205.

NACA Langley

The feel of an aircraft is a repeated theme in the early years of flight. In response to perceived European advances in flight in the First World War, the US introduced the National Advisory Committee for Aeronautics. Under the committee a proving ground for aircraft was formed at Langley Field in Virginia. During the Second World War Bob Gilruth published a report on the flying qualities of aircraft that characterised how this feel could be translated into numbers.

See Lawrence (2024) Gilruth, Bob p. 190-192.

Figure: 1945 photo of the NACA test pilots, from left Mel Gough, Herb Hoover, Jack Reeder, Stefan Cavallo and Bill Gray (photo NASA, NACA LMAL 42612)

See Lawrence (2024) National Advisory Committee on Aeronautics (NACA) p. 163–168.

One of Gilruth’s collaborators suggested that

Hawker Hurricane airplane. A heavily armed fighter airplane noted for its role in the Battle of Britain, the Hurricane’s flying qualities were found to be generally satisfactory. The most notable deficiencies were heavy aileron forces at high speeds and large friction in the controls.

W. Hewitt Phillips

and

Supermarine Spitfire airplane. A high-performance fighter noted for its role in the Battle of Britain and throughout WW II, the Spitfire had desirably light elevator control forces in maneuvers and near neutral longitudinal stability. Its greatest deficiency from the combat standpoint was heavy aileron forces and sluggish roll response at high speeds.

W. Hewitt Phillips

Gilruth went beyond the reports of feel to characterise how the plane should respond to different inputs on the control stick. In other words he quantified that feel of the plane.

Gilruth’s work was in the spirit of Lord Kelvin’s quote on measurement:

When you can measure what you are speaking about, and express it in numbers, you know something about it, when you cannot express it in numbers, your knowledge is of a meager and unsatisfactory kind; it may be the beginning of knowledge, but you have scarcely, in your thoughts, advanced to the stage of science.

From Chapter 3, pg 73 of Thomson (1889)

The aim was to convert a qualitative property of aircraft into quantitative measurement, thereby allowing their improvement.

The Atomic Human

Figure: The Atomic Eye, by slicing away aspects of the human that we used to believe to be unique to us, but are now the preserve of the machine, we learn something about what it means to be human.

The development of what some are calling intelligence in machines raises questions around what machine intelligence means for our intelligence. The idea of the atomic human is derived from Democritus’s atomism.

In the fifth century bce the Greek philosopher Democritus posed a similar question about our physical universe. He imagined cutting physical matter into pieces in a repeated process: cutting a piece, then taking one of the cut pieces and cutting it again so that each time it becomes smaller and smaller. Democritus believed this process had to stop somewhere, that we would be left with an indivisible piece. The Greek word for indivisible is atom, and so this theory was called atomism. This book considers this question, but in a different domain, asking: As the machine slices away portions of human capabilities, are we left with a kernel of humanity, an indivisible piece that can no longer be divided into parts? Or does the human disappear altogether? If we are left with something, then that uncuttable piece, a form of atomic human, would tell us something about our human spirit.

See Lawrence (2024) atomic human, the p. 13.

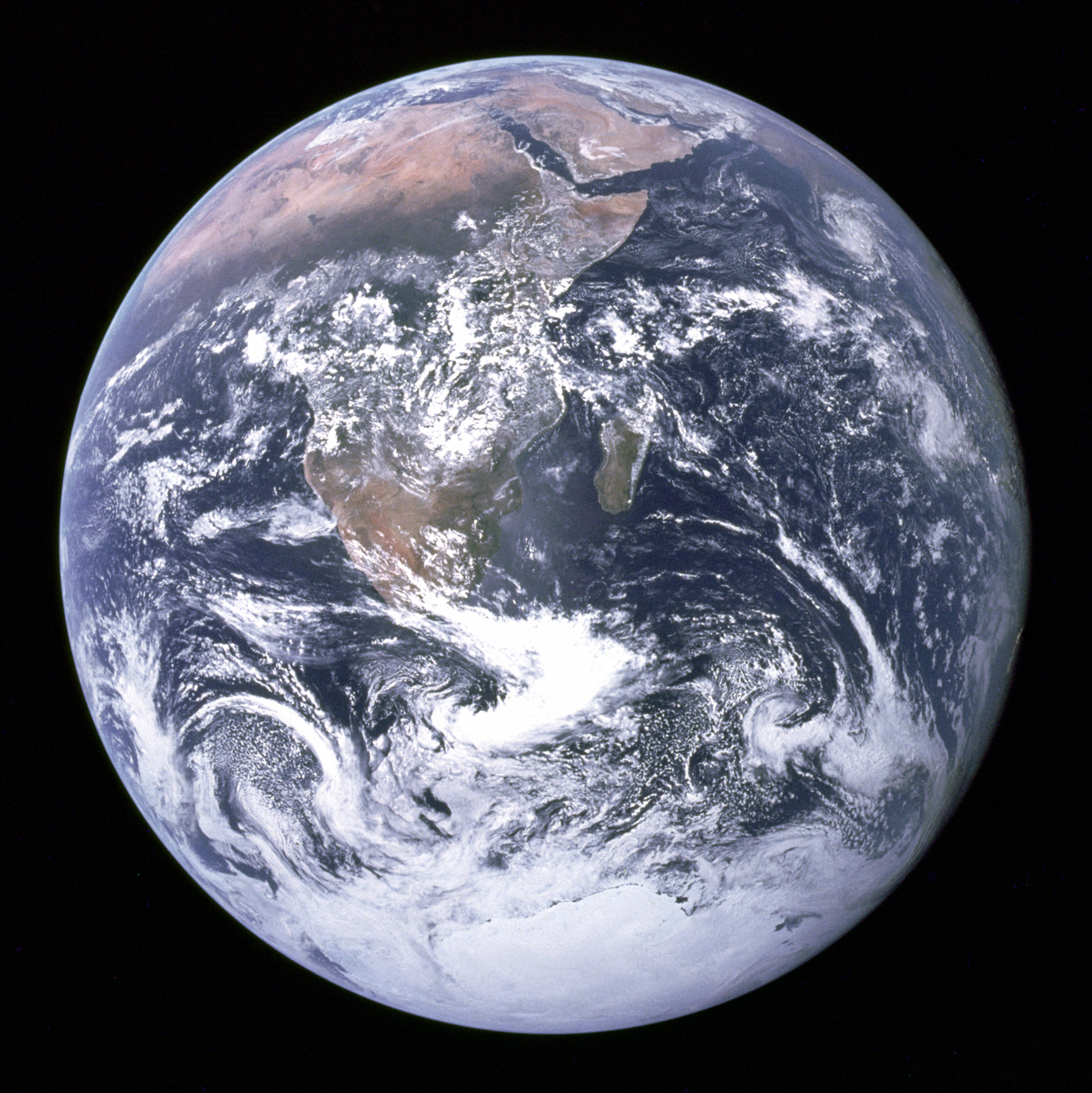

Figure: A Colossus Mark II codebreaking computer being operated by Dorothy Du Boisson (left) and Elsie Booker (right). Colossus was designed by Tommy Flowers, but programmed and operated by groups of Wrens based at Bletchley Park.

In the early hours of 1 June 1944, Tommy Flowers was wading ankle deep in water from a broken pipe, making the final connections to bring Mark 2 Colossus online. Colossus was the world’s first programmable, electronic, digital computer. Four days later, and Eisenhower was reading one of its first decrypts and ordering the invasion of Normandy. Flowers’s machine didn’t just launch an invasion, it launched an intellectual revolution.

See Lawrence (2024) Colossus (computer) p. 76–79, 91, 103, 108, 124, 130, 142–143, 149, 173–176, 199, 231–232, 251, 264, 267, 290, 380.

Embodiment Factors

| | computer | human |
|---|---|---|
| bits/min | billions | 2,000 |
| billion calculations/s | ~100 | a billion |
| embodiment | 20 minutes | 5 billion years |

Figure: Embodiment factors are the ratio between our ability to compute and our ability to communicate. Relative to the machine we are also locked in. In the table we represent embodiment as the length of time it would take to communicate one second’s worth of computation. For computers it is a matter of minutes, but for a human, it is a matter of thousands of millions of years. See also “Living Together: Mind and Machine Intelligence” Lawrence (2017)

There is a fundamental limit placed on our intelligence based on our ability to communicate. Claude Shannon founded the field of information theory. The clever part of this theory is it allows us to separate our measurement of information from what the information pertains to.

Shannon measured information in bits. One bit of information is the amount of information I pass to you when I give you the result of a coin toss. Shannon was also interested in the amount of information in the English language. He estimated that on average a word in the English language contains 12 bits of information.
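As a rough illustration of what a "bit" measures, the sketch below computes Shannon entropy for a coin toss. The function name `entropy_bits` is hypothetical, and the 4,096-word example is only a way of picturing 12 bits (a uniform choice among 2^12 alternatives); it is not the method Shannon used for his estimate of English.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


# A fair coin toss carries exactly one bit of information.
fair_coin = entropy_bits([0.5, 0.5])

# A biased coin is more predictable, so each toss tells us less than one bit.
biased_coin = entropy_bits([0.9, 0.1])

# Illustration only: 12 bits is the information in a uniform choice
# among 2**12 = 4096 equally likely alternatives.
uniform_4096 = entropy_bits([1 / 4096] * 4096)

print(fair_coin, biased_coin, uniform_4096)
```

The measure depends only on the probabilities, not on what the outcomes mean, which is the separation between information and its subject matter described above.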

Given typical speaking rates, that gives us an estimate of our ability to communicate of around 100 bits per second (Reed and Durlach, 1998). Computers on the other hand can communicate much more rapidly. Current wired network speeds are around a billion bits per second, ten million times faster.

When it comes to compute though, our best estimates indicate our computers are slower. A typical modern computer can process around 100 billion floating-point operations per second, and each floating-point operation involves a 64-bit number. So the computer is processing around 6,400 billion bits per second.

It’s difficult to get similar estimates for humans, but by some estimates the amount of compute we would require to simulate a human brain is equivalent to that in the UK’s fastest computer (Ananthanarayanan et al., 2009), the Met Office machine in Exeter, which in 2018 ranked as the 11th fastest computer in the world. That machine simulates the world’s weather each morning, and then simulates the world’s climate in the afternoon. It is a 16-petaflop machine, processing around 1,000 petabits (a billion billion bits) per second.
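The embodiment factor described here can be sketched as a simple ratio: bits computed per second divided by bits communicated per second, which gives the time needed to communicate one second's worth of computation. The function and variable names below are hypothetical, and the inputs are the rough estimates quoted in the text (64-bit operations, 100 bits per second of speech, a 16-petaflop brain-scale machine). Different choices of estimate give different results, so the output need not reproduce the rounded figures in the table; what survives any reasonable choice is the gap of many orders of magnitude between human and machine.

```python
SECONDS_PER_MINUTE = 60
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def embodiment_seconds(compute_bits_per_s, communicate_bits_per_s):
    """Seconds needed to communicate one second's worth of computation."""
    return compute_bits_per_s / communicate_bits_per_s


# Computer: 100 billion 64-bit operations per second,
# communicating at around a billion bits per second.
computer_compute = 100e9 * 64
computer = embodiment_seconds(computer_compute, 1e9)

# Human (assumption): brain-scale compute taken as a 16-petaflop machine
# working on 64-bit numbers, communicating at about 100 bits per second.
human_compute = 16e15 * 64
human = embodiment_seconds(human_compute, 100)

print(f"computer: {computer / SECONDS_PER_MINUTE:.0f} minutes")
print(f"human: {human / SECONDS_PER_YEAR:.2e} years")
print(f"human/computer ratio: {human / computer:.1e}")
```

With these inputs the computer needs on the order of minutes to hours, while the human needs hundreds of millions of years.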

See Lawrence (2024) embodiment factor p. 13, 29, 35, 79, 87, 105, 197, 216-217, 249, 269, 353, 369.

New Flow of Information

Classically the field of statistics focused on mediating the relationship between the machine and the human. Our limited bandwidth of communication means we tend to over-interpret the limited information that we are given; in the extreme we assign motives and desires to inanimate objects (a process known as anthropomorphizing). Much of mathematical statistics was developed to help temper this tendency and understand when we are valid in drawing conclusions from data.

Figure: The trinity of human, data, and computer highlights the modern phenomenon. The communication channel between computer and data now has an extremely high bandwidth. The channel between human and computer and the channel between data and human are narrow. There is a new direction of information flow: information is reaching us mediated by the computer. The focus on classical statistics reflected the importance of the direct communication between human and data. The modern challenges of data science emerge when that relationship is being mediated by the machine.

Data science brings new challenges. In particular, there is a very large bandwidth connection between the machine and data. This means that our relationship with data is now commonly being mediated by the machine, whether this is in the acquisition of new data, which now happens by happenstance rather than with purpose, or the interpretation of that data, where we are increasingly relying on machines to summarize what the data contains. This is leading to the emerging field of data science, which must not only deal with the same challenges that mathematical statistics faced in tempering our tendency to over-interpret data but must also deal with the possibility that the machine has either inadvertently or maliciously misrepresented the underlying data.

Bandwidth Constrained Conversations

Figure: Conversation relies on internal models of other individuals.

Figure: Misunderstanding of context and who we are talking to leads to arguments.

Embodiment factors imply that, in our communication between humans, what is not said is, perhaps, more important than what is said. To communicate with each other we need to have a model of who each of us is.

To aid this, in society, we are required to perform roles. Whether as a parent, a teacher, an employee or a boss. Each of these roles requires that we conform to certain standards of behaviour to facilitate communication between ourselves.

Control of self is vitally important to these communications.

The high availability of data undermines human-to-human communication channels by providing new routes to undermine our control of self.

The consequences of this mismatch of power and delivery are to be seen all around us. Because, just as driving an F1 car with bicycle wheels would be a fine art, so is the process of communication between humans.

If I have a thought and I wish to communicate it, I first need to have a model of what you think. I should think before I speak. When I speak, you may react. You have a model of who I am and what I was trying to say, and why I chose to say what I said. Now we begin this dance, where we are each trying to better understand each other and what we are saying. When it works, it is beautiful, but when mis-deployed, just like a badly driven F1 car, there is a horrible crash, an argument.

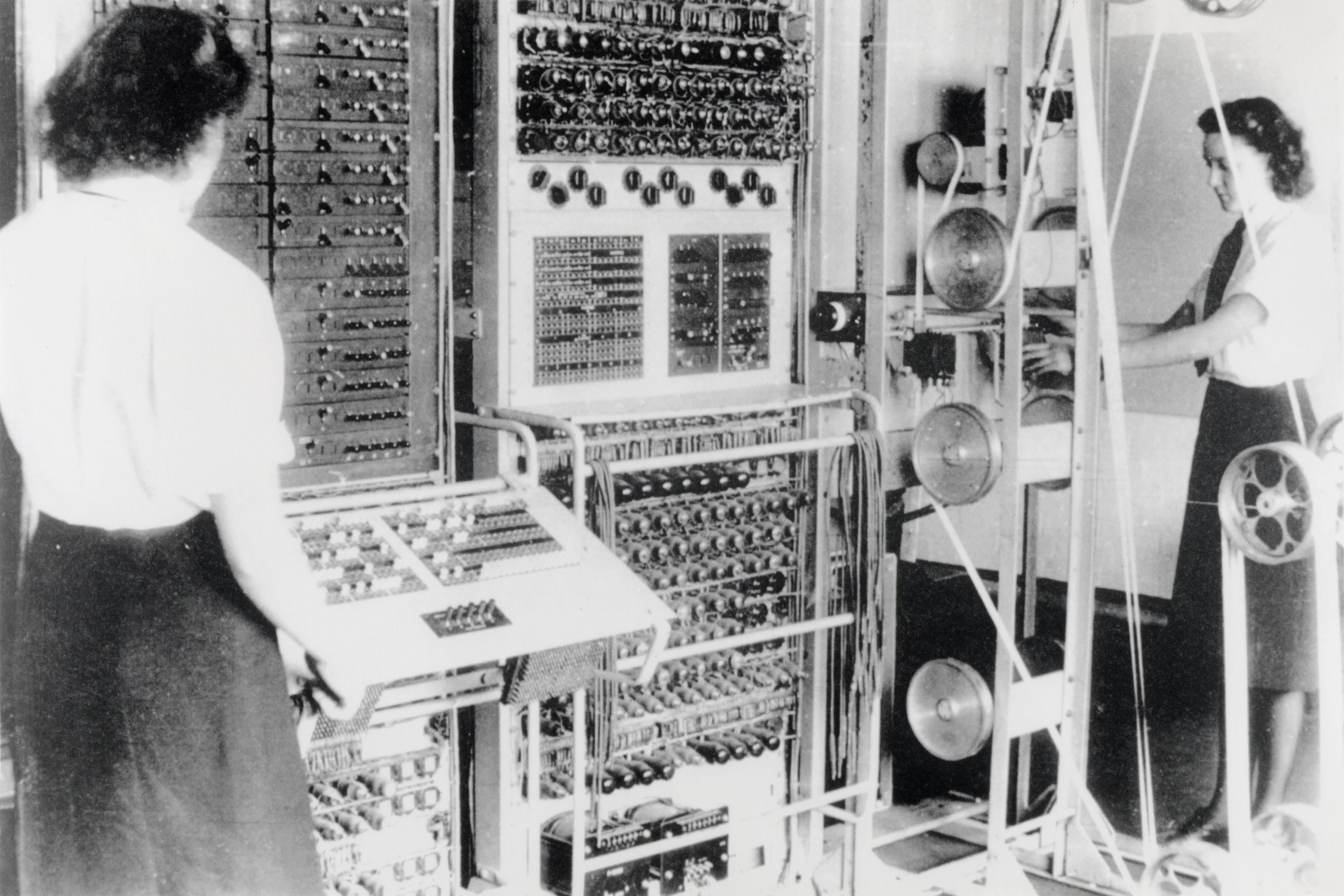

Sistine Chapel Ceiling

Shortly before I first moved to Cambridge, my girlfriend (now my wife) took me to the Sistine Chapel to show me the recently restored ceiling.

Figure: The ceiling of the Sistine Chapel.

When we got to Cambridge, we both attended Patrick Boyde’s talks on the chapel. He focussed on both the structure of the chapel ceiling, describing the impression of height it was intended to give, and the significance and positioning of each of the panels and the meaning of the individual figures.

The Creation of Adam

Figure: Photo of Detail of Creation of Man from the Sistine chapel ceiling.

One of the most famous panels is central in the ceiling: the creation of man. Here, God in the guise of a pink-robed bearded man reaches out to a languid Adam.

The representation of God in this form seems typical of the time, because elsewhere in the Vatican Museums there are similar representations.

Figure: Photo detail of God.

Photo from https://commons.wikimedia.org/wiki/File:Michelangelo,_Creation_of_Adam_04.jpg.

My colleague Beth Singler has written about how often this image of creation appears when we talk about AI (Singler, 2020).

See Lawrence (2024) Michelangelo, The Creation of Adam p. 7-9, 31, 91, 105–106, 121, 153, 206, 216, 350.

The way we represent this “other intelligence” in the figure of a Zeus-like bearded man demonstrates our tendency to embody intelligences in forms that are familiar to us.

A Six Word Novel

Figure: Consider the six-word novel, apocryphally credited to Ernest Hemingway, “For sale: baby shoes, never worn”. To understand what that means to a human, you need a great deal of additional context. Context that is not directly accessible to a machine that has not got both the evolved and contextual understanding of our own condition to realize both the implication of the advert and what that implication means emotionally to the previous owner.

See Lawrence (2024) baby shoes p. 368.

But this is a very different kind of intelligence than ours. A computer cannot understand the depth of Ernest Hemingway’s apocryphal six-word novel: “For Sale, Baby Shoes, Never worn”, because it isn’t equipped with that ability to model the complexity of humanity that underlies that statement.

Revolution

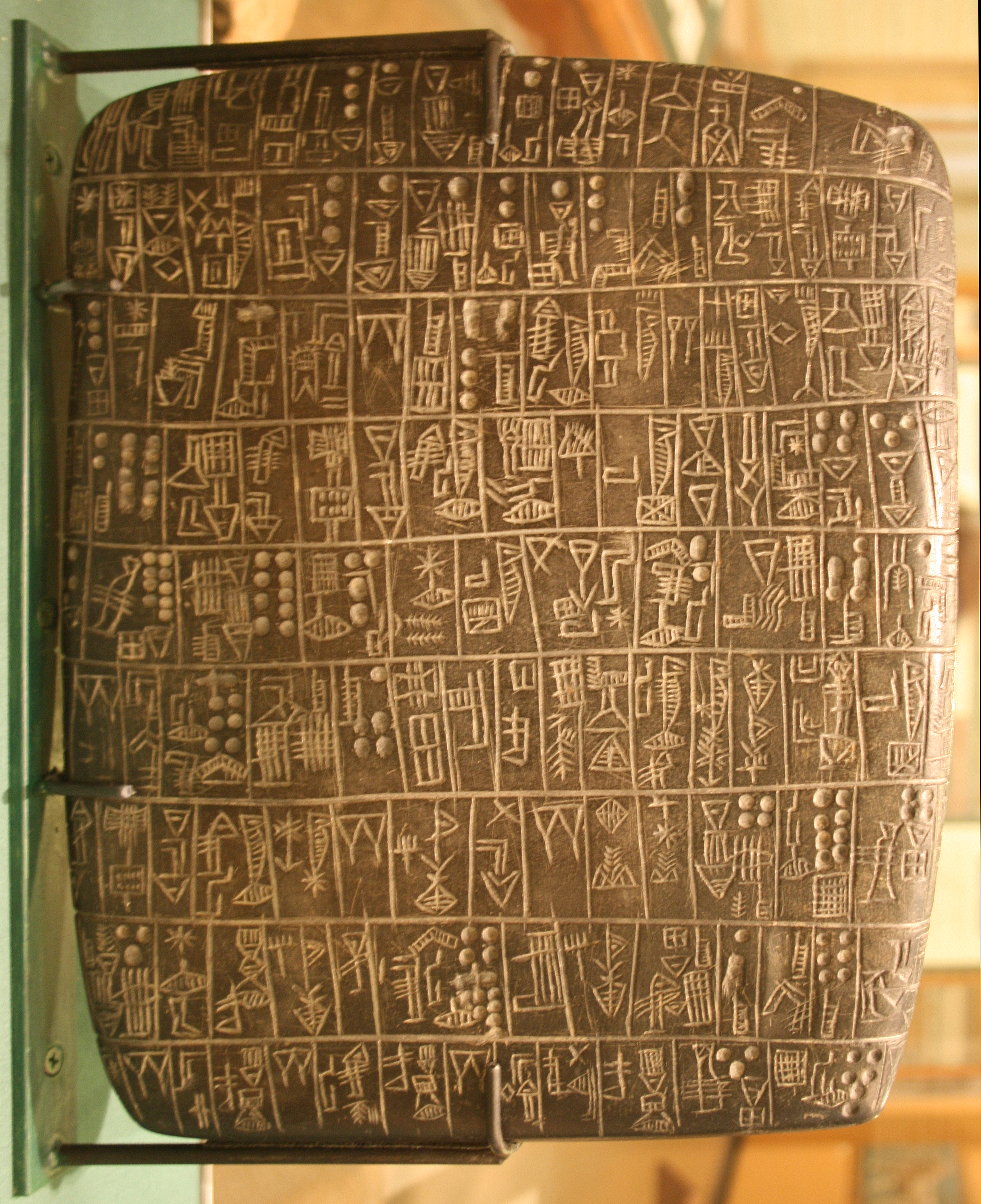

Arguably the information revolution we are experiencing is unprecedented in history. But changes in the way we share information have a long history. Over 5,000 years ago in the city of Uruk, on the banks of the Euphrates, communities which relied on the water to irrigate their crops developed an approach to recording transactions in clay. Eventually the recording system became sophisticated enough that their oral histories could be recorded in the form of the first epic: Gilgamesh.

See Lawrence (2024) cuneiform p. 337, 360, 390.

Figure: Chicago Stone, side 2, recording sale of a number of fields, probably from Isin, Early Dynastic Period, c. 2600 BC, black basalt

It was initially developed for people as a record of who owed what to whom, expanding individuals’ capacity to remember. But over a five hundred year period writing evolved to become a tool for literature as well. More pithily put, writing was invented by accountants not poets (see e.g. this piece by Tim Harford).

In some respects today’s revolution is different, because it also involves the creation of stories as well as their curation. But in some fundamental ways we can see what we have produced as another tool for us in the information revolution.

The Structure of Scientific Revolutions

Figure: The Structure of Scientific Revolutions by Thomas S. Kuhn suggests scientific paradigms are recorded in books.

Kuhn was a historian of science and a philosopher who suggested that the sociology of science has two principal components to it. His idea is that “normal science” operates within a paradigm. That paradigm is defined by books which encode our best understanding. An example of a paradigm is Newtonian mechanics, or another example would be the geocentric view of the Universe. Within a paradigm normal science proceeds by scientists solving the “puzzles” that paradigm sets. A paradigm shift is when the paradigm changes, for example the Copernican revolution or the introduction of relativity.

The notion of a paradigm shift has also entered common parlance; this reflects the idea that wider human knowledge is also shared and stored, less formally than scientific knowledge, but still with a dependence on our information infrastructure.

The digital computer has brought a fundamental change in the nature of that information infrastructure. By moving information faster the modern information infrastructure is dominated not by the book, but by the machine. This brings challenges for managing and controlling this information infrastructure.

See Lawrence (2024) Kuhn, Thomas: The Structure of Scientific Revolutions p. 295–299.

Blake’s Newton

William Blake’s rendering of Newton captures humans in a particular state. He is absorbed, trance-like, in simple geometric shapes. The feel of dreams is enhanced by the underwater location, and the nature of the focus is enhanced because he ignores the complexity of the sea life around him.

Figure: William Blake’s Newton, c. 1795–1805.

See Lawrence (2024) Blake, William Newton p. 121–123.

The caption in the Tate Britain reads:

Here, Blake satirises the 17th-century mathematician Isaac Newton. Portrayed as a muscular youth, Newton seems to be underwater, sitting on a rock covered with colourful coral and lichen. He crouches over a diagram, measuring it with a compass. Blake believed that Newton’s scientific approach to the world was too reductive. Here he implies Newton is so fixated on his calculations that he is blind to the world around him. This is one of only 12 large colour prints Blake made. He seems to have used an experimental hybrid of printing, drawing, and painting.

From the Tate Britain

See Lawrence (2024) Blake, William Newton p. 121–123, 258, 260, 283, 284, 301, 306.

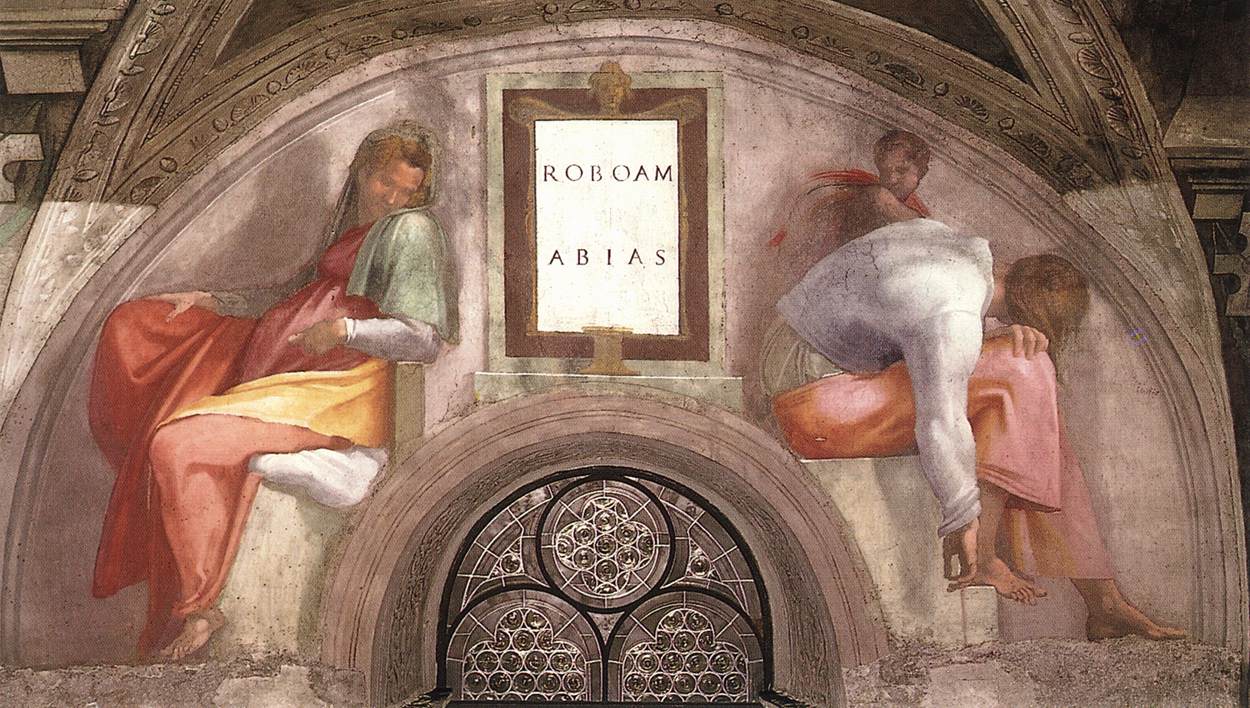

Lunette Rehoboam Abijah

Many of Blake’s works are inspired by engravings he saw of the Sistine Chapel ceiling. The pose of Newton is taken from the Lunette depiction of Abijah, one of Michelangelo’s ancestors of Christ.

Figure: Lunette containing Rehoboam and Abijah.

People, Communication and Culture

Human intelligence is a social intelligence: it is isolated, but it doesn’t exist in isolation. It sits instead within a broader culture. The term ‘culture’ originates from the Roman orator Cicero, who wrote of cultura animi, the cultivation of our minds. He drew an analogy with agriculture: human knowledge is cultivated similarly to the way crops are raised from the land: our minds grow and respond to their intellectual environment.

It is impossible to understand humans without understanding our context, and human culture is a key part of that context, but the culture that sustains our minds is also evolving. Over time, how we see ourselves within the universe has changed. The world looks different to us today than it did to Michelangelo. Many of these changes have improved our ability to understand the world around us.

Figure: People communicate through artifacts and culture.

See Lawrence (2024) Cicero and culture p. 20-21.

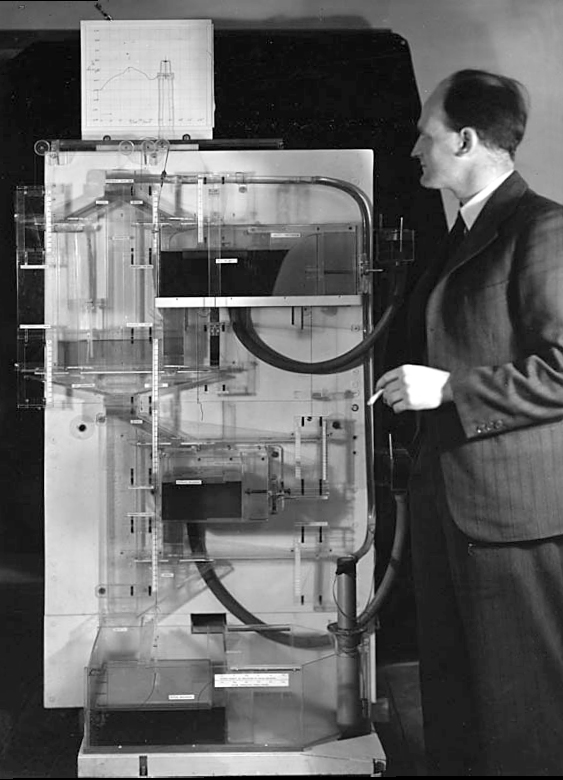

The MONIAC

The MONIAC was an analogue computer designed to simulate the UK economy. Analogue computers work through analogy; the analogy in the MONIAC is that both money and water flow. The MONIAC exploits this through a system of tanks, pipes, valves and floats that represent the flow of money through the UK economy. Water flowed from the treasury tank at the top of the model to other tanks representing government spending, such as health and education. The machine was initially designed for teaching support but was also found to be a useful economic simulator. Several were built and today you can see the original at Leeds Business School; there is also one in the London Science Museum and one in the University of Cambridge’s economics faculty.

Figure: Bill Phillips and his MONIAC (completed in 1949). The machine is an analogue computer designed to simulate the workings of the UK economy.

See Lawrence (2024) MONIAC p. 232-233, 266, 343.

Human Analogue Machine

Recent breakthroughs in generative models, particularly large language models, have enabled machines that, for the first time, can converse plausibly with other humans.

The Apollo Guidance Computer provided Armstrong with an analogy when he landed Eagle on the Moon. He controlled the craft through a stick, and the analogy is based on the experience that Amelia Earhart had when she flew her plane. Armstrong’s control exploited his experience as a test pilot flying planes that had control columns which were directly connected to their control surfaces.


The generative systems we have produced do not provide us with the “AI” of science fiction, because their intelligence is based on emulating human knowledge. Through being forced to reproduce our literature and our art they have developed aspects which are analogous to the cultural proxy truths we use to describe our world.

These machines are to humans what the MONIAC was to the British economy. Not a replacement, but an analogue computer that captures some aspects of humanity while providing the advantages of the machine’s high bandwidth.

HAM

The Human-Analogue Machine or HAM therefore provides a route through which we could better understand our world through improving the way we interact with machines.

Figure: The trinity of human, data, and computer highlights the modern phenomenon. The communication channel between computer and data now has an extremely high bandwidth. The channel between human and computer and the channel between data and human are narrow. There is a new direction of information flow: information is reaching us mediated by the computer. The focus on classical statistics reflected the importance of the direct communication between human and data. The modern challenges of data science emerge when that relationship is being mediated by the machine.

The HAM can provide an interface between the digital computer and the human, allowing humans to work closely with computers regardless of their understanding of the more technical parts of software engineering.

Figure: The HAM now sits between us and the traditional digital computer.

Of course this route provides new routes for manipulation, new ways in which the machine can undermine our autonomy or exploit our cognitive foibles. The major challenge we face is steering between these worlds where we gain the advantage of the computer’s bandwidth without undermining our culture and individual autonomy.

See Lawrence (2024) human-analogue machine (HAMs) p. 343-347, 359-359, 365-368.

Atrophy and Cognitive Flattening

Even if we calibrate these tools correctly so that they do represent the world appropriately, we are at risk. The hippocampus is part of our brain’s cortex. One role of our hippocampus is in navigation, knowing how to move from one place to another.

Figure: The hippocampus within the brain. Image generated from Anatomography, https://lifesciencedb.jp/.

Our prefrontal cortex, the size of which differentiates us from other primates and mammals, is the seat of our planning and of our higher intelligence. It fires in sympathy with our hippocampus.

By becoming overreliant on HAMs and their interface to the digital computer, are we at risk of suffering from the same deskilling we perceive in our navigational skills? Does this place us at risk of sleepwalking into a world that is managed by the machine even as we believe that we are managing it ourselves?

Fall and Expulsion from Garden of Eden

In the Sistine Chapel, two panels below Michelangelo’s depiction of the creation is his rendering of The Fall and Expulsion from Paradise. Adam and Eve initially exist in Eden; their lives are entirely managed for them. They are warned not to eat from the tree of knowledge. When they do so they become self-aware and are expelled from the garden.

Michelangelo’s picture depicts the pain of this expulsion, the idea that they will now be subjected to the miseries of the world outside – disease and suffering. But the story can also be viewed as a transformation from beings who are fully subject to God’s will to beings who determine their own future. Under this view, the fall is actually a moment of creation. Creation of beings with free will.

Figure: Photo of detail of the fall and expulsion from the Garden of Eden.

One of the promises of artificial intelligence is that by increasing its involvement in our lives it will be able to cater for our needs. By giving the machine more access to our data, the idea is that it will be able to alleviate that suffering and cure our diseases – the machine will look after us. In the western tradition the forbidden fruit is an apple, and in today’s world it could be our Apple computers that guide us back into the walled garden of Eden. Already our Apple watches can monitor our heart rates and inform us of the number of steps we need to take to maintain our health. But as we cede control to the machine, we are also losing what Adam and Eve gained by eating from the tree. We lose personal responsibility and freedom of choice. The Garden of Eden is depicted as a benevolent autocracy, but such an autocracy necessarily limits the freedoms of the individuals within it. There is no one-size-fits-all set of values. Each of us will react differently to whether this price is worth the outcome, and our ideas will change over time. How much we each want to be constrained by a benevolent overseer may differ when we are faced with severe illness rather than being youthful and healthy. If we respect the dignity of the individual human, we need to accommodate this diversity of perspectives.

But we need to be active in our protection of individual dignity if we don’t want to sleepwalk back into an implicit autocracy managed through the reflected understanding that is stored in the HAMs.

See Lawrence (2024) Garden of Eden p. 350-351.

Elohim Creating Adam

Blake’s vision of the creation of man, known as Elohim Creating Adam, is a strong contrast to Michelangelo’s. The faces of both God and Adam show deep anguish. The image is closer to representations of Prometheus receiving his punishment for sharing his knowledge of fire than to the languid ecstasy we see in Michelangelo’s representation.

Figure: William Blake’s Elohim Creating Adam.

The caption in the Tate reads:

Elohim is a Hebrew name for God. This picture illustrates the Book of Genesis: ‘And the Lord God formed man of the dust of the ground’. Adam is shown growing out of the earth, a piece of which Elohim holds in his left hand.

For Blake the God of the Old Testament was a false god. He believed the Fall of Man took place not in the Garden of Eden, but at the time of creation shown here, when man was dragged from the spiritual realm and made material.

From the Tate Britain

Blake’s vision is demonstrating the frustrations we experience when the (complex) real world doesn’t manifest in the way we’d hoped.

See Lawrence (2024) Blake, William Elohim Creating Adam p. 121, 217–18.

Bandwidth vs Complexity

The computer communicates in gigabits per second. One way of imagining just how much slower we are than the machine is to look for something that communicates in nanobits per second.

| | genetic inheritance | human | computer |
|---|---|---|---|
| bits/min | \(100 \times 10^{-9}\) | \(2,000\) | \(600 \times 10^9\) |

Figure: When we look at communication rates based on the information passing from one human to another across generations through their genetics, we see that computers watching us communicate is roughly equivalent to us watching organisms evolve. Estimates of germline mutation rates taken from Scally (2016).

Figure: Bandwidth vs Complexity.
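The comparison in the table can be checked with a few lines of arithmetic. In this sketch the variable names are hypothetical, and reading the three rates as genetic inheritance, human and computer is an interpretation taken from the figure caption. The two gaps are compared in orders of magnitude, since "roughly equivalent" is a statement about scale rather than exact equality.

```python
import math

# Communication rates in bits per minute, as given in the table.
genetic_bits_per_min = 100e-9
human_bits_per_min = 2_000
computer_bits_per_min = 600e9

# How much faster each party is than the one it is "watching".
computer_vs_human = computer_bits_per_min / human_bits_per_min
human_vs_genetic = human_bits_per_min / genetic_bits_per_min

# Express both gaps as orders of magnitude (powers of ten).
computer_gap = math.log10(computer_vs_human)
human_gap = math.log10(human_vs_genetic)

print(f"computer is about 10^{computer_gap:.1f} times faster than a human")
print(f"human is about 10^{human_gap:.1f} times faster than genetic inheritance")
```

On these figures both gaps sit between eight and eleven orders of magnitude, which is the sense in which a computer watching us talk resembles us watching evolution.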

The challenge we face is that while speed is on the side of the machine, complexity is on the side of our ecology. Many of the risks we face are associated with the way our speed undermines our ecology and the machine’s speed undermines our human culture.

See Lawrence (2024) Human evolution rates p. 98-99. See Lawrence (2024) Psychological representation of Ecologies p. 323-327.

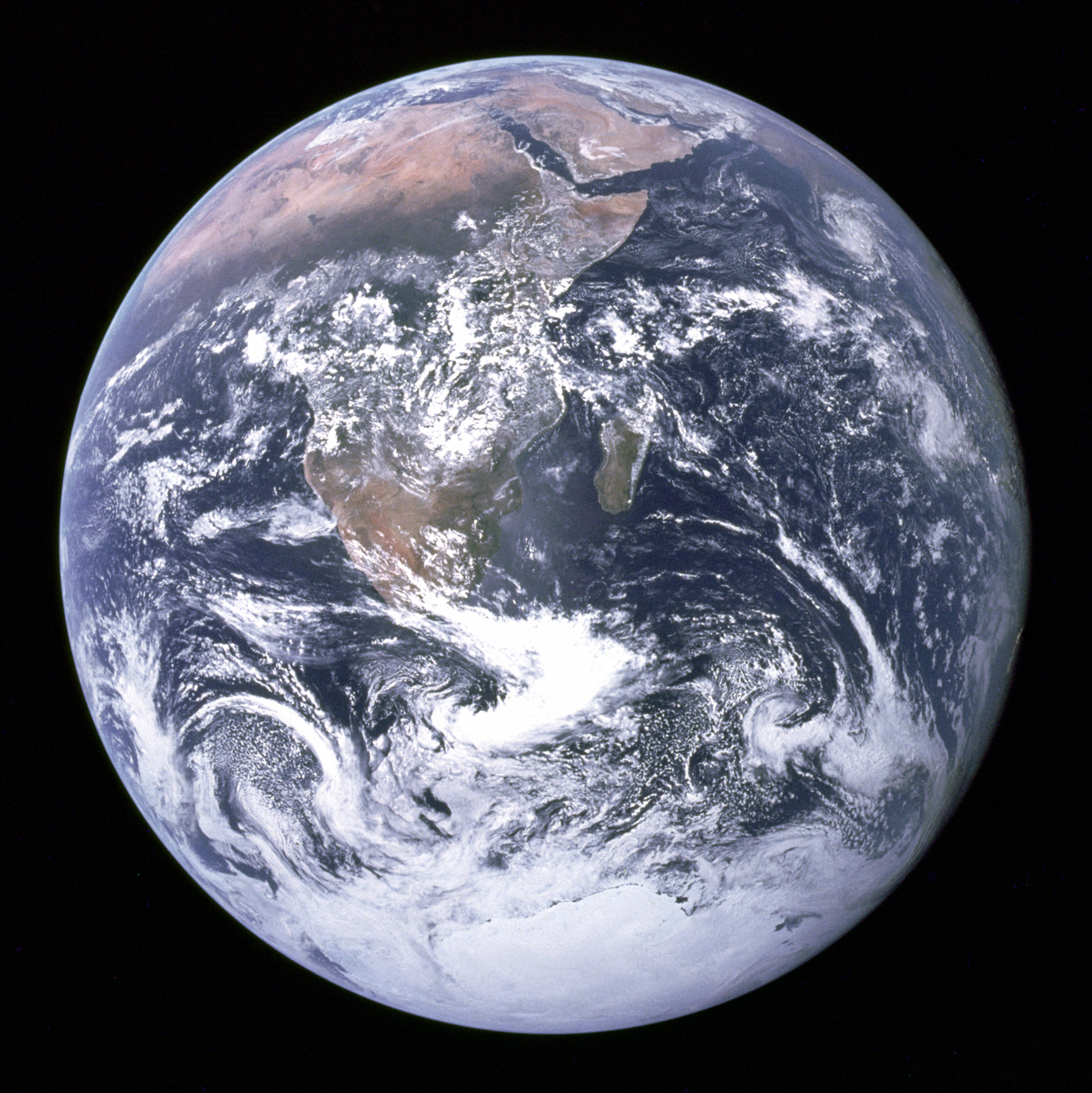

Figure: The Blue Marble, a photo of Earth taken from Apollo 17.

Conclusion

We are in the midst of a cognitive Copernican revolution that is as significant as the cosmological Copernican revolution. A combination of observer bias and self-serving bias has caused some to place our intelligence at the centre of the cognitive universe. In fact our intelligence is a consequence of our context. It reflects our limitations and our vulnerabilities. It is to be treasured not because it has objective primacy over other forms of information processing but because, like our ecology, it is irreplaceable and remarkable for what it is in itself.

We’re at a moment of steerage, and one where the international conversation around artificial intelligence has flattened the complexity of the human experience into a comical cartoon of what it means for us to exist as individuals and as a species. We need to push back against that, reject the technobabble, and put the human back at the centre of these conversations.

Thanks!

For more information on these subjects and more you might want to check the following resources.

- book: The Atomic Human

- twitter: @lawrennd

- podcast: The Talking Machines

- newspaper: Guardian Profile Page

- blog: http://inverseprobability.com