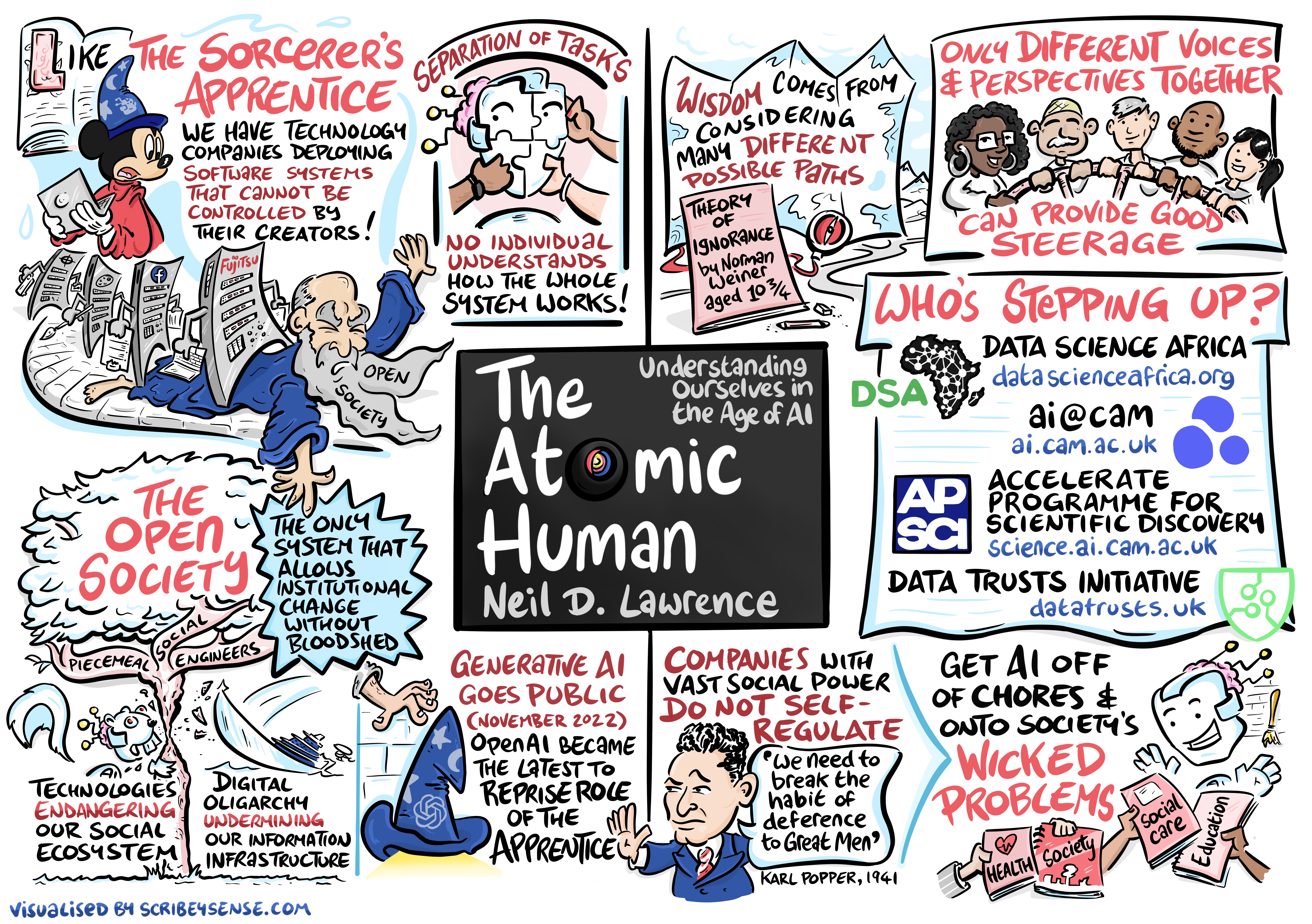

The Atomic Human

Abstract

As artificial intelligence advances from language models to agentic systems with economic agency, central banks and financial institutions face fundamental questions about how these technologies reshape economic behavior, market dynamics, and human capital. This talk explores themes from “The Atomic Human,” examining why attempts to measure and optimize human capital through AI risk creating new productivity paradoxes, and what this means for economic modeling and monetary policy.

Drawing on innovation economics and information theory, we examine how the attention economy operates as a new form of capital accumulation, why traditional market mechanisms struggle to map AI capabilities to societal needs, and how central banks might navigate an economy where the boundaries between human and machine intelligence increasingly blur.

Artificial General Vehicle

Figure: The notion of artificial general intelligence is as absurd as the notion of an artificial general vehicle - no single vehicle is optimal for every journey. (Illustration by Dan Andrews inspired by a conversation about “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews, inspired by a conversation about “The Atomic Human” book. The drawing emerged from discussions with Dan about the flawed concept of artificial general intelligence and how it parallels the absurd idea of a single vehicle optimal for all journeys. The vehicle itself is inspired by shared memories of Professor Pat Pending in Hanna-Barbera’s Wacky Races.

I often turn up to talks with my Brompton bicycle. Embarrassingly, I even took it to Google, which is only a 30-second walk from King’s Cross station. That made me realise it’s become a sort of security blanket. I like having it because it’s such a flexible means of transport.

But is the Brompton an “artificial general vehicle”? A vehicle that can do everything? Unfortunately not: for example, it’s not very good for flying to the USA. There is no artificial general vehicle that is optimal for every journey. Similarly, there is no such thing as artificial general intelligence. The idea is artificial general nonsense.

That doesn’t mean there aren’t different principles to intelligence we can look at, just as vehicles have principles that apply to them. When designing vehicles we need to think about air resistance, friction, power. We have developed solutions such as wheels, different types of engines and wings that are deployed across different vehicles to achieve different results.

Intelligence is similar. The notion of artificial general intelligence is fundamentally eugenic. It builds on Spearman’s term “general intelligence”, which is part of a body of literature that was looking to assess intelligence in the way we assess height, the objective then being to breed greater intelligences (Lyons, 2022).

Philosopher’s Stone

Figure: The Alchemist by Joseph Wright of Derby (1771). The picture depicts Hennig Brand discovering the element phosphorus when searching for the Philosopher’s Stone.

The philosopher’s stone is a mythical substance that can convert base metals to gold.

In our modern economy, automation has the same effect as the philosopher’s stone. During the industrial revolution, steel and steam replaced human manual labour. Today, silicon and electrons are being combined to replace human mental labour.

The Attention Economy

Human intelligence is locked-in. It’s bandwidth restricted. This makes it a bottleneck in the attention economy.

Herbert Simon on Information

What information consumes is rather obvious: it consumes the attention of its recipients. Hence a wealth of information creates a poverty of attention …

Simon (1971)

The attention economy was a phenomenon described in 1971 by the American computer scientist Herbert Simon. He saw the coming information revolution and wrote that a wealth of information would create a poverty of attention. Too much information means that human attention becomes the scarce resource, the bottleneck. It becomes the gold in the attention economy.

The power associated with control of information dates back to the invention of writing. By pressing reeds into clay tablets Sumerian scribes stored information and controlled the flow of information.

Figure: This is the drawing Dan was inspired to create for Chapter 9. It captures the core idea in the Great AI Fallacy, that over time it has been us adapting to the machine rather than the machine adapting to us.

See blog post on The Great AI Fallacy.

The Great AI Fallacy is the idea that the machine can adapt and respond to us, when in reality we find that it is us that have to adapt to the machine.

Human Capital Index

The World Bank’s human capital index is one area where many European countries lead internationally, or at least an area where they currently outperform both the USA and China. The index is a measure of the education and health of a population.

Technological progress disrupts existing systems. A new social contract is needed to smooth the transition and guard against rising inequality. Significant investments in human capital throughout a person’s lifecycle are vital to this effort.

World Bank (2019)

In the 2020 version of the index, the UK was ranked 11th, Italy 30th, the US 35th and China 45th.

Productivity Flywheel

Figure: The productivity flywheel suggests technical innovation is reinvested.

The productivity flywheel should return the gains released by productivity through funding. This relies on the economic value mapping the underlying value.

Inflation of Human Capital

This transformation creates efficiency. But it also devalues the skills that form the backbone of human capital and create a happy, healthy society. Had the alchemists ever discovered the philosopher’s stone, using it would have triggered mass inflation and devalued any reserves of gold. Similarly, our reserve of precious human capital is vulnerable to automation and devaluation in the artificial intelligence revolution. The skills we have learned, whether manual or mental, risk becoming redundant in the face of the machine.

Inflation Proof Human Capital

Will AI totally displace the human? Or is there any form, a core, an irreducible element of human attention that the machine cannot replace? If so, this would be a robust foundation on which to build our digital futures.

Uncertainty Principle

Unfortunately, when we seek it out, we are faced with a form of uncertainty principle. Machines rely on measurable outputs, meaning any aspect of human ability that can be quantified is at risk of automation. But the most essential aspects of humanity are the hardest to measure.

So, the closer we get to the atomic human the more difficult it is to measure the value of the associated human attention.

Homo Atomicus

We won’t find the atomic human in the percentage of A grades that our children are achieving at schools or the length of waiting lists we have in our hospitals. It sits behind all this. We see the atomic human in the way a nurse spends an extra few minutes ensuring a patient is comfortable or a bus driver pauses to allow a pensioner to cross the road or a teacher praises a struggling student to build their confidence.

We need to move away from homo economicus towards homo atomicus.

New Productivity Paradox

Thus we face a new productivity paradox. The classical tools of economic intervention cannot map hard-to-measure supply and demand of quality human attention. So how do we build a new economy that utilises our lead in human capital and delivers the digital future we aspire to?

One answer is to look at the human capital index. This measures the quality and quantity of the attention economy via the health and education of our population.

We need to value this and find a way to reinvest human capital, returning the value of the human back into the system when considering productivity gains from technology like AI.

This means a tighter mapping between what the public want and what the innovation economy delivers. It means more agile policy that responds to public dialogue with tangible solutions co-created with the people who are doing the actual work. It means, for example, freeing up a nurse’s time with technology tools and allowing them to spend that time with patients.

To deliver this, our academic institutions need to step up. Too often in the past we have been distant from the difficulties that society faces. We have been too remote from the real challenges of everyday lives — challenges that don’t make the covers of prestige science magazines. People are rightly angry that innovations like AI have yet to address the problems they face, including in health, social care and education.

Of course, universities cannot fix this on their own, but academics can operate as honest brokers that bridge gaps between public and private considerations, convene different groups and understand, celebrate and empower the contributions of individuals.

This requires people who are prepared to dedicate their time to improving each other’s lives, developing new best practices and sharing them with colleagues and coworkers.

To preserve our human capital and harness our potential, we need the AI alchemists to provide us with solutions that can serve both science and society.

Supply Chain of Ideas

The model here is a “supply chain of ideas” framework, particularly in the context of information technology and AI solutions like machine learning and large language models. The flow of ideas, from creation to application, is similar to how physical goods move through economic supply chains.

In the realm of IT solutions, there’s been an overemphasis on macro-economic “supply-side” stimulation - focusing on creating new technologies and ideas - without enough attention to the micro-economic “demand-side” - understanding and addressing real-world needs and challenges.

Imagining the supply chain rather than just the notion of the Innovation Economy allows the conceptualisation of the gaps between macro and micro economic issues, enabling a different way of thinking about process innovation.

Phrasing things in terms of a supply chain of ideas suggests that innovation requires both characterisation of the demand and the supply of ideas. This leads to four key elements:

- Multiple sources of ideas (diversity)

- Efficient delivery mechanisms

- Quick deployment capabilities

- Customer-driven prioritization

The next priority is mapping the demand for ideas to the supply of ideas. This is where much of our innovation system is failing. In supply chain optimisation a large effort is spent on understanding current stock and managing resources so that supply matches demand. This includes shaping the supply as well as managing it.

The objective is to create a system that can generate, evaluate, and deploy ideas efficiently and effectively, while ensuring that people’s needs and preferences are met. The customer here depends on the context - it could be the public, it could be a business, it could be a government department but very often it’s individual citizens. The loss of their voice in the innovation economy is a trigger for the gap between the innovation supply (at a macro level) and the innovation demand (at a micro level).

AI cannot replace atomic human

Figure: Opinion piece in the FT that describes the idea of a social flywheel to drive the targeted growth we need in AI innovation.

Attention Reinvestment Cycle

Figure: The attention flywheel focusses on reinvesting human capital.

While the traditional productivity flywheel focuses on reinvesting financial capital, the attention flywheel focuses on reinvesting human capital - our most precious resource in an AI-augmented world. This requires deliberately creating systems that capture the value of freed attention and channel it toward human-centered activities that machines cannot replicate.

Figure: Packhorse Bridge under Burbage Edge. This packhorse route climbs steeply out of Hathersage and heads towards Sheffield. Packhorses were the main means of transporting goods across the Peak District. The high cost of transport is one driver of the ‘smith’ model, where there is a local skilled person responsible for assembling or creating goods (e.g. a blacksmith).

On Sunday mornings in Sheffield, I often used to run across Packhorse Bridge in Burbage valley. The bridge is part of an ancient network of trails crossing the Pennines that, before Turnpike roads arrived in the 18th century, was the main way in which goods were moved. Given that the moors around Sheffield were home to sand quarries, tin mines, lead mines and the villages in the Derwent valley were known for nail and pin manufacture, this wasn’t simply movement of agricultural goods, but it was the infrastructure for industrial transport.

Those who led the packhorses were known as Jaggers, and leading out of the village of Hathersage is Jagger’s Lane, a trail that headed underneath Stanage Edge and into Sheffield.

The movement of goods from regions of supply to areas of demand is fundamental to our society. The physical infrastructure of the supply chain has evolved a great deal over the last 300 years.

Cromford

Figure: Richard Arkwright is regarded as the founder of the modern factory system. Factories exploit distribution networks to centralize production of goods. Arkwright located his factory in Cromford due to proximity to Nottingham Weavers (his market) and availability of water power from the tributaries of the Derwent river. When he first arrived there was almost no transportation network. Over the following 200 years The Cromford Canal (1790s), a Turnpike (now the A6, 1816-18) and the High Peak Railway (now closed, 1820s) were all constructed to improve transportation access as the factory blossomed.

Richard Arkwright is known as the father of the modern factory system. In 1771 he set up a mill for spinning cotton yarn in the village of Cromford, in the Derwent Valley. The Derwent Valley is relatively inaccessible. Raw cotton arrived in Liverpool from the US and India. It needed to be transported by packhorse across the bridleways of the Pennines. But Cromford was a good location due to its proximity to Nottingham, where weavers were consuming the finished thread, and the availability of water power from small tributaries of the Derwent river for Arkwright’s water frames, which automated the production of yarn from raw cotton.

By 1794 the Cromford Canal was opened to bring coal in to Cromford and give better transport to Nottingham. The construction of the canals was driven by the need to improve the transport infrastructure, facilitating the movement of goods across the UK. Canals, roads and railways were initially constructed because of the economic need to move goods: to improve the supply chain.

The A6 now passes through Cromford, but when Arkwright moved there, there was merely a track. The High Peak Railway was opened in 1832. It has now been converted to the High Peak Trail, but it remains the highest railway built in Britain.

Cooper (1991)

Distretti Industriali

Some inspiration can be found in historical industrial regions like 18th Century Emilia Romagna, the Black Country or the Sheffield steel making region. These regions combined interconnected dependencies, local communities and talent development, with craft culture being shared.

Wicked Problems

Figure: Society faces many wicked problems in health, education, security, and social care that require carefully deploying AI toward meaningful societal challenges rather than focusing on commercially appealing applications. (Illustration by Dan Andrews inspired by the Epilogue of “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews after reading the Epilogue of “The Atomic Human” book. The Epilogue discusses how we might deploy AI to address society’s most pressing challenges, and Dan’s drawing captures the various wicked problems we face and some of the initiatives that are looking to address them.

See blog post on Who is Stepping Up?

Example: Cambridge Approach

ai@cam is the flagship University mission that seeks to address these challenges. It recognises that development of safe and effective AI-enabled innovations requires a mix of expertise from across research domains, businesses, policy-makers, civil society, and from affected communities. ai@cam is setting out a vision for AI-enabled innovation that benefits science, citizens and society.

ai@cam

The ai@cam vision is being achieved in a manner that is modelled on other grass roots initiatives like Data Science Africa. By leveraging the University’s vibrant interdisciplinary research community, ai@cam has formed partnerships between researchers, practitioners, and affected communities that embed equity and inclusion. It is developing new platforms for innovation and knowledge transfer. It is delivering innovative interdisciplinary teaching and learning for students, researchers, and professionals. It is building strong connections between the University and national AI priorities.

We are working across the University to empower the diversity of expertise and capability we have to focus on these broad societal problems. In April 2022 we shared an ai@cam vision document that outlines these challenges for the University.

The University operates as both an engine of AI-enabled innovation and steward of those innovations.

AI is not a universal remedy. It is a set of tools, techniques and practices that, correctly deployed, can be leveraged to deliver societal benefit and mitigate social harm.

The initiative was funded in November 2022 with a £5M investment from the University.

The progress made so far has been across the University community. We have successfully engaged with members spanning more than 30 departments and institutes, bringing together academics, researchers, start-ups, and large businesses to collaborate on AI initiatives. The program has already supported six new funding bids and launched five interdisciplinary AI-deas projects that bring together diverse expertise to tackle complex challenges. The establishment of the Policy Lab has created a crucial bridge between research and policy-making. Additionally, through the Pioneer program, we have initiated 46 computing projects that are helping to build our technical infrastructure and capabilities.

How ai@cam is Addressing Innovation Challenges

1. Bridging Macro and Micro Levels

Challenge: There is often a disconnect between high-level AI research and real-world needs that must be addressed.

The AI-deas initiative represents an effort to bridge this gap by funding interdisciplinary projects that span 19 departments across 6 schools. This ensures diverse perspectives are brought to bear on pressing challenges. Projects focusing on climate change, mental health, and language equity demonstrate how macro-level AI capabilities can be effectively applied to micro-level societal needs.

Challenge: Academic insights often fail to translate into actionable policy changes.

The Policy Lab initiative addresses this by creating direct connections between researchers, policymakers, and the public, ensuring academic insights can influence policy decisions. The Lab produces accessible policy briefs and facilitates public dialogues. A key example is the collaboration with the Bennett Institute and Minderoo Centre, which resulted in comprehensive policy recommendations for AI governance.

2. Addressing Data, Compute, and Capability Gaps

Challenge: Organizations struggle to balance data accessibility with security and privacy concerns.

The data intermediaries initiative establishes trusted entities that represent the interests of data originators, helping to establish secure and ethical frameworks for data sharing and use. Alongside approaches for protecting data we need to improve our approach to processing data. Careful assessment of data quality and organizational data maturity ensures that data can be shared and used effectively. Together these approaches help to ensure that data can be used to serve science, citizens and society.

Challenge: Many researchers lack access to necessary computational resources for modern research.

The HPC Pioneer Project addresses this by providing access to the Dawn supercomputer, enabling 46 diverse projects across 20 departments to conduct advanced computational research. This democratization of computing resources ensures that researchers from various disciplines can leverage high-performance computing for their work. The ai@cam project also supports the ICAIN initiative, further strengthening the computational infrastructure available to researchers with a particular focus on emerging economies.

Challenge: There is a significant skills gap in applying AI across different academic disciplines.

The Accelerate Programme for Scientific Discovery addresses this through a comprehensive approach to building AI capabilities. Through a tiered training system that ranges from basic to advanced levels, the programme ensures that domain experts can develop the AI skills relevant to their field. The initiative particularly emphasizes peer-to-peer learning, creating sustainable communities of practice where researchers can share knowledge and experiences through “AI Clubs.”

The Accelerate Programme

Figure: The Accelerate Programme for Scientific Discovery covers research, education and training, engagement. Our aim is to bring about a step change in scientific discovery through AI. http://science.ai.cam.ac.uk

We’re now in a new phase of the development of computing, with rapid advances in machine learning. But we see some of the same issues – researchers across disciplines hope to make use of machine learning, but need access to skills and tools to do so, while the field of machine learning itself will need to develop new methods to tackle some complex, ‘real world’ problems.

It is with these challenges in mind that the Computer Lab has started the Accelerate Programme for Scientific Discovery. This new Programme is seeking to support researchers across the University to develop the skills they need to be able to use machine learning and AI in their research.

To do this, the Programme is developing three areas of activity:

- Research: we’re developing a research agenda that develops and applies cutting edge machine learning methods to scientific challenges, with three Accelerate Research fellows working directly on issues relating to computational biology, psychiatry, and string theory. While we’re concentrating on STEM subjects for now, in the longer term our ambition is to build links with the social sciences and humanities.

Progress so far includes:

- Recruited a core research team working on the application of AI in mental health, bioinformatics, healthcare, string theory, and complex systems.

- Created a research agenda and roadmap for the development of AI in science.

- Funded interdisciplinary projects, e.g. in first round:

  - Antimicrobial resistance in farming

  - Quantifying Design Trade-offs in Electricity-generation-focused Tokamaks using AI

  - Automated preclinical drug discovery in vivo using pose estimation

  - Causal Methods for Environmental Science Workshop

  - Automatic tree mapping in Cambridge

  - Acoustic monitoring for biodiversity conservation

  - AI, mathematics and string theory

  - Theoretical, Scientific, and Philosophical Perspectives on Biological Understanding in the age of Artificial Intelligence

  - AI in pathology: optimising a classifier for digital images of duodenal biopsies

- Teaching and learning: building on the teaching activities already delivered through University courses, we’re creating a pipeline of learning opportunities to help PhD students and postdocs better understand how to use data science and machine learning in their work.

Progress so far includes:

- Brought over 250 participants from over 30 departments through tailored data science and machine learning for science training (Data Science Residency and Machine Learning Academy);

- Convened workshops with over 80 researchers across the University on the development of data pipelines for science;

- Delivered University courses to over 100 students in Advanced Data Science and Machine Learning and the Physical World;

- Online training course in Python and Pandas accessed by over 380 researchers.

- Engagement: we hope that Accelerate will help build a community of researchers working across the University at the interface of machine learning and the sciences, helping to share best practice and new methods, and support each other in advancing their research. Over the coming years, we’ll be running a variety of events and activities in support of this.

Progress so far includes:

- Launched a Machine Learning Engineering Clinic that has supported over 40 projects across the University with MLE troubleshooting and advice;

- Hosted and participated in events reaching over 300 people in Cambridge;

- Led international workshops at Dagstuhl and Oberwolfach, convening over 60 leading researchers;

- Engaged over 70 researchers through outreach sessions and workshops with the School of Clinical Medicine, the Faculty of Education, Cambridge Digital Humanities and the School of Biological Sciences.

3. Stakeholder Engagement and Feedback Mechanisms

Challenge: AI development often proceeds without adequate incorporation of public perspectives and concerns.

Our public dialogue work, conducted in collaboration with the Kavli Centre for Ethics, Science, and the Public, creates structured spaces for public dialogue about AI’s potential benefits and risks. The approach ensures that diverse voices and perspectives are heard and considered in AI development.

Challenge: AI initiatives often fail to align with diverse academic needs across institutions.

Cross-University Workshops serve as vital platforms for alignment, bringing together faculty and staff from different departments to discuss AI teaching and learning strategies. By engaging professional services staff, the initiative ensures that capability building extends beyond academic departments to support staff who play key roles in implementing and maintaining AI systems.

4. Flexible and Adaptable Approaches

Challenge: Traditional rigid, top-down research agendas often fail to address real needs effectively.

The AI-deas Challenge Development program empowers researchers to identify and propose challenge areas based on their expertise and understanding of field needs. Through collaborative workshops, these initial ideas are refined and developed, ensuring that research directions emerge organically from the academic community while maintaining alignment with broader strategic goals.

5. Phased Implementation and Realistic Planning

Challenge: Ambitious AI initiatives often fail due to unrealistic implementation timelines and expectations.

The overall strategy emphasizes careful, phased deployment to ensure sustainable success. Beginning with pilot programs like AI-deas and the Policy Lab, the approach allows for testing and refinement of methods before broader implementation. This measured approach enables the incorporation of lessons learned from early phases into subsequent expansions.

6. Independent Oversight and Diverse Perspectives

Challenge: AI initiatives often lack balanced guidance and oversight from diverse perspectives.

The Steering Group provides crucial oversight through representatives from various academic disciplines and professional services. Working with a cross-institutional team, it ensures balanced decision-making that considers multiple perspectives. The group maintains close connections with external initiatives like ELLIS, ICAIN, and Data Science Africa, enabling the university to benefit from and contribute to broader AI developments.

7. Addressing the Innovation Supply Chain

Challenge: Academic innovations often struggle to connect with and address industry needs effectively.

The Industry Engagement initiative develops meaningful industrial partnerships through collaboration with the Strategic Partnerships Office, helping translate research into real-world solutions. The planned sciencepreneurship initiative aims to create a structured pathway from academic research to entrepreneurial ventures, helping ensure that innovations can effectively reach and benefit society.

Innovation Economy Conclusion

ai@cam’s approach aims to address the macro-micro disconnects in AI innovation through several key strategies. We are building bridges between macro and micro levels, fostering interdisciplinary collaboration, engaging diverse stakeholders and voices, and providing crucial resources and training. Through these efforts, ai@cam is working to create a more integrated and effective AI innovation ecosystem.

Our implementation approach emphasizes several critical elements learned from past IT implementation failures. We focus on flexibility to adapt to changing needs, phased rollout of initiatives to manage risk, establishing continuous feedback loops for improvement, and maintaining a learning mindset throughout the process.

Looking to the future, we recognize that AI technologies and their applications will continue to evolve rapidly. This evolution requires strategic agility and a continued focus on effective implementation. We will need to remain adaptable, continuously assessing and adjusting our strategies while working to bridge capability gaps between high-level AI capabilities and on-the-ground implementation challenges.

Thanks!

For more information on these subjects and more you might want to check the following resources.

- company: Trent AI

- book: The Atomic Human

- twitter: @lawrennd

- podcast: The Talking Machines

- newspaper: Guardian Profile Page

- blog: http://inverseprobability.com