Jaynes’ World

Abstract

The relationship between physical systems and intelligence has long fascinated researchers in computer science and physics. This talk explores fundamental connections between thermodynamic systems and intelligent decision-making through the lens of free energy principles.

We examine how concepts from statistical mechanics, particularly the relationship between total energy, free energy, and entropy, might provide novel insights into the nature of intelligence and learning. By drawing parallels between physical systems and information processing, we consider how measurement and observation can be viewed as processes that modify available energy. The discussion encompasses how model approximations and uncertainties might be understood through thermodynamic analogies, and explores the implications of treating intelligence as an energy-efficient state-change process.

While these connections remain speculative, they offer a potential shared language for discussing the emergence of natural laws and societal systems through the lens of information.

Hydrodynamica

When Laplace spoke of the curve of a simple molecule of air, he may well have been thinking of Daniel Bernoulli (1700-1782). Daniel Bernoulli was one name in a prodigious family. His father and brother were both mathematicians. Daniel’s main work was known as Hydrodynamica.

Figure: Daniel Bernoulli’s Hydrodynamica published in 1738. It was one of the first works to use the idea of conservation of energy. It used Newton’s laws to predict the behaviour of gases.

Daniel Bernoulli described a kinetic theory of gases, but it wasn’t until 170 years later that these ideas were verified, after Einstein had proposed a model of Brownian motion that was experimentally confirmed by Jean Baptiste Perrin.

Figure: Daniel Bernoulli’s chapter on the kinetic theory of gases; for a review of the context of this chapter see Mikhailov (n.d.). For 1738 this is extraordinary thinking. The kinetic theory of gases wouldn’t become fully accepted in physics until 1908, when a model of Einstein’s was verified by Jean Baptiste Perrin.

Entropy Billiards

<canvas id="multiball-canvas" width="700" height="500" style="border:1px solid black;display:block;width:100%"></canvas><div>Velocity-bin entropy: <output id="multiball-entropy"></output></div>

<div id="multiball-histogram-canvas" style="width:100%;height:250px"></div>

Figure: Bernoulli’s simple kinetic models of gases assume that the molecules of air operate like billiard balls. The displayed entropy is the Shannon entropy of the observed velocity histogram (a coarse-grained proxy, not full thermodynamic entropy).
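The velocity-bin entropy in the caption can be sketched as follows. This is a minimal sketch, assuming 2-D velocities with unit-variance Gaussian components and an arbitrary choice of 20 equal-width speed bins. The bin count and the function name `velocity_bin_entropy` are illustrative assumptions, not the settings used by the animation.

```python
import numpy as np

def velocity_bin_entropy(speeds, n_bins=20, max_speed=None):
    """Shannon entropy (nats) of a histogram of speeds.

    A coarse-grained proxy: its value depends on the assumed binning and it
    is not the full thermodynamic entropy of the gas.
    """
    speeds = np.asarray(speeds, dtype=float)
    if max_speed is None:
        max_speed = speeds.max()
    counts, _ = np.histogram(speeds, bins=n_bins, range=(0.0, max_speed))
    p = counts[counts > 0] / counts.sum()   # drop empty bins: 0 log 0 = 0
    return float(-np.sum(p * np.log(p)))

rng = np.random.default_rng(0)
velocities = rng.standard_normal((1000, 2))   # assumed 2-D Gaussian velocity components
speeds = np.linalg.norm(velocities, axis=1)
print(velocity_bin_entropy(speeds))           # bounded above by log(20), about 3.0
```

If every ball had the same speed the histogram would occupy one bin and the entropy would be zero. Spreading speeds over more bins raises it towards the `log(n_bins)` ceiling.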

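```python
import numpy as np

# 10,000 standard normal samples (e.g. one velocity component of a gas, in suitable units)
p = np.random.randn(10000, 1)

# Standard normal density evaluated on a grid, for comparison with the samples
xlim = [-4, 4]
x = np.linspace(xlim[0], xlim[1], 200)
y = 1/np.sqrt(2*np.pi)*np.exp(-0.5*x*x)
```

Another important figure for Cambridge, James Clerk Maxwell, was the first to derive the probability distribution that results from small balls banging together in this manner. In doing so, he founded the field of statistical physics.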

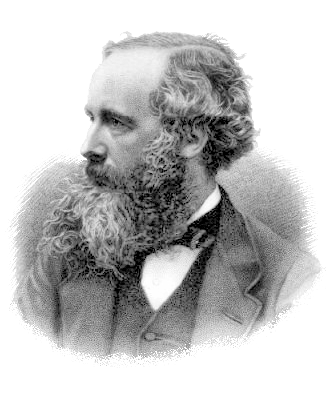

Figure: James Clerk Maxwell (1831-1879) derived the distribution of velocities of particles in an ideal gas (elastic fluid).

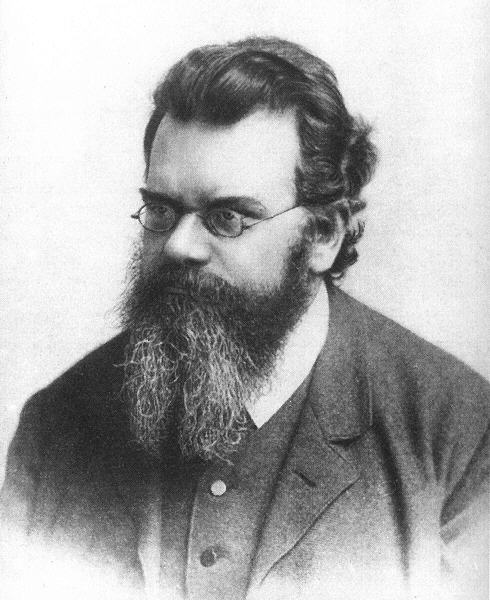

Figure: James Clerk Maxwell (1831-1879), Ludwig Boltzmann (1844-1906), Josiah Willard Gibbs (1839-1903)

Many of the ideas of early statistical physicists were rejected by a cadre of physicists who didn’t believe in the notion of a molecule. The stress of trying to have his ideas established caused Boltzmann to commit suicide in 1906, only two years before the same ideas became widely accepted.

Figure: Boltzmann’s paper Boltzmann (n.d.) which introduced the relationship between entropy and probability. A translation with notes is available in Sharp and Matschinsky (2015).

The important point about the uncertainty being represented here is that it is not genuine stochasticity but a lack of knowledge about the system. The techniques proposed by Maxwell, Boltzmann and Gibbs allow us to exactly represent the state of the system through a set of parameters that represent the sufficient statistics of the physical system. We know these values as the volume, temperature, and pressure. The challenge for us, when approximating the physical world with the techniques we will use, is that we will have to sit somewhere between the deterministic and purely stochastic worlds that these different scientists described.

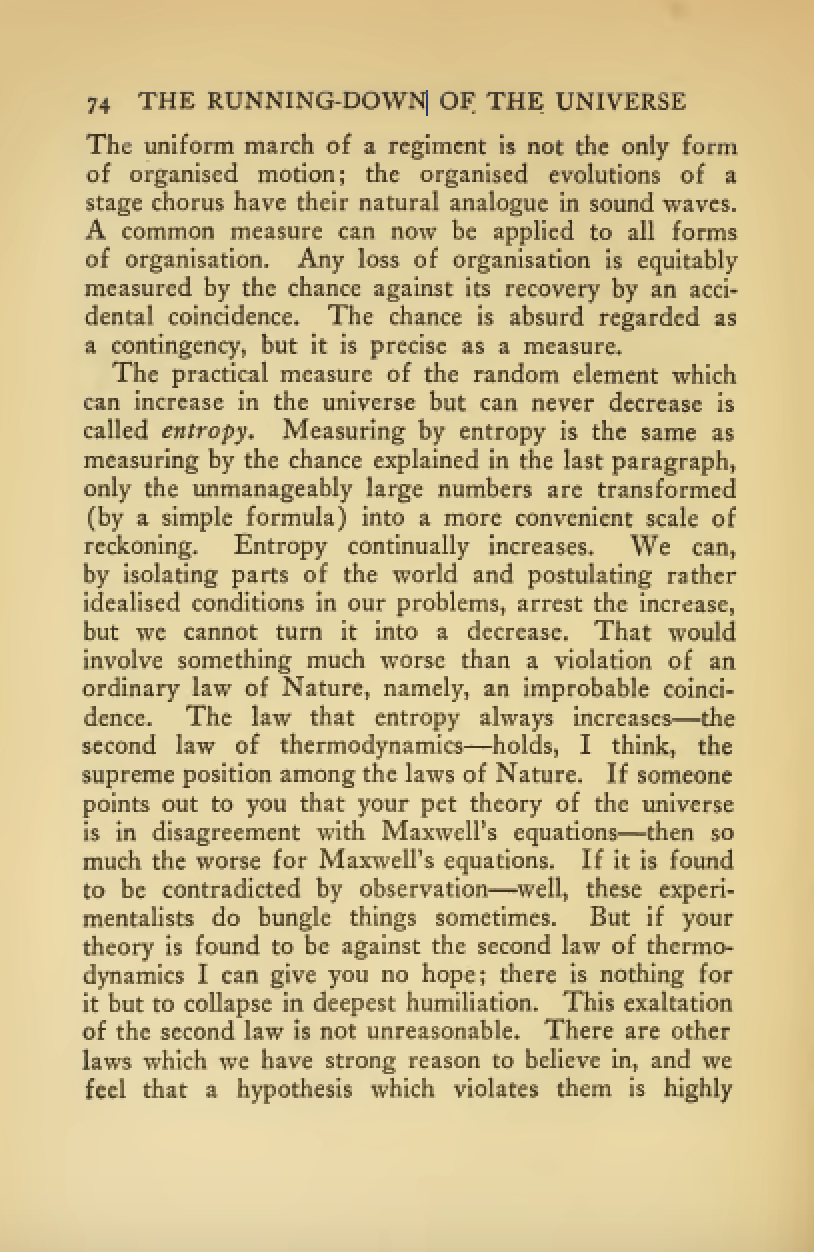

One ongoing characteristic of people who study probability and uncertainty is the confidence with which they hold opinions about it. Another leader of the Cavendish laboratory expressed his support of the second law of thermodynamics (which can be proven through the work of Gibbs/Boltzmann) with an emphatic statement at the beginning of his book.

Figure: Eddington’s book on the Nature of the Physical World (Eddington, 1929)

The same Eddington is also famous for dismissing the ideas of a young Chandrasekhar, who had come to Cambridge to study in the Cavendish lab. Chandrasekhar demonstrated the limit at which a star would collapse under its own weight to a singularity, but when he presented the work to Eddington, Eddington was dismissive, suggesting that there “must be some natural law that prevents this abomination from happening.”

Figure: Chandrasekhar (1910-1995) derived the limit at which a star collapses in on itself. Eddington’s confidence in the second law may have been what drove him to dismiss Chandrasekhar’s ideas, humiliating a young scientist who would later receive a Nobel Prize for the work.

Figure: Eddington makes his feelings about the primacy of the second law clear. This primacy is perhaps because the second law can be demonstrated mathematically, building on the work of Maxwell, Gibbs and Boltzmann. Eddington (1929)

Presumably he meant that the creation of a black hole seemed to transgress the second law of thermodynamics. Hawking later showed that black holes do evaporate, but the time scales on which this evaporation occurs are many orders of magnitude longer than those of other processes in the universe.

Maxwell’s Demon

Maxwell’s demon is a thought experiment described by James Clerk Maxwell in his book, Theory of Heat (Maxwell, 1871) on page 308.

But if we conceive a being whose faculties are so sharpened that he can follow every molecule in its course, such a being, whose attributes are still as essentially finite as our own, would be able to do what is at present impossible to us. For we have seen that the molecules in a vessel full of air at uniform temperature are moving with velocities by no means uniform, though the mean velocity of any great number of them, arbitrarily selected, is almost exactly uniform. Now let us suppose that such a vessel is divided into two portions, A and B, by a division in which there is a small hole, and that a being, who can see the individual molecules, opens and closes this hole, so as to allow only the swifter molecules to pass from A to B, and only the slower ones to pass from B to A. He will thus, without expenditure of work, raise the temperature of B and lower that of A, in contradiction to the second law of thermodynamics.

James Clerk Maxwell in Theory of Heat (Maxwell, 1871) page 308

He goes on to say:

This is only one of the instances in which conclusions which we have drawn from our experience of bodies consisting of an immense number of molecules may be found not to be applicable to the more delicate observations and experiments which we may suppose made by one who can perceive and handle the individual molecules which we deal with only in large masses.

Figure: Maxwell’s demon was designed to highlight the statistical nature of the second law of thermodynamics.

<canvas id="maxwell-canvas" width="700" height="500" style="border:1px solid black;display:block;width:100%"></canvas><div>Velocity-bin entropy: <output id="maxwell-entropy"></output></div>

<div id="maxwell-histogram-canvas" style="width:100%;height:250px"></div>

Figure: Maxwell’s Demon. The demon decides balls are either cold (blue) or hot (red) according to their velocity. Balls are allowed to pass the green membrane from right to left only if they are cold, and from left to right only if they are hot. The displayed entropy is the Shannon entropy of the velocity histogram (a coarse-grained proxy, not full thermodynamic entropy).
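The demon's sorting rule from the caption can be sketched in a few lines. This minimal sketch makes several assumptions of our own:

- molecules have unit mass and 2-D Gaussian velocity components;
- the (hypothetical) hot/cold threshold is the median kinetic energy;
- a random 10% of molecules reach the membrane at each step;
- positions and collisions are ignored.

It shows the mean kinetic energy on each side, a proxy for temperature, diverging. The total kinetic energy is unchanged, since the demon only opens and closes the hole.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 2000
v = rng.standard_normal((n, 2))            # assumed 2-D Gaussian velocities, unit mass
ke = 0.5 * np.sum(v**2, axis=1)            # kinetic energy of each molecule
hot = ke > np.median(ke)                   # hypothetical threshold: median energy
left = rng.random(n) < 0.5                 # start with a random half on each side

def mean_ke(mask):
    """Mean kinetic energy on one side, used as a temperature proxy."""
    return ke[mask].mean()

total_before = ke.sum()
gap_before = mean_ke(~left) - mean_ke(left)

for _ in range(50):
    at_hole = rng.random(n) < 0.1          # molecules that reach the membrane this step
    # Demon's rule: hot molecules may pass left -> right, cold ones right -> left
    to_right = at_hole & left & hot
    to_left = at_hole & ~left & ~hot
    left[to_right] = False
    left[to_left] = True

gap_after = mean_ke(~left) - mean_ke(left)
energy_after = ke[left].sum() + ke[~left].sum()
print(f"temperature gap (right - left): {gap_before:.3f} -> {gap_after:.3f}")
print("total kinetic energy unchanged:", np.isclose(energy_after, total_before))
```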

Maxwell’s demon allows us to connect thermodynamics with information theory (see e.g. Hosoya et al. (2015); Hosoya et al. (2011); Bub (2001); Brillouin (1951); Szilard (1929)). The connection arises from a fundamental link between information erasure and energy consumption (Landauer, 1961).

See also Alemi and Fischer (2019).

Information Theory and Thermodynamics

Information theory provides a mathematical framework for quantifying information. Many of information theory’s core concepts parallel those found in thermodynamics. The theory was developed by Claude Shannon, who spoke extensively with MIT’s Norbert Wiener while it was in development (Conway and Siegelman, 2005). Wiener’s own ideas about information were inspired by Willard Gibbs, one of the pioneers of the mathematical understanding of free energy and entropy. Information and energy have thus been linked, through deep connections between physical systems and information processing, from the start.

Entropy

Shannon’s entropy measures the uncertainty or unpredictability of information content. This mathematical formulation is inspired by thermodynamic entropy, which describes the dispersal of energy in physical systems. Both concepts quantify the number of possible states and their probabilities.

Figure: Maxwell’s demon thought experiment illustrates the relationship between information and thermodynamics.

In thermodynamics, free energy represents the energy available to do work. A system naturally evolves to minimize its free energy, finding equilibrium between total energy and entropy. Free energy principles are also pervasive in variational methods in machine learning. They emerge from Bayesian approaches to learning and have been heavily promoted by e.g. Karl Friston as a model for the brain.

The relationship between entropy and free energy can be explored through the Legendre transform. This is most easily reviewed if we restrict ourselves to distributions in the exponential family.

Exponential Family

The exponential family has the form \[ \rho(Z) = h(Z) \exp\left(\boldsymbol{\theta}^\top T(Z) - A(\boldsymbol{\theta})\right) \] where \(h(Z)\) is the base measure, \(\boldsymbol{\theta}\) are the natural parameters, \(T(Z)\) are the sufficient statistics and \(A(\boldsymbol{\theta})\) is the log partition function. Taking the expectation of \(-\log \rho(Z)\) and using \(\nabla_\boldsymbol{\theta}A(\boldsymbol{\theta}) = E_{\rho(Z)}[T(Z)]\), its entropy can be computed as \[ S(Z) = A(\boldsymbol{\theta}) - \boldsymbol{\theta}^\top \nabla_\boldsymbol{\theta}A(\boldsymbol{\theta}) - E_{\rho(Z)}\left[\log h(Z)\right], \] where \(E_{\rho(Z)}[\cdot]\) is the expectation under the distribution \(\rho(Z)\).
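As a quick numerical check of the entropy formula, the Bernoulli distribution can be written in exponential-family form with \(T(z)=z\), \(h(z)=1\) and \(A(\theta)=\log(1+e^\theta)\). The base-measure term then vanishes and \(\nabla_\theta A(\theta)\) is the logistic sigmoid. The sketch below is our own choice of example. It compares the formula with the direct entropy \(-\sum_z \rho(z)\log\rho(z)\).

```python
import numpy as np

def log_partition(theta):
    """A(theta) = log(1 + exp(theta)) for the Bernoulli family."""
    return np.logaddexp(0.0, theta)

def mean_param(theta):
    """grad A(theta) = E[T(Z)] = P(Z = 1), the logistic sigmoid."""
    return 1.0 / (1.0 + np.exp(-theta))

def entropy_expfam(theta):
    # S = A - theta * grad A - E[log h]; here h(z) = 1, so the last term is zero
    return log_partition(theta) - theta * mean_param(theta)

def entropy_direct(theta):
    p = mean_param(theta)
    return -(p * np.log(p) + (1 - p) * np.log(1 - p))

thetas = np.linspace(-5, 5, 11)
print(np.max(np.abs(entropy_expfam(thetas) - entropy_direct(thetas))))
```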

Available Energy

Work through Measurement

In machine learning and Bayesian inference, the Markov blanket of a variable is the set of variables that, once conditioned on, render it conditionally independent of all the remaining variables. To introduce this idea into our information system, we first split the system into two parts, the variables, \(X\), and the memory \(M\).

The variables are the portion of the system that is stochastically evolving over time. The memory is a low entropy partition of the system that will give us knowledge about this evolution.

We can now write the joint entropy of the system in terms of the mutual information between the variables and the memory. \[ S(Z) = S(X,M) = S(X|M) + S(M) = S(X) - I(X;M) + S(M). \] This gives us the first hint at the connection between information and energy.

If \(M\) is viewed as a measurement, then the change in entropy of the system before and after measurement is given by \(S(X|M) - S(X)\), which is \(-I(X;M)\). This implies that measurement increases the amount of available energy we can obtain from the system (Parrondo et al., 2015).

The difference in available energy, measured in units of \(k_BT\), is given by \[ \Delta A = A(X|M) - A(X) = I(X;M), \] where we note that the resulting system is no longer in thermodynamic equilibrium due to the low entropy of the memory.
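The entropy decomposition and the measurement-induced drop in entropy can be checked on any small discrete joint distribution. This sketch assumes an arbitrary random joint \(\rho(x, m)\) over 4 system states and 3 memory states; the sizes are chosen for illustration. It computes \(S(X|M)\) and \(I(X;M)\) directly from their definitions, then confirms \(S(X,M) = S(X|M) + S(M) = S(X) - I(X;M) + S(M)\).

```python
import numpy as np

rng = np.random.default_rng(2)
joint = rng.random((4, 3))           # hypothetical rho(x, m): 4 states of X, 3 of M
joint /= joint.sum()

p_x = joint.sum(axis=1)              # marginal rho(x)
p_m = joint.sum(axis=0)              # marginal rho(m)

def entropy(p):
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))

S_XM = entropy(joint.ravel())
S_X, S_M = entropy(p_x), entropy(p_m)

# Conditional entropy from its definition: -sum rho(x, m) log rho(x | m)
S_X_given_M = float(-np.sum(joint * np.log(joint / p_m[None, :])))

# Mutual information from its definition
I_XM = float(np.sum(joint * np.log(joint / np.outer(p_x, p_m))))

print(S_XM, S_X_given_M + S_M, S_X - I_XM + S_M)
print("entropy change on measurement, S(X|M) - S(X):", S_X_given_M - S_X)
```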

The Animal Game

The Entropy Game is a framework for understanding efficient uncertainty reduction. To start, think of finding the optimal strategy for identifying an unknown entity by asking the minimum number of yes/no questions.

The 20 Questions Paradigm

In the game of 20 Questions, player one (Alice) thinks of an object and player two (Bob) must identify it by asking at most 20 yes/no questions. The optimal strategy is to divide the possibility space in half with each question. This binary search approach ensures maximum information gain with each inquiry and can distinguish between \(2^{20}\), or about a million, different objects.

Figure: The optimal strategy in the Entropy Game resembles a binary search, dividing the search space in half with each question.
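The halving strategy is a binary search over the candidate objects. This sketch assumes the objects are indexed \(0, \dots, N-1\) and that each question has the form "is it in the lower half of what remains?". The `ask_questions` helper is hypothetical. It counts how many questions are needed to pin the object down.

```python
import math

def ask_questions(secret, n_objects):
    """Identify `secret` in range(n_objects) by halving; return the number of questions."""
    lo, hi = 0, n_objects          # remaining candidates are lo, ..., hi - 1
    questions = 0
    while hi - lo > 1:
        mid = (lo + hi) // 2
        questions += 1
        if secret < mid:           # "Is it one of objects lo, ..., mid - 1?"
            hi = mid
        else:
            lo = mid
    assert lo == secret
    return questions

n = 2**20                          # 1,048,576 objects
worst = max(ask_questions(s, n) for s in (0, 1, n // 3, n - 1))
print(n, worst, math.ceil(math.log2(n)))
```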

Entropy Reduction and Decisions

From an information-theoretic perspective, decisions can be taken in a way that efficiently reduces entropy, our uncertainty about the state of the world. Each observation or action an intelligent agent takes should maximize expected information gain, optimally reducing uncertainty given available resources.

The entropy before the question is \(S(X)\). The entropy after the question is \(S(X|M)\). The information gain is the difference between the two, \(I(X;M) = S(X) - S(X|M)\). Optimal decision making systems maximize this information gain per unit cost.
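Consider a uniform prior over \(N\) objects and a yes/no question that splits them into groups of size \(k\) and \(N-k\). The question gains \(I(X;M) = S(X) - S(X|M)\) bits, which works out to the binary entropy of \(k/N\). The sketch below uses hypothetical helper names. It computes the gain directly from the two entropies and shows that the even split, \(k = N/2\), gives the maximum of one bit.

```python
import numpy as np

def binary_entropy(q):
    """Entropy in bits of a yes/no answer with P(yes) = q."""
    if q in (0.0, 1.0):
        return 0.0
    return float(-(q * np.log2(q) + (1 - q) * np.log2(1 - q)))

def information_gain(n, k):
    """I(X;M) = S(X) - S(X|M) for a question answered 'yes' by k of n equally likely objects."""
    s_before = np.log2(n)
    # After the answer, the object is uniform over k (yes) or n - k (no) candidates
    s_after = sum((size / n) * np.log2(size) for size in (k, n - k) if size > 0)
    return float(s_before - s_after)

n = 64
gains = [information_gain(n, k) for k in range(n + 1)]
best_k = int(np.argmax(gains))
print(best_k, gains[best_k])                              # the even split, k = 32, gives 1 bit
print(np.isclose(information_gain(n, 16), binary_entropy(16 / n)))
```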

Thermodynamic Parallels

The entropy game connects decision-making to thermodynamics.

This perspective suggests a profound connection: intelligence might be understood as a special case of systems that efficiently extract, process, and utilize free energy from their environments, with thermodynamic principles setting fundamental constraints on what’s possible.

Information Engines: Intelligence as Energy Efficiency

The entropy game shows some parallels between thermodynamics and measurement. This allows us to imagine information engines, simple systems that convert information to energy. This is our first simple model of intelligence.

Measurement as a Thermodynamic Process: Information-Modified Second Law

The second law of thermodynamics was generalised to include the effect of measurement by Sagawa and Ueda (2008). They showed that the maximum extractable work from a system can be increased by \(k_BTI(X;M)\), where \(k_B\) is Boltzmann’s constant, \(T\) is temperature and \(I(X;M)\) is the information gained by making a measurement, \(M\), \[ I(X;M) = \sum_{x,m} \rho(x,m) \log \frac{\rho(x,m)}{\rho(x)\rho(m)}, \] where \(\rho(x,m)\) is the joint probability of the system and measurement (see e.g. eq 14 in Sagawa and Ueda (2008)). This can be written as \[ W_\text{ext} \leq - \Delta\mathcal{F} + k_BTI(X;M), \] where the extractable work, \(W_\text{ext}\), is upper bounded by the negative of the change in free energy, \(\Delta \mathcal{F}\), plus the energy gained from measurement, \(k_BTI(X;M)\). This is the information-modified second law.

The measurement itself can be seen as a thermodynamic process. In principle, measurement, like computation, can be reversible. In practice, the process of measurement is likely to erode the free energy somewhat, but as long as the energy gained from information, \(k_BTI(X;M)\), is greater than that spent in measurement, the process can be thermodynamically efficient.

The modified second law shows that the maximum additional extractable work is proportional to the information gained. So information acquisition creates extractable work potential. Thermodynamic consistency is maintained by properly accounting for information-entropy relationships.
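A Szilard-style example makes the bound concrete. This is a minimal sketch under assumptions of our own:

- a single molecule is equally likely to be in the left or right half of a box (\(X\));
- the measurement \(M\) reports the wrong half with error probability \(\epsilon\);
- the cycle has \(\Delta\mathcal{F} = 0\);
- the temperature is \(T = 300\) K.

It evaluates \(I(X;M)\) from the joint distribution using the formula above, together with the resulting bound \(W_\text{ext} \leq k_BTI(X;M)\).

```python
import numpy as np

K_B = 1.380649e-23  # Boltzmann's constant, J/K

def mutual_information(joint):
    """I(X;M) in nats from a joint probability table rho(x, m)."""
    p_x = joint.sum(axis=1, keepdims=True)
    p_m = joint.sum(axis=0, keepdims=True)
    mask = joint > 0                      # 0 log 0 = 0
    return float(np.sum(joint[mask] * np.log((joint / (p_x * p_m))[mask])))

def szilard_joint(eps):
    """X uniform over {left, right}; M reports the wrong side with probability eps."""
    return 0.5 * np.array([[1 - eps, eps],
                           [eps, 1 - eps]])

T = 300.0  # assumed temperature in kelvin
for eps in (0.0, 0.1, 0.5):
    info = mutual_information(szilard_joint(eps))
    print(f"eps={eps}: I={info:.4f} nats, max extra work={K_B * T * info:.3e} J")
```

A perfect measurement yields \(\log 2\) nats, recovering the familiar \(k_BT\log 2\) per bit. A measurement no better than a coin flip (\(\epsilon = 0.5\)) yields nothing.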

Efficacy of Feedback Control

Sagawa and Ueda extended this relationship to provide a generalised Jarzynski equality for feedback processes (Sagawa and Ueda, 2010). The Jarzynski equality is an important result from nonequilibrium thermodynamics. It relates the exponential average of the work done across an ensemble of trajectories to the free energy difference between initial and final states (Jarzynski, 1997), \[ \left\langle \exp\left(-\frac{W}{k_B T}\right) \right\rangle = \exp\left(-\frac{\Delta\mathcal{F}}{k_BT}\right), \] where \(W\) is the work done along a single trajectory, \(\langle \cdot \rangle\) denotes the average over the ensemble of trajectories, \(\Delta\mathcal{F}\) is the change in free energy, \(k_B\) is Boltzmann’s constant, and \(T\) is the temperature. Sagawa and Ueda extended this equality to include information gain from measurement (Sagawa and Ueda, 2010), \[ \left\langle \exp\left(-\frac{W}{k_B T}\right) \exp\left(\frac{\Delta\mathcal{F}}{k_BT}\right) \exp\left(-\mathcal{I}(X;M)\right)\right\rangle = 1, \] where \(\mathcal{I}(X;M) = \log \frac{\rho(X|M)}{\rho(X)}\) is the information gain from measurement. The mutual information is recovered as the average information gain, \(I(X;M) = \left\langle \mathcal{I}(X;M) \right\rangle\).
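The Jarzynski equality can be checked by Monte Carlo for a case with a known answer. Assume, for illustration only, Gaussian work values \(W \sim \mathcal{N}(\mu, \sigma^2)\) measured in units of \(k_BT\). The equality then gives \(\Delta\mathcal{F} = \mu - \sigma^2/2\), which sits below the average work \(\langle W \rangle = \mu\) as the second law requires. The sketch estimates \(\Delta\mathcal{F}\) from samples with \(\mu = 2\) and \(\sigma = 1\).

```python
import numpy as np

rng = np.random.default_rng(3)
mu, sigma = 2.0, 1.0                      # assumed work distribution, units of k_B T
W = rng.normal(mu, sigma, size=1_000_000)

# Jarzynski: <exp(-W)> = exp(-dF), so dF = -log <exp(-W)>  (with k_B T = 1)
dF_estimate = -np.log(np.mean(np.exp(-W)))
dF_exact = mu - sigma**2 / 2              # closed form for Gaussian work

print(dF_estimate, dF_exact, W.mean())    # mean work exceeds dF: dissipated work is non-negative
```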

Sagawa and Ueda introduce an efficacy term that captures the effect of feedback on the system. They note that, in the presence of feedback, \[ \left\langle \exp\left(-\frac{W}{k_B T}\right) \exp\left(\frac{\Delta\mathcal{F}}{k_BT}\right)\right\rangle = \gamma, \] where \(\gamma\) is the efficacy.

Channel Coding Perspective on Memory

When viewing \(M\) as an information channel between past and future states, Shannon’s channel coding theorems apply (Shannon, 1948). The channel capacity \(C\) represents the maximum rate of reliable information transmission, \[ C = \max_{\rho(M)} I(X_1;M), \] and for a memory of \(n\) bits we have \[ C \leq n, \] as the mutual information is upper bounded by the entropy of \(\rho(M)\), which is at most \(n\) bits.
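The maximisation over \(\rho(M)\) can be seen in the simplest case. This sketch assumes, for illustration, a one-bit memory read out through a noisy channel that flips the stored bit with probability \(p\). This binary symmetric channel is our choice, not the document's model. A grid search over \(q = \rho(M=1)\) recovers the known capacity \(1 - H_b(p)\) bits, which never exceeds the \(n = 1\) bit of memory.

```python
import numpy as np

def h2(q):
    """Binary entropy in bits; assumes 0 < q < 1."""
    return -(q * np.log2(q) + (1 - q) * np.log2(1 - q))

def mutual_info_bsc(q, p):
    """I(X1; M) in bits when M ~ Bernoulli(q) is read out with flip probability p."""
    r = q * (1 - p) + (1 - q) * p      # P(X1 = 1), always strictly inside (0, 1)
    return h2(r) - h2(p)               # H(X1) - H(X1 | M)

p = 0.1                                # assumed flip probability
qs = np.linspace(0.0, 1.0, 1001)       # candidate input distributions rho(M)
rates = mutual_info_bsc(qs, p)
capacity = rates.max()

print(qs[rates.argmax()], capacity, 1 - h2(p))   # capacity is at most n = 1 bit
```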

This relationship seems to align with Ashby’s Law of Requisite Variety (Ashby, 1952, p. 229), which states that a control system must have at least as much ‘variety’ as the system it aims to control. In the context of memory systems, this means that to maintain temporal correlations effectively, the memory’s state space must be at least as large as the information content it needs to preserve. This provides a lower bound on the necessary memory capacity that complements the bound we get from Shannon for channel capacity.

This perspective can help determine the memory size required to maintain temporal correlations, suggest optimal coding strategies, and identify fundamental limits on how well temporal correlations can be preserved.

Decomposition into Past and Future

Model Approximations and Thermodynamic Efficiency

Intelligent systems must balance measurement against energy efficiency and time requirements. A perfect model of the world would require infinite computational resources and speed, so approximations are necessary. This leads to uncertainties. Thermodynamics might be thought of as the physics of uncertainty: at equilibrium, thermodynamic systems find states that minimize free energy, which at fixed average energy is equivalent to maximising entropy.

Markov Blanket

To introduce some structure into the model, we split \(X\) into \(X_0\), the past and present of the system, and \(X_1\), its future. The conditional mutual information \(I(X_0;X_1|M)\) is zero if \(X_1\) and \(X_0\) are independent conditioned on \(M\). In that case \(M\) acts as a Markov blanket separating the past from the future.
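The Markov-blanket condition can be checked numerically. This sketch assumes small discrete variables and builds a joint \(\rho(x_0, m, x_1) = \rho(x_0)\rho(m|x_0)\rho(x_1|m)\), in which the future depends on the past only through the memory. The conditional mutual information then vanishes, whereas an arbitrary joint generally gives a positive value. The helper names are illustrative.

```python
import numpy as np

def cond_mutual_info(joint):
    """I(X0; X1 | M) in nats for a joint table indexed [x0, m, x1]."""
    p_m = joint.sum(axis=(0, 2), keepdims=True)      # rho(m)
    p_x0m = joint.sum(axis=2, keepdims=True)         # rho(x0, m)
    p_mx1 = joint.sum(axis=0, keepdims=True)         # rho(m, x1)
    mask = joint > 0
    ratio = joint * p_m / (p_x0m * p_mx1)
    return float(np.sum(joint[mask] * np.log(ratio[mask])))

def random_conditional(rng, rows, cols):
    """Random row-stochastic matrix: each row is a probability distribution."""
    t = rng.random((rows, cols))
    return t / t.sum(axis=1, keepdims=True)

rng = np.random.default_rng(4)
p_x0 = random_conditional(rng, 1, 3)[0]              # rho(x0), 3 states
p_m_given_x0 = random_conditional(rng, 3, 2)         # rho(m | x0), 2 memory states
p_x1_given_m = random_conditional(rng, 2, 3)         # rho(x1 | m), 3 states

# Past -> memory -> future: M screens X0 off from X1
markov = p_x0[:, None, None] * p_m_given_x0[:, :, None] * p_x1_given_m[None, :, :]
generic = rng.random((3, 2, 3))
generic /= generic.sum()

print(cond_mutual_info(markov), cond_mutual_info(generic))
```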

At What Scales Does this Apply?

The equipartition theorem tells us that at equilibrium the average energy is \(k_BT/2\) per degree of freedom. This means that for systems that operate at “human scale” the energy involved is many orders of magnitude larger than the amount of information we can store in memory. For a car engine producing 70 kW of power at 370 Kelvin, this implies \[ \frac{2 \times 70{,}000}{370 \times k_B} = \frac{2 \times 70{,}000}{370\times 1.380649 \times 10^{-23}} = 2.74 \times 10^{25} \] degrees of freedom per second. If we make a conservative assumption of one bit per degree of freedom, then the mutual information we would require in one second for comparable energy production would be around 3,400 zettabytes, implying a memory bandwidth of around 3,400 zettabytes per second. In 2025 the estimate of all the data in the world stands at 149 zettabytes.
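The arithmetic can be reproduced directly. This sketch uses the same inputs as the text: 70 kW, 370 K, one bit per degree of freedom, and 149 ZB of world data. It assumes decimal zettabytes of \(10^{21}\) bytes, which is our assumption about the unit convention.

```python
K_B = 1.380649e-23          # Boltzmann's constant, J/K
power = 70_000.0            # engine power, W
T = 370.0                   # operating temperature, K

# Equipartition: each degree of freedom carries k_B T / 2 on average
dof_per_second = power / (K_B * T / 2)

bits_per_second = dof_per_second             # assumption: one bit per degree of freedom
zettabytes_per_second = bits_per_second / 8 / 1e21
world_data_zb = 149.0

print(f"{dof_per_second:.3g} degrees of freedom per second")
print(f"{zettabytes_per_second:.0f} ZB/s, about {zettabytes_per_second / world_data_zb:.0f}x the world's data")
```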

Small-Scale Biochemical Systems and Information Processing

While macroscopic systems operate in regimes where traditional thermodynamics dominates, microscopic biological systems operate at scales where information and thermal fluctuations become critically important. Here we examine how the framework applies to molecular machines and processes that have evolved to operate efficiently at these scales.

Molecular machines like ATP synthase, kinesin motors, and the photosynthetic apparatus can be viewed as sophisticated information engines that convert energy while processing information about their environment. These systems have evolved to exploit thermal fluctuations rather than fight against them, using information processing to extract useful work.

ATP Synthase: Nature’s Rotary Engine

ATP synthase functions as a rotary molecular motor that synthesizes ATP from ADP and inorganic phosphate using a proton gradient. The system uses the proton gradient as both an energy source and an information source about the cell’s energetic state and exploits Brownian motion through a ratchet mechanism. It converts information about proton locations into mechanical rotation and ultimately chemical energy with approximately 3-4 protons required per ATP.

Estimates suggest that one synapse firing may require \(10^4\) ATP molecules, so around \(4 \times 10^4\) protons. If we take the human brain as containing around \(10^{14}\) synapses, and if we suggest each synapse only fires about once every five seconds, we would require approximately \(10^{18}\) protons per second to power the synapses in our brain. If each proton has six degrees of freedom, then under these rough calculations the memory capacity distributed across the ATP synthase in our brain must be of order \(6 \times 10^{18}\) bits per second, or 750 petabytes of information per second. Of course this memory capacity would be devolved across the billions of neurons, each containing hundreds or thousands of mitochondria that can in turn each contain thousands of ATP synthase molecules. By composition of extremely small systems we can see it’s possible to improve efficiencies in ways that seem very impractical for a car engine.
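The same back-of-the-envelope estimate for the brain can be reproduced with the text's figures and decimal petabytes. The inputs are four protons per ATP (the upper end of the 3-4 range), six degrees of freedom per proton, and one bit per degree of freedom. Note that the text rounds the proton rate up to \(10^{18}\) per second.

```python
atp_per_firing = 1e4
protons_per_atp = 4          # upper end of the 3-4 range
synapses = 1e14
firing_rate_hz = 1 / 5       # each synapse fires about once every five seconds
dof_per_proton = 6

protons_per_second = atp_per_firing * protons_per_atp * synapses * firing_rate_hz
print(f"{protons_per_second:.1e} protons per second")   # rounded to 1e18 in the text

# Using the rounded rate of 1e18 protons/s and one bit per degree of freedom
bits_per_second = 1e18 * dof_per_proton
petabytes_per_second = bits_per_second / 8 / 1e15
print(f"{bits_per_second:.0e} bits/s = {petabytes_per_second:.0f} PB/s")
```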

Quick note to clarify, here we’re referring to the information requirements to make our brain more energy efficient in its information processing rather than the information processing capabilities of the neurons themselves!

Jaynes’s Maximum Entropy Principle

In his seminal 1957 paper (Jaynes, 1957), Ed Jaynes proposed a foundation for statistical mechanics based on information theory. Rather than relying on ergodic hypotheses or ensemble interpretations, Jaynes recast the problem of assigning probabilities in statistical mechanics as a problem of inference with incomplete information.

A central problem in statistical mechanics is assigning initial probabilities when our knowledge is incomplete. For example, if we know only the average energy of a system, what probability distribution should we use? Jaynes argued that we should use the distribution that maximizes entropy subject to the constraints of our knowledge.

Jaynes illustrated the approach with a simple example: Suppose a die has been tossed many times, with an average result of 4.5 rather than the expected 3.5 for a fair die. What probability assignment \(P_n\) (\(n=1,2,...,6\)) should we make for the next toss?

We need to satisfy two constraints \[\begin{align} \sum_{n=1}^6 P_n &= 1 \\ \sum_{n=1}^6 nP_n &= 4.5 \end{align}\]

Many distributions could satisfy these constraints, but which one makes the fewest unwarranted assumptions? Jaynes argued that we should choose the distribution that is maximally noncommittal with respect to missing information, that is, the one that maximizes the entropy, \[\begin{align} S_I = -\sum_{i} p_i \log p_i \end{align}\] This principle leads to the exponential family of distributions, which in statistical mechanics gives us the canonical ensemble and other familiar distributions.
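Jaynes's dice problem can be solved numerically. The maximum-entropy solution has the form \(P_n \propto e^{-\lambda n}\), the canonical form given in the next section. Because the mean is monotone in \(\lambda\), a simple bisection finds the multiplier. This is a minimal sketch; the bracket \([-5, 5]\) and the tolerance are our choices.

```python
import numpy as np

faces = np.arange(1, 7)

def maxent_die(lam):
    """Canonical distribution P_n proportional to exp(-lam * n) over the six faces."""
    w = np.exp(-lam * faces)
    return w / w.sum()

def solve_multiplier(target_mean, lo=-5.0, hi=5.0, tol=1e-12):
    # The mean decreases monotonically as lam increases, so bisect on lam
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if maxent_die(mid) @ faces > target_mean:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

lam = solve_multiplier(4.5)
P = maxent_die(lam)
entropy = -np.sum(P * np.log(P))

print(lam, np.round(P, 5), P @ faces, entropy)
```

The probabilities rise geometrically towards the six. Jaynes's answer spreads weight over all faces rather than, say, splitting it between four and five, which would also have mean 4.5 but much lower entropy.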

The General Maximum-Entropy Formalism

For a more general case, suppose a quantity \(x\) can take values \((x_1, x_2, \ldots, x_n)\) and we know the average values of several functions \(f_k(x)\). The problem is to find the probability assignment \(p_i = p(x_i)\) that satisfies \[\begin{align} \sum_{i=1}^n p_i &= 1 \\ \sum_{i=1}^n p_i f_k(x_i) &= \langle f_k(x) \rangle = F_k \quad k=1,2,\ldots,m \end{align}\] and maximizes the entropy \(S_I = -\sum_{i=1}^n p_i \log p_i\).

Using Lagrange multipliers, the solution is the generalized canonical distribution, \[\begin{align} p_i = \frac{1}{Z(\lambda_1,\ldots,\lambda_m)}\exp(-\lambda_1 f_1(x_i) - \ldots - \lambda_m f_m(x_i)) \end{align}\] where \(Z(\lambda_1,\ldots,\lambda_m)\) is the partition function, \[\begin{align} Z(\lambda_1,\ldots,\lambda_m) = \sum_{i=1}^n \exp(-\lambda_1 f_1(x_i) - \ldots - \lambda_m f_m(x_i)) \end{align}\] The Lagrange multipliers \(\lambda_k\) are determined by the constraints, \[\begin{align} \langle f_k \rangle = -\frac{\partial}{\partial \lambda_k}\log Z(\lambda_1,\ldots,\lambda_m) \quad k=1,2,\ldots,m. \end{align}\] The maximum attainable entropy is \[\begin{align} (S_I)_{max} = \log Z + \sum_{k=1}^m \lambda_k \langle f_k \rangle. \end{align}\]
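The relations between the partition function, the constraint averages and the maximum entropy can be checked numerically. This sketch assumes two illustrative constraint functions on the die faces, \(f_1(x) = x\) and \(f_2(x) = x^2\), with arbitrary multipliers. It compares \(-\partial \log Z/\partial\lambda_k\), computed by central finite differences, with the directly computed averages.

```python
import numpy as np

x = np.arange(1, 7, dtype=float)            # outcomes x_i (die faces)
F = np.stack([x, x**2])                     # assumed constraint functions f_1, f_2

def log_Z(lam):
    """log of the partition function Z(lambda) = sum_i exp(-sum_k lam_k f_k(x_i))."""
    return np.log(np.sum(np.exp(-lam @ F)))

def canonical(lam):
    w = np.exp(-lam @ F)
    return w / w.sum()

lam = np.array([-0.4, 0.02])                # illustrative multipliers
p = canonical(lam)
averages = F @ p                            # <f_k> computed directly

# <f_k> = -d log Z / d lambda_k, checked with central finite differences
h = 1e-6
grad = np.array([(log_Z(lam + h * e) - log_Z(lam - h * e)) / (2 * h) for e in np.eye(2)])

S_direct = -np.sum(p * np.log(p))
S_formula = log_Z(lam) + lam @ averages     # (S_I)_max = log Z + sum_k lam_k <f_k>

print(averages, -grad)
print(S_direct, S_formula)
```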

Jaynes’ World

Jaynes’ World is a zero-player game that implements a version of the entropy game. The dynamical system is defined by a distribution, \(\rho(Z)\), over a state space \(Z\). The state space is partitioned into observable variables \(X\) and memory variables \(M\). The memory variables are considered to be in an information reservoir, a thermodynamic system that maintains information in an ordered state (see e.g. Barato and Seifert (2014)). The entropy of the whole system is bounded below by 0 and above by \(N\), the system’s capacity in bits. So the entropy forms a compact manifold with respect to its parameters.

Unlike the animal game, where decisions are made by reducing entropy at each step, our system evolves mathematically by maximising the instantaneous entropy production. Conceptually we can think of this as ascending the gradient of the entropy, \(S(Z)\).

In the animal game the questioner starts with maximum uncertainty and targets minimal uncertainty. Jaynes’ world starts with minimal uncertainty and aims for maximum uncertainty.

We can phrase this as a thought experiment. Imagine you are in the game, at a given turn. You want to see where the game came from, so you look back across turns. The direction the game came from is now the direction of steepest descent. Regardless of where the game actually started, it looks like it started at a minimal entropy configuration that we call the origin. Similarly, wherever the game is actually stopped, there will nevertheless appear to be an end point, which we call the end, at a configuration of maximal entropy, \(N\).

This speculation allows us to impose the functional form of our probability distribution. As Jaynes has shown (Jaynes, 1957), the stationary points of a free-form optimisation (minimum or maximum) will place the distribution, \(\rho(Z)\), in the exponential family, \[ \rho(Z) = h(Z) \exp(\boldsymbol{\theta}^\top T(Z) - A(\boldsymbol{\theta})), \] where \(h(Z)\) is the base measure, \(T(Z)\) are sufficient statistics, \(A(\boldsymbol{\theta})\) is the log-partition function, and \(\boldsymbol{\theta}\) are the natural parameters of the distribution.

This constraint to the exponential family is highly convenient, as we will rely on it heavily for the dynamics of the game. In particular, by focussing on the natural parameters we find that we are optimising within an information geometry (Amari, 2016). In exponential family distributions with a constant base measure, differentiating the entropy \(S(Z) = A(\boldsymbol{\theta}) - \boldsymbol{\theta}^\top \nabla_\boldsymbol{\theta} A(\boldsymbol{\theta})\) gives the entropy gradient, \[ \nabla_{\boldsymbol{\theta}}S(Z) = \mathbf{g} = -\nabla^2_\boldsymbol{\theta} A(\boldsymbol{\theta})\,\boldsymbol{\theta} = -G(\boldsymbol{\theta})\,\boldsymbol{\theta}, \] where the Fisher information matrix, \(G(\boldsymbol{\theta})\), is also the Hessian of the log-partition function, \[ G(\boldsymbol{\theta}) = \nabla^2_{\boldsymbol{\theta}} A(\boldsymbol{\theta}) = \text{Cov}[T(Z)]. \] Traditionally, when optimising on an information geometry we take natural gradient steps, equivalent to a Newton minimisation step, \[ \Delta \boldsymbol{\theta} = - G(\boldsymbol{\theta})^{-1} \mathbf{g}, \] but this is not the direction that gives the instantaneous maximisation of the entropy production. Instead, our gradient step is given by \[ \Delta \boldsymbol{\theta} = \eta \mathbf{g}, \] where \(\eta\) is a ‘learning rate.’
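The entropy-ascent dynamics can be illustrated on a simple exponential family: \(N\) independent bits, each Bernoulli with natural parameter \(\theta_i\). Here \(G(\boldsymbol{\theta})\) is diagonal with entries \(\sigma(\theta_i)(1-\sigma(\theta_i))\), and with a constant base measure the entropy gradient works out to \(-G(\boldsymbol{\theta})\boldsymbol{\theta}\). This is a toy sketch under those assumptions; the starting point and \(\eta\) are arbitrary. It checks the gradient against finite differences, then takes steps \(\Delta\boldsymbol{\theta} = \eta\mathbf{g}\) from a low-entropy start. The entropy climbs towards its upper bound of \(N\) bits. Steps are small near the nearly deterministic start and shrink again as equilibrium is approached.

```python
import numpy as np

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def entropy_nats(theta):
    """S(theta) = sum_i [A(theta_i) - theta_i * sigmoid(theta_i)] for independent bits."""
    return float(np.sum(np.logaddexp(0.0, theta) - theta * sigmoid(theta)))

def entropy_gradient(theta):
    """g = -G(theta) theta, with Fisher information G = diag(sigmoid * (1 - sigmoid))."""
    s = sigmoid(theta)
    return -(s * (1 - s)) * theta

theta = np.array([4.0, -3.0, 5.0, -2.0])   # hypothetical low-entropy start, N = 4 bits
N = theta.size

# Check the analytic gradient against central finite differences
h = 1e-6
fd = np.array([(entropy_nats(theta + h * e) - entropy_nats(theta - h * e)) / (2 * h)
               for e in np.eye(N)])
gradient_ok = np.allclose(fd, entropy_gradient(theta), atol=1e-8)
print("gradient check:", gradient_ok)

eta = 2.0                                   # assumed 'learning rate'
history = [entropy_nats(theta) / np.log(2)] # entropy in bits
for _ in range(2000):
    theta = theta + eta * entropy_gradient(theta)
    history.append(entropy_nats(theta) / np.log(2))

print(f"entropy: {history[0]:.3f} bits -> {history[-1]:.6f} bits (upper bound {N})")
```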

System Evolution

We are now in a position to summarise the start state and the end state of our system, as well as to speculate on the nature of the transition between the two states.

Start State

The origin configuration is a low entropy state, with value near the lower bound of 0. The information is highly structured: by definition, we place all variables in \(M\), the information reservoir, at this time. The uncertainty principle is present to handle the competing needs of precision in parameters (giving us the near-singular form for \(\boldsymbol{\theta}(M)\)) and capacity in the information channel that \(M\) provides (the capacity \(c(\boldsymbol{\theta})\) is upper bounded by \(S(M)\)).

End State

The end configuration is a high entropy state, near the upper bound. Both the minimal entropy and maximal entropy states are revealed by Ed Jaynes’ variational approach and are in the exponential family. In many cases a version of Zeno’s paradox will arise where the system asymptotes to the final state, taking smaller steps at each time. At this point the system is at equilibrium.

Jaynes’ World

This framework explores how structure, time, causality, and locality might emerge from representation through a configuration and our uncertainty about configuration. The configuration—how variables actually relate to each other—is ontologically primary. All mathematical structures (parameters, distributions, entropy measures) emerge as consequences of tracking our uncertainty about which configuration is actual.

The system serves as:

- A research framework for observer-free dynamics and entropy-based emergence
- A conceptual tool for exploring information topography: landscapes where uncertainty about configuration evolves under constraints

Fundamental Structure: Configuration and Uncertainty

Configuration as Primary Reality

The configuration represents the state of structural relationships between system components. This is the reality that exists independently of our methods for observing or describing it. The mathematical representations (parameters, operators, entropy measures) are epistemic tools that emerge from our attempts to track configuration changes.

Uncertainty Distribution Over Configurations

A density matrix \(\rho\) represents our uncertainty about which configuration is actual. The density matrix is defined over the space of possible configurations; it is not a description of any particular configuration. The von Neumann entropy \(S[\rho] = -\mathrm{tr}(\rho \log \rho)\) measures the amount of uncertainty in our knowledge or, equivalently, the amount of disorder in the space of possible configurations.
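The von Neumann entropy is most easily computed from the eigenvalues of \(\rho\). For a Hermitian density matrix with eigenvalues \(\lambda_j\), \(-\mathrm{tr}(\rho\log\rho) = -\sum_j \lambda_j \log \lambda_j\). Below is a minimal sketch using the convention \(0 \log 0 = 0\); the function name is ours.

```python
import numpy as np

def von_neumann_entropy(rho, tol=1e-12):
    """S[rho] = -tr(rho log rho) in nats, computed from the eigenvalues of rho."""
    eigvals = np.linalg.eigvalsh(rho)        # rho is Hermitian, so eigenvalues are real
    eigvals = eigvals[eigvals > tol]         # 0 log 0 = 0; also drops round-off noise
    return float(-np.sum(eigvals * np.log(eigvals)))

d = 4
pure = np.zeros((d, d)); pure[0, 0] = 1.0    # certain about the configuration
mixed = np.eye(d) / d                        # maximal uncertainty over d configurations

print(von_neumann_entropy(pure), von_neumann_entropy(mixed), np.log(d))
```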

Emergence of Mathematical Structure

Exponential Family as Inevitable Consequence

The exponential family-style representation for the density matrix is not a choice but a consequence of seeking the least committal description of our uncertainty about configuration subject to resolution constraints. If we maximise entropy while preserving certain constraint information, the method of Lagrange multipliers automatically produces the form \[ \rho(\boldsymbol{\theta}) = \frac{1}{Z(\boldsymbol{\theta})} \exp\left( \sum_i \theta_i H_i \right), \] and the natural parameters \(\boldsymbol{\theta}\) emerge as the Lagrange multipliers needed to enforce our constraints on uncertainty.
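To make the form concrete, this sketch builds \(\rho(\boldsymbol{\theta})\) for a two-level system, taking the operators \(H_i\) to be the Pauli matrices. This is an illustrative assumption; the document does not specify the operators. The matrix exponential of the Hermitian matrix \(\sum_i \theta_i H_i\) is computed through its eigendecomposition. For this choice the log-partition function has the closed form \(\log\left(2\cosh\lVert\boldsymbol{\theta}\rVert\right)\), which the sketch uses as a check.

```python
import numpy as np

# Pauli matrices as hypothetical Hermitian operators H_1, H_2, H_3
PAULI = np.array([[[0, 1], [1, 0]],
                  [[0, -1j], [1j, 0]],
                  [[1, 0], [0, -1]]], dtype=complex)

def expm_hermitian(K):
    """exp(K) for Hermitian K via its eigendecomposition K = V diag(w) V^H."""
    w, V = np.linalg.eigh(K)
    return (V * np.exp(w)) @ V.conj().T

def density_matrix(theta, ops=PAULI):
    """rho(theta) = exp(sum_i theta_i H_i) / Z(theta); also returns log Z."""
    K = np.tensordot(theta, ops, axes=1)      # sum_i theta_i H_i
    unnormalised = expm_hermitian(K)
    Z = np.trace(unnormalised).real
    return unnormalised / Z, np.log(Z)

theta = np.array([0.3, -0.2, 0.5])
rho, A = density_matrix(theta)

print(np.trace(rho).real, np.allclose(rho, rho.conj().T), np.linalg.eigvalsh(rho))
print(A, np.log(2 * np.cosh(np.linalg.norm(theta))))
```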

System Structure Through Uncertainty Dynamics

Let \(Z = \{Z_1, Z_2, \dots, Z_n\}\) be variables describing possible configurations. At any point in the system’s evolution, our ability to resolve uncertainty partitions these into active variables \(X(\tau) \subseteq Z\), whose uncertainty gradients exceed the resolution threshold \(\varepsilon\), and latent variables \(M(\tau) = Z \setminus X(\tau)\), which form an information reservoir where uncertainty changes remain below threshold (Barato and Seifert (2014), Parrondo et al. (2015)).

Derived Mathematical Objects

The remaining mathematical structures follow from the uncertainty framework. The log-partition function (which is a cumulant generating function) has the form, \[ A(\boldsymbol{\theta}) = \log Z(\boldsymbol{\theta}), \] the von Neumann entropy, which measures uncertainty about the configuration, is \[ S(\boldsymbol{\theta}) = A(\boldsymbol{\theta}) - \boldsymbol{\theta}^\top \nabla A(\boldsymbol{\theta}), \] and the Fisher Information Matrix captures the uncertainty geometry, \[ G_{ij}(\boldsymbol{\theta}) = \frac{\partial^2 A}{\partial \theta_i \partial \theta_j}. \] These objects all arise from our measures of uncertainty about configuration possibilities.
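As a numerical check of these relations, the sketch below uses a small classical (diagonal) case, where the density matrix reduces to a probability vector over four configurations. The sufficient-statistic table `T` and the parameter values are hypothetical illustrations. It confirms that \(A(\boldsymbol{\theta}) - \boldsymbol{\theta}^\top \nabla A(\boldsymbol{\theta})\) matches the directly computed entropy, and that the covariance of the sufficient statistics matches a finite-difference Hessian of \(A\).

```python
import numpy as np

# Hypothetical sufficient statistics: row x gives (H_1(x), H_2(x)) for one of
# four configurations. Diagonal (commuting) case, so rho is a probability vector.
T = np.array([[0.0, 1.0],
              [1.0, 0.0],
              [1.0, 1.0],
              [0.0, 0.0]])

def log_partition(theta):
    """A(theta) = log sum_x exp(theta^T T(x)), computed stably."""
    scores = T @ theta
    m = scores.max()
    return m + np.log(np.exp(scores - m).sum())

def probabilities(theta):
    """Diagonal of rho(theta)."""
    return np.exp(T @ theta - log_partition(theta))

def grad_log_partition(theta):
    """The gradient of A is the expected sufficient statistic."""
    return probabilities(theta) @ T

def fisher_information(theta):
    """Covariance of the sufficient statistics (the Hessian of A)."""
    p = probabilities(theta)
    centred = T - p @ T
    return (centred * p[:, None]).T @ centred

theta = np.array([0.3, -1.2])
p = probabilities(theta)
S_direct = -(p * np.log(p)).sum()
S_from_A = log_partition(theta) - theta @ grad_log_partition(theta)

# Central-difference Hessian of A, for comparison with the covariance form.
h = 1e-4
eye = np.eye(theta.size)
G_numeric = np.array([[(log_partition(theta + h * (ei + ej))
                        - log_partition(theta + h * (ei - ej))
                        - log_partition(theta - h * (ei - ej))
                        + log_partition(theta - h * (ei + ej))) / (4 * h * h)
                       for ej in eye] for ei in eye])

print(S_direct, S_from_A)
print(fisher_information(theta))
print(G_numeric)
```

In this diagonal case the Hessian of \(A\) is exactly the covariance of the sufficient statistics; for non-commuting \(H_i\) the Hessian of the log-partition function is still well defined but is no longer a simple covariance.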

Resolution Constraints and Discrete Structure

We limit the total number of configurations by bounding the system capacity (\(N\) bits maximum). This in turn implies a minimum detectable resolution \(\varepsilon\) in the uncertainty space.

Uncertainty-Driven Dynamics

Core Principle: Uncertainty Resolution

Variable Activation Through Uncertainty Thresholds

Variables become active when uncertainty gradients about their associated configuration aspects exceed the resolution threshold, \[ X(\tau) = \left\{ i \mid \left| \frac{\text{d}\theta_i}{\text{d}\tau} \right| \geq \varepsilon \right\}, \quad M(\tau) = Z \setminus X(\tau). \] This activation represents the point where uncertainty about particular configuration aspects becomes resolvable and can drive further uncertainty resolution.
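The activation rule amounts to thresholding the parameter velocities. In this sketch the helper name `partition_variables`, the velocities, and \(\varepsilon = 0.1\) are all hypothetical; how \(\text{d}\theta_i/\text{d}\tau\) is obtained is left to the dynamics.

```python
import numpy as np

def partition_variables(theta_dot, epsilon):
    """Split indices into active X (|dtheta_i/dtau| >= epsilon) and latent M."""
    rates = np.abs(np.asarray(theta_dot, dtype=float))
    active = np.flatnonzero(rates >= epsilon)
    latent = np.flatnonzero(rates < epsilon)
    return active, latent

# Hypothetical parameter velocities at one system time.
X, M = partition_variables([0.5, -0.01, 0.2, 0.0, -0.3], epsilon=0.1)
print(X, M)  # [0 2 4] [1 3]

# If a latent rate later crosses the threshold, that index joins X (cf. Lemma 2).
X_next, M_next = partition_variables([0.5, -0.15, 0.2, 0.0, -0.3], epsilon=0.1)
print(X_next, M_next)  # [0 1 2 4] [3]
```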

Information Geometry of Uncertainty Evolution

The Fisher Information Matrix partitions according to uncertainty resolvability, \[ G(\boldsymbol{\theta}) = \begin{bmatrix} G_{XX} & G_{XM} \\ G_{MX} & G_{MM} \end{bmatrix}, \] where \(G_{XX}\) describes the geometry of resolvable uncertainty, \(G_{MM}\) the structure of the latent uncertainty reservoir, and \(G_{XM}\) the coupling between resolved and unresolved aspects. This partitioning governs how uncertainty resolution propagates through the configuration space.
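When \(X\) and \(M\) are not contiguous index ranges, the blocks can be read off by indexing rows and columns with the two index sets. A minimal NumPy sketch, with a hypothetical helper name and an illustrative \(3 \times 3\) matrix in which variables 0 and 2 are active and variable 1 is latent:

```python
import numpy as np

def partition_fisher(G, active, latent):
    """Return the blocks (G_XX, G_XM, G_MX, G_MM) of G for index sets X and M."""
    G = np.asarray(G, dtype=float)
    G_XX = G[np.ix_(active, active)]
    G_XM = G[np.ix_(active, latent)]
    G_MX = G[np.ix_(latent, active)]
    G_MM = G[np.ix_(latent, latent)]
    return G_XX, G_XM, G_MX, G_MM

# Hypothetical symmetric Fisher information.
G = np.array([[2.0, 0.3, 0.1],
              [0.3, 1.0, 0.4],
              [0.1, 0.4, 1.5]])
G_XX, G_XM, G_MX, G_MM = partition_fisher(G, active=[0, 2], latent=[1])
print(G_XX)
print(G_XM)
```

Because \(G\) is symmetric, \(G_{MX} = G_{XM}^\top\), so only one coupling block needs to be stored.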

Lemma 1: Form of the Minimal Entropy Configuration

The minimal-entropy state that is compatible with the system’s resolution constraint and regularity condition is represented by a density matrix of the exponential form, \[ \rho(\boldsymbol{\theta}_o) = \frac{1}{Z(\boldsymbol{\theta}_o)} \exp\left( \sum_i \theta_{oi} H_i \right), \] where all components \(\theta_{oi}\) are sub-threshold, \[ |\dot{\theta}_{oi}| < \varepsilon. \] This state minimises entropy under the constraint that it remains regular, continuous, and detectable only above a resolution scale \(\varepsilon\). Its structure can be derived via a minimum-entropy analogue of Jaynes’ maximum entropy formalism (Jaynes, 1963), using the same density matrix geometry but inverting the direction of optimization.

Lemma 2: Symmetry Breaking

If \(\theta_k \in M(\tau)\) and \(|\dot{\theta}_k| \geq \varepsilon\), then \[ \theta_k \in X(\tau + \delta \tau). \]

Perceived Time

The system evolves in two time scales:

System time \(\tau\): the external time parameter in which the system’s parameters \(\boldsymbol{\theta}\) evolve.

Perceived time \(t\): the internal time that measures the accumulated entropy of active variables, defined as \[ t(\tau) := S_{X(\tau)}(\tau) \]

The relationship between these time scales is given by \[ \frac{\text{d}t}{\text{d}\tau} = \boldsymbol{\theta}^\top G(\boldsymbol{\theta}) \boldsymbol{\theta} = -\boldsymbol{\theta}^\top \nabla_\boldsymbol{\theta} S[\rho_\boldsymbol{\theta}] \]

This reveals that perceived time flows at different rates depending on the information content of the system. In regions where parameters are strongly aligned with entropy change, perceived time flows rapidly relative to system time. In regions where parameters are weakly coupled to entropy change, perceived time flows slowly.
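The second equality for \(\text{d}t/\text{d}\tau\) can be checked from the entropy expression \(S(\boldsymbol{\theta}) = A(\boldsymbol{\theta}) - \boldsymbol{\theta}^\top \nabla A(\boldsymbol{\theta})\) given earlier. Differentiating, and using that the Hessian of \(A\) is \(G(\boldsymbol{\theta})\), gives

\[ \nabla_\boldsymbol{\theta} S = \nabla A(\boldsymbol{\theta}) - \nabla A(\boldsymbol{\theta}) - G(\boldsymbol{\theta})\boldsymbol{\theta} = -G(\boldsymbol{\theta})\boldsymbol{\theta}, \qquad \text{so} \qquad -\boldsymbol{\theta}^\top \nabla_\boldsymbol{\theta} S[\rho_\boldsymbol{\theta}] = \boldsymbol{\theta}^\top G(\boldsymbol{\theta}) \boldsymbol{\theta}. \]

Because \(G(\boldsymbol{\theta})\) is positive semi-definite (it is the Hessian of the convex function \(A\)), this quadratic form is never negative, which is consistent with the monotonicity of perceived time in Lemma 3. For the two-bin histogram, where \(G = p(1-p)\) and \(\log\frac{1-p}{p} = -\theta\), the identity reads \(\frac{\text{d}S}{\text{d}\theta} = -p(1-p)\,\theta\).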

Lemma 3: Monotonicity of Perceived Time

\[ t(\tau_2) \geq t(\tau_1) \quad \text{for all } \tau_2 > \tau_1 \]

Corollary: Irreversibility

\(t(\tau)\) increases monotonically, preventing time-reversal globally.

At critical points where parameters become orthogonal to the entropy gradient (\(\boldsymbol{\theta}^\top \nabla_\boldsymbol{\theta} S[\rho_\boldsymbol{\theta}] \approx 0\)), perceived time almost stops relative to system time and the time parameterisation approaches a singularity, indicating phase transitions in the system’s information structure.

Conjecture: Frieden-Analogous Extremal Flow

At points where the latent-to-active flow functional is locally extremal, the system may exhibit critical slowing, where information reservoir variables are slow relative to active variables. It may be possible to separate the system entropy into the entropy of the active variables, \(I = S[\rho_X]\), and the “intrinsic information” \(J = S[\rho_{X|M}]\), allowing us to construct an information-theoretic analogue of B. Roy Frieden’s extreme physical information (Frieden (1998)), which permits the derivation of locally valid differential equations that depend on the information topography.

From Maximum to Minimal Entropy

Although Jaynes formulated his principle in terms of maximizing entropy, we can also view certain problems as minimizing entropy under appropriate constraints. The duality becomes apparent when we consider the relationship between entropy and information.

The maximum entropy principle finds the distribution that is maximally noncommittal given certain constraints. Conversely, we can seek the distribution that minimizes entropy subject to different constraints - this represents the distribution with maximum structure or information.

Consider the uncertainty principle. When we seek states that minimize the product of position and momentum uncertainties, we are seeking minimal entropy states subject to the constraint of the uncertainty principle.

The mathematical formalism remains the same, but with different constraints and optimization direction, \[\begin{align} \text{Minimize } S_I &= -\sum_{i} p_i \log p_i \\ \text{subject to } \sum_{i} p_i &= 1 \\ \text{and } g_k(p_1, p_2, \ldots, p_n) &= G_k \quad k=1,2,\ldots,r, \end{align}\] where \(g_k\) are functions representing constraints different from simple averages.

The solution still takes the form of an exponential family, \[\begin{align} p_i = \frac{1}{Z}\exp\left(-\sum_{k=1}^r \mu_k \frac{\partial g_k}{\partial p_i}\right), \end{align}\] where \(\mu_k\) are Lagrange multipliers for the constraints.
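For the special case of a single linear constraint, \(g(p_1, \ldots, p_n) = \sum_i p_i x_i\), the derivative \(\partial g/\partial p_i = x_i\) is constant and the solution above reduces to \(p_i \propto \exp(-\mu x_i)\). With a linear constraint this stationary point is the entropy maximum, i.e. Jaynes’ original setting rather than the minimisation. The sketch finds \(\mu\) by bisection for a six-sided die with a hypothetical measured mean of 4.5; the bracketing interval and iteration count are illustrative choices.

```python
import numpy as np

x = np.arange(1, 7, dtype=float)  # faces of a die
target_mean = 4.5                 # hypothetical measured average

def exp_family_probs(mu):
    """p_i proportional to exp(-mu * x_i), normalised stably."""
    logits = -mu * x
    w = np.exp(logits - logits.max())
    return w / w.sum()

def solve_multiplier(target, lo=-10.0, hi=10.0, iters=200):
    """Bisection on mu: the mean of x is strictly decreasing in mu."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if exp_family_probs(mid) @ x > target:
            lo = mid  # mean too high, so mu must increase
        else:
            hi = mid
    return 0.5 * (lo + hi)

mu = solve_multiplier(target_mean)
p = exp_family_probs(mu)
entropy = -(p * np.log(p)).sum()

# Another distribution with the same mean (mass split on faces 3 and 6) has lower entropy.
p_alt = np.array([0, 0, 0.5, 0, 0, 0.5])
nz = p_alt[p_alt > 0]
entropy_alt = -(nz * np.log(nz)).sum()
print(f"mu = {mu:.4f}, p = {np.round(p, 4)}, S = {entropy:.4f} > {entropy_alt:.4f}")
```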

Minimal Entropy States in Quantum Systems

The pure states of quantum mechanics are those that minimize von Neumann entropy \(S = -\text{Tr}(\rho \log \rho)\) subject to the constraints of quantum mechanics.

For example, coherent states minimize the entropy subject to constraints on the expectation values of position and momentum operators. These states achieve the minimum uncertainty allowed by quantum mechanics.

Histogram Game

To illustrate the concept of the Jaynes’ World entropy game we’ll run a simple example using a four-bin histogram. The entropy of a four-bin histogram can be computed as, \[ S(p) = - \sum_{i=1}^4 p_i \log_2 p_i. \]

First we write some helper code to plot the histogram and compute its entropy.

We can compute the entropy of any given histogram.

```python
import numpy as np

# Define probabilities
p = np.zeros(4)
p[0] = 4/13
p[1] = 3/13
p[2] = 3.7/13
p[3] = 1 - p.sum()

# Safe entropy calculation
nonzero_p = p[p > 0]  # Filter out zeros
entropy = - (nonzero_p*np.log2(nonzero_p)).sum()
print(f"The entropy of the histogram is {entropy:.3f}.")
```

Figure: The entropy of a four bin histogram.

We can play the entropy game by starting with a histogram with all the probability mass in the first bin and then ascending the gradient of the entropy function.
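A minimal sketch of that game, under our own assumptions: a softmax parameterisation \(p_j = e^{\theta_j}/\sum_k e^{\theta_k}\) (not necessarily the one used to produce the figures), a start close to rather than exactly at the single-bin state (which sits at infinite \(\theta\)), and an illustrative step size. Under this parameterisation the entropy gradient is \(\partial S/\partial\theta_j = -p_j(\log p_j + S)\), with \(S\) in nats.

```python
import numpy as np

def softmax(theta):
    w = np.exp(theta - theta.max())
    return w / w.sum()

def entropy_nats(p):
    nz = p[p > 0]
    return -(nz * np.log(nz)).sum()

def entropy_gradient(theta):
    """dS/dtheta_j = -p_j (log p_j + S) for the softmax parameterisation."""
    p = softmax(theta)
    return -p * (np.log(p) + entropy_nats(p))

theta = np.array([5.0, 0.0, 0.0, 0.0])  # most of the mass in the first bin
learning_rate = 0.5
entropies_bits = [entropy_nats(softmax(theta)) / np.log(2)]
for step in range(5000):
    theta = theta + learning_rate * entropy_gradient(theta)
    entropies_bits.append(entropy_nats(softmax(theta)) / np.log(2))

print(f"start: {entropies_bits[0]:.3f} bits, end: {entropies_bits[-1]:.3f} bits")
```

The run ends at the uniform histogram, whose entropy is \(\log_2 4 = 2\) bits. The slow start, while almost all mass sits in one bin, is the same critical slowing discussed for the two-bin case.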

Two-Bin Histogram Example

The simplest possible example of Jaynes’ World is a two-bin histogram with probabilities \(p\) and \(1-p\). This minimal system allows us to visualize the entire entropy landscape.

The natural parameter is the log odds, \(\theta = \log\frac{p}{1-p}\), and the update given by the entropy gradient is \[ \Delta \theta_{\text{steepest}} = \eta \frac{\text{d}S}{\text{d}\theta} = \eta p(1-p)(\log(1-p) - \log p). \] The Fisher information is \[ G(\theta) = p(1-p). \] This creates a dynamic where, as \(p\) approaches either 0 or 1 (minimal entropy states), the Fisher information approaches zero, creating a “critical slowing” effect. This critical slowing is what leads to the formation of information reservoirs. Note also that in the natural gradient the update is given by multiplying the gradient by the inverse Fisher information, which would lead to a more efficient update of the form, \[ \Delta \theta_{\text{natural}} = \eta(\log(1-p) - \log p), \] however, it is this efficiency that we want our game to avoid, because it is the inefficient behaviour in the region of saddle points that leads to critical slowing and the emergence of information reservoirs.

```python
import numpy as np

# Python code for gradients
p_values = np.linspace(0.000001, 0.999999, 10000)
theta_values = np.log(p_values/(1-p_values))
entropy = -p_values * np.log(p_values) - (1-p_values) * np.log(1-p_values)
fisher_info = p_values * (1-p_values)
gradient = fisher_info * (np.log(1-p_values) - np.log(p_values))
```

Figure: Entropy gradients of the two-bin histogram against position.

This example reveals the entropy extrema at \(p = 0\), \(p = 0.5\), and \(p = 1\). At minimal entropy (\(p \approx 0\) or \(p \approx 1\)) the gradient approaches zero, so the dynamics slow dramatically near these points; these regions of critical slowing are what create natural information reservoirs.
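The difference between the steepest-ascent and natural-gradient updates can be made concrete by counting iterations from the same low-entropy start. In this sketch the start \(\theta = -9\), the step size \(\eta = 0.5\), the stopping tolerance, and the helper names are illustrative choices.

```python
import numpy as np

def sigmoid(theta):
    return 1.0 / (1.0 + np.exp(-theta))

def steepest(theta):
    """dS/dtheta = p(1-p)(log(1-p) - log p)."""
    p = sigmoid(theta)
    return p * (1 - p) * (np.log(1 - p) - np.log(p))

def natural(theta):
    """Gradient premultiplied by the inverse Fisher information 1/(p(1-p))."""
    p = sigmoid(theta)
    return np.log(1 - p) - np.log(p)

def steps_to_converge(update, theta0=-9.0, eta=0.5, tol=1e-3, max_steps=100_000):
    """Number of updates until |theta| < tol (theta = 0 is maximum entropy)."""
    theta = theta0
    for step in range(1, max_steps + 1):
        theta = theta + eta * update(theta)
        if abs(theta) < tol:
            return step
    return None

n_natural = steps_to_converge(natural)
n_steepest = steps_to_converge(steepest)
print(f"natural gradient: {n_natural} steps, steepest ascent: {n_steepest} steps")
```

Because \(\log(1-p) - \log p = -\theta\), the natural-gradient update simply shrinks \(\theta\) by the factor \(1 - \eta\) each step, whereas the steepest-ascent update is damped by \(p(1-p)\), which is tiny near the start. Most steepest-ascent iterations are therefore spent in the critically slowed region.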

Gradient Ascent in Natural Parameter Space

We can visualize the entropy maximization process by performing gradient ascent in the natural parameter space \(\theta\). Starting from a low-entropy state, we follow the gradient of entropy with respect to \(\theta\) to reach the maximum entropy state.

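```python
import numpy as np

# Helpers used below, reconstructed from the two-bin formulas above
# (the original definitions are not shown in this section).
def theta_to_p(theta):
    return 1.0 / (1.0 + np.exp(-theta))  # inverse of the log odds

def entropy(theta):
    p = theta_to_p(theta)
    return -p * np.log(p) - (1 - p) * np.log(1 - p)

def entropy_gradient(theta):
    p = theta_to_p(theta)
    return p * (1 - p) * (np.log(1 - p) - np.log(p))

# Parameters for gradient ascent
theta_initial = -9.0  # Start with low entropy
learning_rate = 1
num_steps = 1500

# Initialize
theta_current = theta_initial
theta_history = [theta_current]
p_history = [theta_to_p(theta_current)]
entropy_history = [entropy(theta_current)]

# Perform gradient ascent in theta space
for step in range(num_steps):
    grad = entropy_gradient(theta_current)  # Compute gradient
    theta_current = theta_current + learning_rate * grad  # Update theta
    # Store history
    theta_history.append(theta_current)
    p_history.append(theta_to_p(theta_current))
    entropy_history.append(entropy(theta_current))
    if step % 100 == 0:
        print(f"Step {step+1}: θ = {theta_current:.4f}, p = {p_history[-1]:.4f}, Entropy = {entropy_history[-1]:.4f}")
```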

Figure: Evolution of the two-bin histogram during gradient ascent in natural parameter space.

Figure: Entropy evolution during gradient ascent for the two-bin histogram.

Figure: Gradient ascent trajectory in the natural parameter space for the two-bin histogram.

The gradient ascent visualization shows how the system evolves in the natural parameter space \(\theta\). Starting from a negative \(\theta\) (corresponding to a low-entropy state with \(p \ll 0.5\)), the system follows the gradient of entropy with respect to \(\theta\) until it reaches \(\theta = 0\) (corresponding to \(p = 0.5\)), which is the maximum entropy state.

Note that the maximum entropy occurs at \(\theta = 0\), which corresponds to \(p = 0.5\). The gradient of entropy with respect to \(\theta\) is zero at this point, making it a stable equilibrium for the gradient ascent process.

Uncertainty Principle

One challenge is how to parameterise our exponential family. We’ve mentioned that the variables \(Z\) are partitioned into observable variables \(X\) and memory variables \(M\). Given the minimal entropy initial state, the obvious initial choice is that at the origin all variables, \(Z\), should be in the information reservoir, \(M\). This implies that they are well determined and present a sensible choice for the source of our parameters.

We define a mapping, \(\boldsymbol{\theta}(M)\), that maps the information reservoir to a set of values that are equivalent to the natural parameters. If the entropy of these parameters is low, and the distribution \(\rho(\boldsymbol{\theta})\) is sharply peaked, then we can move from treating the memory mapping, \(\boldsymbol{\theta}(\cdot)\), as a random process to assuming that it is a deterministic function. We can then follow gradients with respect to these \(\boldsymbol{\theta}\) values.

This allows us to rewrite the distribution over \(Z\) in a conditional form, \[ \rho(X|M) = h(X) \exp(\boldsymbol{\theta}(M)^\top T(X) - A(\boldsymbol{\theta}(M))). \]

Unfortunately this assumption implies that \(\boldsymbol{\theta}(\cdot)\) is a delta function, and since our representation is a compact manifold (bounded below by \(0\) and above by \(N\)), it does not admit any such singularities.

Formal Derivation of the Uncertainty Principle

We can derive the uncertainty principle formally from the information-theoretic properties of the system. Consider the mutual information between parameters \(\boldsymbol{\theta}(M)\) and capacity variables \(c(M)\): \[ I(\boldsymbol{\theta}(M); c(M)) = h(\boldsymbol{\theta}(M)) + h(c(M)) - h(\boldsymbol{\theta}(M), c(M)) \] where \(h(\cdot)\) represents differential entropy.

Since the total entropy of the system is bounded by \(N\), we know that \(h(\boldsymbol{\theta}(M), c(M)) \leq N\). Additionally, for any two random variables, the mutual information satisfies \(I(\boldsymbol{\theta}(M); c(M)) \geq 0\), with equality if and only if they are independent.

For our system to function as an effective information reservoir, \(\boldsymbol{\theta}(M)\) and \(c(M)\) cannot be independent - they must share information. This gives us, \[ h(\boldsymbol{\theta}(M)) + h(c(M)) \geq h(\boldsymbol{\theta}(M), c(M)) + I_{\min} \] where \(I_{\min} > 0\) is the minimum mutual information required for the system to function.

For variables with fixed variance, differential entropy is maximized by Gaussian distributions. For a multivariate Gaussian with covariance matrix \(\Sigma\), the differential entropy is: \[ h(\mathcal{N}(0, \Sigma)) = \frac{1}{2}\ln\left((2\pi e)^d|\Sigma|\right) \] where \(d\) is the dimensionality and \(|\Sigma|\) is the determinant of the covariance matrix.
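Both the mutual-information identity and the Gaussian entropy formula can be checked with a small bivariate Gaussian. The sketch below (the correlation value is a hypothetical choice) computes differential entropies from the determinant formula, combines them into \(I = h(a) + h(b) - h(a,b)\), and compares the result with the known closed form \(-\tfrac{1}{2}\ln(1-r^2)\) for correlation \(r\). It also compares a unit-variance Gaussian with a unit-variance uniform distribution to illustrate the maximum-entropy property.

```python
import numpy as np

def gaussian_entropy(cov):
    """Differential entropy in nats of N(0, cov): 0.5 * ln((2 pi e)^d |cov|)."""
    cov = np.atleast_2d(np.asarray(cov, dtype=float))
    d = cov.shape[0]
    sign, logdet = np.linalg.slogdet(cov)
    if sign <= 0:
        raise ValueError("covariance must be positive definite")
    return 0.5 * (d * np.log(2 * np.pi * np.e) + logdet)

# Hypothetical bivariate example: unit variances, correlation r.
r = 0.8
joint = np.array([[1.0, r], [r, 1.0]])

# Mutual information via I = h(a) + h(b) - h(a, b).
mi = (gaussian_entropy(joint[:1, :1]) + gaussian_entropy(joint[1:, 1:])
      - gaussian_entropy(joint))
mi_closed_form = -0.5 * np.log(1 - r**2)

# A uniform on [-sqrt(3), sqrt(3)] also has unit variance; its entropy is ln(2 sqrt(3)).
h_uniform = np.log(2 * np.sqrt(3))
h_gauss = gaussian_entropy(1.0)
print(f"I = {mi:.4f} (closed form {mi_closed_form:.4f}); "
      f"h_uniform = {h_uniform:.4f} < h_gauss = {h_gauss:.4f}")
```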

The Cramér-Rao inequality provides a lower bound on the variance of any unbiased estimator. If \(\boldsymbol{\theta}\) is a parameter vector and \(\hat{\boldsymbol{\theta}}\) is an unbiased estimator, then: \[ \text{Cov}(\hat{\boldsymbol{\theta}}) \geq G^{-1}(\boldsymbol{\theta}) \] where \(G(\boldsymbol{\theta})\) is the Fisher information matrix.
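A quick simulation makes the bound concrete. Assuming a Bernoulli variable parameterised by its mean \(p\) (a hypothetical setting chosen for illustration), the sample mean of \(n\) draws is unbiased and its variance should match the Cramér-Rao bound \(p(1-p)/n\), since the Fisher information per draw is \(1/(p(1-p))\).

```python
import numpy as np

rng = np.random.default_rng(0)
p_true = 0.3      # hypothetical success probability
n = 50            # draws per experiment
trials = 20_000   # repeated experiments

# Unbiased estimator: the sample mean of n Bernoulli draws.
samples = rng.random((trials, n)) < p_true
p_hat = samples.mean(axis=1)

# Fisher information per draw for the mean parameter p is 1 / (p (1 - p)),
# so the Cramér-Rao bound for n independent draws is p (1 - p) / n.
fisher_per_draw = 1.0 / (p_true * (1 - p_true))
cr_bound = 1.0 / (n * fisher_per_draw)

empirical_var = p_hat.var()
print(f"mean of p_hat = {p_hat.mean():.4f}, "
      f"empirical Var = {empirical_var:.5f}, Cramér-Rao bound = {cr_bound:.5f}")
```

The sample mean attains the bound here, so it is an efficient estimator. In the log-odds parameterisation used for the two-bin histogram the Fisher information is instead \(p(1-p)\), because Fisher information transforms with the square of \(\text{d}p/\text{d}\theta = p(1-p)\).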

In our context, the relationship between parameters \(\boldsymbol{\theta}(M)\) and capacity variables \(c(M)\) follows a similar bound. The Fisher information matrix for exponential family distributions has a special property: it equals the covariance of the sufficient statistics, which in our case are represented by the capacity variables \(c(M)\). This gives us \[ G(\boldsymbol{\theta}(M)) = \text{Cov}(c(M)) \]

Applying the Cramér-Rao inequality we have \[ \text{Cov}(\boldsymbol{\theta}(M)) \cdot \text{Cov}(c(M)) \geq G^{-1}(\boldsymbol{\theta}(M)) \cdot G(\boldsymbol{\theta}(M)) = \mathbf{I} \] where \(\mathbf{I}\) is the identity matrix.

For one-dimensional projections, this matrix inequality implies, \[ \text{Var}(\boldsymbol{\theta}(M)) \cdot \text{Var}(c(M)) \geq 1 \] and converting to standard deviations we have \[ \Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq 1. \]

When we incorporate the minimum mutual information constraint \(I_{\min}\), the bound tightens. Using the relationship between differential entropy and mutual information, we can derive \[ \Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq k, \] where \(k = \frac{1}{2\pi e}e^{2I_{\min}}\).

This is our uncertainty principle, directly derived from information-theoretic constraints and the Cramér-Rao bound. It represents the fundamental trade-off between precision in parameter specification and capacity for information storage.

Definition of Capacity Variables

We now provide a precise definition of the capacity variables \(c(M)\). The capacity variables quantify the potential of memory variables to store information about observable variables. Mathematically, we define \(c(M)\) as, \[ c(M) = \nabla_{\boldsymbol{\theta}} A(\boldsymbol{\theta}(M)) \] where \(A(\boldsymbol{\theta})\) is the log-partition function from our exponential family distribution. This definition has a clear interpretation: \(c(M)\) represents the expected values of the sufficient statistics under the current parameter values.

This definition also naturally yields the Fourier relationship between parameters and capacity. In exponential families, the parameters \(\boldsymbol{\theta}\) and the expected sufficient statistics \(\nabla_{\boldsymbol{\theta}} A\) are conjugate variables under the Legendre transform of the log-partition function, which is the mathematical basis for the Fourier duality we claim. Specifically, if we define the Fourier transform operator \(\mathcal{F}\) as the mapping that takes parameters to expected sufficient statistics, then: \[ c(M) = \mathcal{F}[\boldsymbol{\theta}(M)] \]

Capacity \(\leftrightarrow\) Precision Paradox

This creates an apparent paradox: at minimal entropy states, the information reservoir must simultaneously maintain precision in the parameters \(\boldsymbol{\theta}(M)\) (for accurate system representation) and provide sufficient capacity \(c(M)\) (for information storage).

The trade-off can be expressed as, \[ \Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq k, \] where \(k\) is a constant. This relationship can be recognised as a natural uncertainty principle that underpins the behaviour of the game. This principle is a necessary consequence of information theory. It follows from the requirement for the parameter-like states, \(M\), to have both precision and high capacity (in the Shannon sense). The uncertainty principle ensures that when parameters are sharply defined (low \(\Delta\boldsymbol{\theta}\)), the capacity variables have high uncertainty (high \(\Delta c\)), allowing information to be encoded in their relationships rather than absolute values.

This trade-off between precision and capacity directly parallels Shannon’s insights about information transmission (Shannon, 1948), where he demonstrated that increasing the precision of a signal requires increasing bandwidth or reducing noise immunity—creating an inherent trade-off in any communication system. Our formulation extends this principle to the information reservoir’s parameter space.

In practice this means that the parameters \(\boldsymbol{\theta}(M)\) and capacity variables \(c(M)\) must form a Fourier-dual pair, \[ c(M) = \mathcal{F}[\boldsymbol{\theta}(M)]. \] This duality becomes important at saddle points when direct gradient ascent stalls.

The mathematical formulation of the uncertainty principle comes from Hirschman Jr (1957) and later refined by Beckner (1975) and Białynicki-Birula and Mycielski (1975). These works demonstrated that Shannon’s information-theoretic entropy provides a natural framework for expressing the uncertainty principle, establishing a direct bridge between the mathematical formalism of quantum mechanics and information theory. Our capacity-precision trade-off follows this tradition, expressing the fundamental limits of information processing in our system.

Quantum vs Classical Information Reservoirs

The uncertainty principle means that the game can exhibit quantum-like information processing regimes during evolution. This inspires an information-theoretic perspective on the quantum-classical transition.

At minimal entropy states near the origin, the information reservoir has characteristics reminiscent of quantum systems.

Wave-like information encoding: The information reservoir near the origin necessarily encodes information in distributed, interference-capable patterns due to the uncertainty principle between parameters \(\boldsymbol{\theta}(M)\) and capacity variables \(c(M)\).

Non-local correlations: Parameters are highly correlated through the Fisher information matrix, creating structures where information is stored in relationships rather than individual variables.

Uncertainty-saturated regime: The uncertainty relationship \(\Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq k\) is nearly saturated (approaches equality), similar to Heisenberg’s uncertainty principle in quantum systems and the entropic uncertainty relations established by Białynicki-Birula and Mycielski (1975).

As the system evolves towards higher entropy states, a transition occurs where some variables exhibit classical behavior.

From wave-like to particle-like: Variables transitioning from \(M\) to \(X\) shift from storing information in interference patterns to storing it in definite values with statistical uncertainty.

Decoherence-like process: The uncertainty product \(\Delta\boldsymbol{\theta}(M) \cdot \Delta c(M)\) for these variables grows significantly larger than the minimum value \(k\), indicating a departure from quantum-like behavior.

Local information encoding: Information becomes increasingly encoded in local variables rather than distributed correlations.

The saddle points in our entropy landscape mark critical transitions between quantum-like and classical information processing regimes. Near these points:

The critically slowed modes maintain quantum-like characteristics, functioning as coherent memory that preserves information through interference patterns.

The rapidly evolving modes exhibit classical characteristics, functioning as incoherent processors that manipulate information through statistical operations.

This natural separation creates a hybrid computational architecture where quantum-like memory interfaces with classical-like processing.

The quantum-classical transition can be quantified using the moment generating function \(M_Z(t)\). In quantum-like regimes, the MGF exhibits oscillatory behavior with complex analytic structure, whereas in classical regimes, it grows monotonically with simple analytic structure. The transition between these behaviors identifies variables moving between quantum-like and classical information processing modes.

This perspective suggests that what we recognize as “quantum” versus “classical” behavior may fundamentally reflect different regimes of information processing - one optimized for coherent information storage (quantum-like) and the other for flexible information manipulation (classical-like). The emergence of both regimes from our entropy-maximizing model indicates that nature may exploit this computational architecture to optimize information processing across multiple scales.

This formulation of the uncertainty principle in terms of information capacity and parameter precision follows the tradition established by Shannon (1948) and expanded upon by Hirschman Jr (1957) and others who connected information entropy uncertainty to Heisenberg’s uncertainty.

Quantitative Demonstration

We can demonstrate this principle quantitatively through a simple model. Consider a two-dimensional system with memory variables \(M = (m_1, m_2)\) that map to parameters \(\boldsymbol{\theta}(M) = (\theta_1(m_1), \theta_2(m_2))\). The capacity variables are \(c(M) = (c_1(m_1), c_2(m_2))\).

At minimal entropy, when the system is near the origin, the uncertainty product is exactly: \[ \Delta\theta_i(m_i) \cdot \Delta c_i(m_i) = k \] for each dimension \(i\).

As the system evolves and entropy increases, some variables transition to classical behavior with: \[ \Delta\theta_i(m_i) \cdot \Delta c_i(m_i) \gg k \]

This increased product reflects the transition from quantum-like to classical information processing. The variables that maintain the minimal uncertainty product \(k\) continue to function as coherent information reservoirs, while those with larger uncertainty products function as classical processors.

This principle provides testable predictions for any system modeled as an information reservoir. Specifically, we predict that variables functioning as effective memory must demonstrate precision-capacity trade-offs near the theoretical minimum \(k\), while processing variables will show excess uncertainty above this minimum.

Maximum Entropy and Density Matrices

In Jaynes (1957), Jaynes showed how the maximum entropy formalism is applied; in later papers such as Jaynes (1963), he showed how his maximum entropy formalism could be applied to the von Neumann entropy of a density matrix.

As Jaynes noted in his 1962 Brandeis lectures: “Assignment of initial probabilities must, in order to be useful, agree with the initial information we have (i.e., the results of measurements of certain parameters). For example, we might know that at time \(t = 0\), a nuclear spin system having total (measured) magnetic moment \(M(0)\), is placed in a magnetic field \(H\), and the problem is to predict the subsequent variation \(M(t)\)… What initial density matrix for the spin system \(\rho(0)\), should we use?”

Jaynes recognized that we should choose the density matrix that maximizes the von Neumann entropy, \[\begin{align} S = -\text{Tr}(\rho \log \rho), \end{align}\] subject to constraints from our measurements, \[\begin{align} \text{Tr}(\rho M_{op}) = M(0), \end{align}\] where \(M_{op}\) is the operator corresponding to total magnetic moment.

The solution is the quantum version of the maximum entropy distribution, \[\begin{align} \rho = \frac{1}{Z}\exp(-\lambda_1 A_1 - \lambda_2 A_2 - \cdots - \lambda_m A_m), \end{align}\] where \(A_i\) are the operators corresponding to measured observables, \(\lambda_i\) are Lagrange multipliers, and \(Z = \text{Tr}[\exp(-\lambda_1 A_1 - \cdots - \lambda_m A_m)]\) is the partition function.

This unifies classical entropies and density matrix entropies under the same information-theoretic principle. It clarifies that quantum states with minimum entropy (pure states) represent maximum information, while mixed states represent incomplete information.
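Jaynes’ recipe can be sketched for the smallest non-trivial case: a single spin-1/2 with one measured observable, taken here for illustration to be the Pauli operator \(\sigma_x\), with a hypothetical measured value \(\text{Tr}(\rho\sigma_x) = 0.6\). The multiplier \(\lambda\) is found by bisection, and the matrix exponential is computed from an eigendecomposition since the operator is Hermitian. For this case \(\text{Tr}(\rho\sigma_x) = -\tanh\lambda\), so the solver should return \(\lambda = -\ln 2\), which gives an independent check.

```python
import numpy as np

def expm_hermitian(H):
    """Matrix exponential of a Hermitian matrix via its eigendecomposition."""
    w, V = np.linalg.eigh(H)
    return (V * np.exp(w)) @ V.conj().T

sigma_x = np.array([[0, 1], [1, 0]], dtype=complex)  # illustrative observable
measured = 0.6  # hypothetical measured value of Tr(rho sigma_x)

def maxent_state(lam, A):
    """rho = exp(-lam A) / Z with Z = Tr exp(-lam A)."""
    unnormalised = expm_hermitian(-lam * A)
    return unnormalised / np.trace(unnormalised).real

def expectation(rho, A):
    return np.trace(rho @ A).real

def solve_lambda(A, target, lo=-20.0, hi=20.0, iters=200):
    """Bisection: for a single observable, <A> decreases as lam increases."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if expectation(maxent_state(mid, A), A) > target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

lam = solve_lambda(sigma_x, measured)
rho = maxent_state(lam, sigma_x)
eigs = np.linalg.eigvalsh(rho)
S = -(eigs * np.log(eigs)).sum()  # von Neumann entropy in nats
print(f"lambda = {lam:.4f}, <sigma_x> = {expectation(rho, sigma_x):.4f}, S = {S:.4f}")
```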

Jaynes further noted that “strictly speaking, all this should be restated in terms of quantum theory using the density matrix formalism. This will introduce the \(N!\) permutation factor, a natural zero for entropy, alteration of numerical values if discreteness of energy levels becomes comparable to \(k_BT\), etc.”

Quantum States and Exponential Families

The minimal entropy quantum states provide a connection between density matrices and exponential family distributions. This connection enables us to use many of the classical techniques from information geometry and apply them to the game in the case where the uncertainty principle is present.

The minimal entropy density matrix belongs to an exponential family, just like many classical distributions,

Classical Exponential Family

\[ f(x; \theta) = h(x) \cdot \exp[\eta(\theta)^\top \cdot T(x) - A(\theta)] \]

Quantum Minimal Entropy State

\[ \rho = \exp(-\mathbf{R}^\top \cdot \mathbf{G} \cdot \mathbf{R} - Z) \]

- Both have an exponential form

- Both involve sufficient statistics (in the quantum case, these are quadratic forms of operators)

- Both have natural parameters (G in the quantum case)

- Both include a normalization term

The matrix \(G\) in the minimal entropy state is directly related to the ‘quantum Fisher information matrix,’ \[ \mathbf{G} = \text{QFIM}/4 \] where QFIM is the quantum Fisher information matrix, which quantifies how sensitively the state responds to parameter changes.

This creates a link between

- Minimal entropy (maximum order)

- Uncertainty (fundamental quantum limitations)

- Information (ability to estimate parameters precisely)

The relationship implies, \[ V \cdot \text{QFIM} \geq \frac{\hbar^2}{4} \] which connects the covariance matrix (uncertainties) to the Fisher information (precision in parameter estimation).

These minimal entropy states may be physically related to squeezed states in quantum optics. They are the states that achieve the ultimate precision allowed by quantum mechanics.

Minimal Entropy States

In Jaynes’ World, we begin at a minimal entropy configuration - the “origin” state. Understanding the properties of these minimal entropy states is crucial for characterizing how the system evolves. These states are constrained by the uncertainty principle we previously identified: \(\Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq k\).

This constraint is reminiscent of the Heisenberg uncertainty principle in quantum mechanics, where \(\Delta x \cdot \Delta p \geq \hbar/2\). This isn’t a coincidence; both represent limitations on precision arising from the mathematical structure of information.

Structure of Minimal Entropy States

The minimal entropy configuration under the uncertainty constraint takes a specific mathematical form. It is a pure state (in the sense of having minimal possible entropy, \(S(Z) = 0\)) that exactly saturates the uncertainty bound. For a system with multiple degrees of freedom, the distribution takes a Gaussian form, \[ \rho(Z) = \frac{1}{\mathcal{Z}}\exp(-\mathbf{R}^\top \cdot \boldsymbol{\Lambda} \cdot \mathbf{R}), \] where \(\mathbf{R}\) represents the vector of all variables, \(\boldsymbol{\Lambda}\) is the precision matrix (inverse covariance) constrained by the uncertainty principle, and \(\mathcal{Z}\) is the partition function (normalization constant).

This form is an exponential family distribution, in line with Jaynes’ principle that entropy-optimized distributions belong to the exponential family. The precision matrix \(\boldsymbol{\Lambda}\) determines how uncertainty is distributed among different variables and their correlations. Importantly, while minimal entropy states have \(S(Z) = 0\), the total entropy of the system is constrained to be between 0 and \(N\) as it evolves, forming a compact manifold with respect to its parameters. This upper bound \(N\) ensures that as the system evolves from minimal to maximal entropy, it remains within a well-defined entropy space.

Fisher Information and Minimal Uncertainty

There’s a connection between the precision matrix \(\boldsymbol{\Lambda}\) and the Fisher information matrix \(\mathbf{G}\). For a multivariate Gaussian distribution, the Fisher information matrix is proportional to the precision matrix: \(\mathbf{G} = \boldsymbol{\Lambda}/2\). This creates the relationship, \[ \mathbf{V} \cdot \mathbf{G} \geq k^2 \] where \(\mathbf{V}\) is the covariance matrix containing all uncertainties and correlations. This inequality connects the covariance (uncertainties) to the Fisher information (precision in parameter estimation).

Connection to Information Reservoirs

Implications for System Evolution

As Jaynes’ World evolves from its minimal entropy state toward maximum entropy, we expect transitions to occur:

Uncertainty desaturation: The uncertainty relationship \(\Delta\boldsymbol{\theta}(M) \cdot \Delta c(M) \geq k\) becomes less tightly saturated, with the product growing larger than the minimum value.

Physical Interpretation

Understanding these minimal entropy states provides insight into the starting point of Jaynes’ World and illuminates how the system will evolve toward maximum entropy. The uncertainty principle we’ve identified represents not just a mathematical constraint but a fundamental limitation on how information can be structured in any system.

The concept of minimal entropy states has an analog in quantum mechanics. What is the most ordered state possible that still respects the quantum uncertainty principle?

In quantum mechanics, a system’s state is described by a density matrix \(\rho\), which is analogous to a probability distribution in classical statistics. Key properties include,

- Hermitian: \(\rho = \rho^\dagger\) (like how probability distributions are real-valued)

- Positive semi-definite: \(\rho \geq 0\) (probabilities can’t be negative)

- Unit trace: \(\text{Tr}(\rho) = 1\) (total probability sums to 1)

For pure quantum states (states with complete information), \(\rho = |\psi\rangle\langle\psi|\) where \(|\psi\rangle\) is a state vector.
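The three defining properties listed above can be checked numerically. The purity \(\text{Tr}(\rho^2)\) can be checked too: it equals 1 exactly for pure states and is below 1 for mixed ones. This is a minimal NumPy sketch. The function names is_density_matrix and purity, and the example states, are illustrative.

```python
import numpy as np

def is_density_matrix(rho, tol=1e-10):
    """Check Hermiticity, positive semi-definiteness and unit trace."""
    hermitian = np.allclose(rho, rho.conj().T, atol=tol)
    # eigvalsh assumes a Hermitian matrix, so only inspect eigenvalues if it is one
    positive = hermitian and np.linalg.eigvalsh(rho).min() >= -tol
    unit_trace = abs(np.trace(rho) - 1.0) < tol
    return bool(hermitian and positive and unit_trace)

def purity(rho):
    """Tr(rho^2): equals 1 for a pure state and is below 1 for a mixed state."""
    return float(np.real(np.trace(rho @ rho)))

# Pure state rho = |psi><psi| built from a normalized state vector
psi = np.array([1.0, 1.0j]) / np.sqrt(2)
rho_pure = np.outer(psi, psi.conj())

# Mixed state: an equal classical mixture of the two basis states
rho_mixed = np.diag([0.5, 0.5])

# Hermitian with unit trace, but with a negative "probability"
rho_bad = np.diag([1.5, -0.5])

for name, rho in [("pure", rho_pure), ("mixed", rho_mixed), ("bad", rho_bad)]:
    print(name, is_density_matrix(rho), purity(rho))
```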

The density matrix analog of Shannon entropy is von Neumann entropy, \[ S(\rho) = -\text{Tr}(\rho \ln \rho) \] This measures the amount of “mixedness” or uncertainty in a quantum state. Pure states have zero entropy, representing complete certainty about the quantum state (within the constraints of quantum mechanics). Mixed states have positive entropy, indicating some level of classical uncertainty.
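Because \(\rho\) is Hermitian, \(S(\rho)\) can be computed from its eigenvalues \(p_i\) as \(-\sum_i p_i \ln p_i\). This is the Shannon entropy of the spectrum. The following is a minimal sketch with an illustrative function name and example states.

```python
import numpy as np

def von_neumann_entropy(rho, tol=1e-12):
    """S(rho) = -Tr(rho ln rho), evaluated on the eigenvalues of rho."""
    p = np.linalg.eigvalsh(rho)
    p = p[p > tol]                      # drop zero eigenvalues, using 0 ln 0 = 0
    return float(-np.sum(p * np.log(p)))

psi = np.array([1.0, 1.0j]) / np.sqrt(2)
rho_pure = np.outer(psi, psi.conj())    # pure state |psi><psi|
rho_mixed = np.eye(2) / 2               # maximally mixed two-level state

print(von_neumann_entropy(rho_pure))    # close to 0
print(von_neumann_entropy(rho_mixed))   # ln 2, about 0.693
```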

Quantum mechanics imposes fundamental limits on precision through the uncertainty principle. For position (\(x\)) and momentum (\(p\)), \[ \Delta x \cdot \Delta p \geq \frac{\hbar}{2} \] where \(\hbar\) (Planck’s constant divided by \(2\pi\)) sets the scale of quantum effects.

For multiple variables, this generalizes to a matrix inequality, \[ V + i\frac{\hbar}{2}\Omega \geq 0, \] where \(V\) is the covariance matrix containing all the uncertainties and correlations, and \(\Omega\) is the symplectic form representing the canonical commutation relations.
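To test the matrix inequality, check that the smallest eigenvalue of the Hermitian matrix \(V + i\frac{\hbar}{2}\Omega\) is non-negative. The sketch below assumes the variables are ordered as \((x_1, p_1, x_2, p_2, \ldots)\), so \(\Omega\) is block diagonal with blocks \(\begin{pmatrix} 0 & 1 \\ -1 & 0 \end{pmatrix}\). It also sets \(\hbar = 1\). For a single mode with diagonal covariance, the test reduces to \(\Delta x \cdot \Delta p \geq \hbar/2\). The function names are illustrative.

```python
import numpy as np

hbar = 1.0

def symplectic_form(n_modes):
    """Omega for ordering (x1, p1, x2, p2, ...): block diagonal in [[0, 1], [-1, 0]]."""
    J = np.array([[0.0, 1.0], [-1.0, 0.0]])
    return np.kron(np.eye(n_modes), J)

def satisfies_uncertainty(V, tol=1e-10):
    """True if V + i (hbar/2) Omega is positive semi-definite."""
    n_modes = V.shape[0] // 2
    # Hermitian because V is real symmetric and Omega is real antisymmetric
    M = V + 1j * (hbar / 2) * symplectic_form(n_modes)
    return bool(np.linalg.eigvalsh(M).min() >= -tol)

def single_mode(dx, dp):
    """Diagonal covariance diag(dx^2, dp^2) for one position-momentum pair."""
    return np.diag([dx**2, dp**2])

print(satisfies_uncertainty(single_mode(np.sqrt(0.5), np.sqrt(0.5))))  # dx*dp = 0.5: saturates the bound
print(satisfies_uncertainty(single_mode(2.0, 0.25)))                   # dx*dp = 0.5: squeezed, still allowed
print(satisfies_uncertainty(single_mode(0.5, 0.5)))                    # dx*dp = 0.25: violates the bound
```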

This constraint sets an irreducible minimum on the uncertainty possible in the system; it establishes the minimum “information state” of the system.

Density Matrices and Exponential Families

The minimal entropy state provides a connection between density matrices and exponential family distributions. This connection enables us to use many of the classical techniques from information geometry and apply them to the game in the case where the uncertainty principle is present.

The minimal entropy density matrix belongs to an exponential family, just like many classical distributions.

- Both have an exponential form

- Both involve sufficient statistics (in the quantum case, these are quadratic forms of operators)

- Both have natural parameters (\(G\) in the quantum case)

- Both include a normalization term

Classical Exponential Family

\[ f(x; \theta) = h(x) \cdot \exp[\eta(\theta)^\top \cdot T(x) - A(\theta)] \]

Quantum Minimal Entropy State

\[ \rho = \exp(-\mathbf{R}^\top \cdot \mathbf{G} \cdot \mathbf{R} - Z) \]
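To make the correspondence concrete, consider the zero-mean Gaussian form from the minimal entropy configuration. It is written with \(\boldsymbol{\Lambda}\) where the quantum expression has \(\mathbf{G}\), and \(A\) denotes the log-normalizer. The identity \(\mathbf{R}^\top\boldsymbol{\Lambda}\mathbf{R} = \text{Tr}(\boldsymbol{\Lambda}\mathbf{R}\mathbf{R}^\top)\) lets it match the classical template term by term. This is a standard identification, spelled out here as an illustration,
\[ \rho(\mathbf{R}) = \exp\left(-\mathbf{R}^\top \boldsymbol{\Lambda} \mathbf{R} - A(\boldsymbol{\Lambda})\right) = \exp\left(\text{Tr}\left[(-\boldsymbol{\Lambda})\,\mathbf{R}\mathbf{R}^\top\right] - A(\boldsymbol{\Lambda})\right), \]
so that \(h(\mathbf{R}) = 1\), the sufficient statistic is the quadratic form \(T(\mathbf{R}) = \mathbf{R}\mathbf{R}^\top\), the natural parameter is \(\eta = -\boldsymbol{\Lambda}\), and the log-normalizer is
\[ A(\boldsymbol{\Lambda}) = \ln \mathcal{Z} = \frac{n}{2}\ln \pi - \frac{1}{2}\ln\det\boldsymbol{\Lambda}, \]
where \(n\) is the number of variables in \(\mathbf{R}\).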

Gradient Ascent and Uncertainty Principles

In our exploration of information dynamics, we now turn to the relationship between gradient ascent on entropy and uncertainty principles. This section demonstrates how systems naturally evolve from quantum-like states (with minimal uncertainty) toward classical-like states (with excess uncertainty) through entropy maximization.

For simplicity, we’ll focus on multivariate Gaussian distributions, where the uncertainty relationships are particularly elegant. In this setting, the precision matrix \(\Lambda\) (inverse of the covariance matrix) fully characterizes the distribution. The entropy of a multivariate Gaussian is directly related to the determinant of the covariance matrix, \[ S = \frac{1}{2}\log\det(V) + \text{constant}, \] where \(V = \Lambda^{-1}\) is the covariance matrix.

For conjugate variables like position and momentum, the Heisenberg uncertainty principle imposes constraints on the minimum product of their uncertainties. In our information-theoretic framework, this appears as a constraint on the determinant of certain submatrices of the covariance matrix.

```python
import numpy as np
from scipy.linalg import eigh
import matplotlib.pyplot as plt
from matplotlib.patches import Ellipse
```

The code below implements gradient ascent on the entropy of a multivariate Gaussian system while respecting uncertainty constraints. We’ll track how the system evolves from minimal uncertainty states (quantum-like) to states with excess uncertainty (classical-like).

First, we define key functions for computing entropy and its gradient.

```python
# Constants
hbar = 1.0  # Normalized Planck's constant
min_uncertainty_product = hbar/2
```

The compute_entropy function calculates the entropy of a multivariate Gaussian distribution from its precision matrix. The compute_entropy_gradient function computes the gradient of entropy with respect to the precision matrix, which is essential for our gradient ascent procedure.
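Here is a minimal sketch of these two functions. It assumes the usual convention that \(\boldsymbol{\Lambda}\) is the inverse covariance, so \(V = \Lambda^{-1}\) as in the entropy formula above. It also takes the additive constant to be the standard \(\frac{n}{2}(1 + \ln 2\pi)\) for \(n\) variables. It is a reconstruction consistent with that formula, not necessarily the original implementation. The gradient uses \(\partial \ln\det\Lambda / \partial\Lambda = \Lambda^{-1}\) for symmetric \(\Lambda\).

```python
import numpy as np

def compute_entropy(Lambda):
    """Differential entropy of a Gaussian with precision Lambda (covariance V = Lambda^-1).

    S = 1/2 log det V + n/2 (1 + log 2 pi) = -1/2 log det Lambda + n/2 (1 + log 2 pi)
    """
    n = Lambda.shape[0]
    sign, logdet = np.linalg.slogdet(Lambda)
    if sign <= 0:
        raise ValueError("Lambda must be positive definite")
    return -0.5 * logdet + 0.5 * n * (1.0 + np.log(2.0 * np.pi))

def compute_entropy_gradient(Lambda):
    """Gradient of S with respect to Lambda: dS/dLambda = -1/2 Lambda^-1 = -V/2."""
    return -0.5 * np.linalg.inv(Lambda)

# Usage: compare the analytic gradient with a central finite difference
Lambda = np.array([[2.0, 0.3], [0.3, 0.5]])
E = np.zeros_like(Lambda)
E[0, 0] = 1.0                       # perturb a single diagonal entry
eps = 1e-6
fd = (compute_entropy(Lambda + eps * E) - compute_entropy(Lambda - eps * E)) / (2 * eps)
print(fd, compute_entropy_gradient(Lambda)[0, 0])
```

Because the gradient is \(-V/2\), ascending it always lowers the precision and raises the entropy.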

Next, we implement functions to handle the constraints imposed by the uncertainty principle:

The project_gradient function ensures that our gradient ascent respects the uncertainty principle by projecting the gradient to maintain minimum uncertainty products when necessary. The initialize_multidimensional_state function creates a starting state with multiple position-momentum pairs, each initialized to the minimum uncertainty allowed by the uncertainty principle, but with different “squeeze factors” that determine the shape of the uncertainty ellipse.
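The following is a hypothetical sketch of these two helpers. Only the call project_gradient(eigenvalues, grad) is fixed by the code that uses it; the signature of initialize_multidimensional_state is an assumption. Like gradient_ascent_entropy, the sketch assumes eigenvalues are grouped in consecutive pairs. It treats a pair of precision eigenvalues \((\lambda_a, \lambda_b)\) as having uncertainty product \(1/\sqrt{\lambda_a\lambda_b}\), so the constraint becomes \(\lambda_a\lambda_b \leq (2/\hbar)^2\). When a pair sits on that boundary and a step would tighten it, the sketch removes the component of the gradient normal to the surface \(\ln(\lambda_a\lambda_b) = \text{const}\). The constants are redefined so the block runs on its own.

```python
import numpy as np

hbar = 1.0
min_uncertainty_product = hbar / 2

def project_gradient(eigenvalues, gradient, rtol=1e-3):
    """Hypothetical sketch: remove gradient components that would push a pair below the bound.

    Assumes eigenvalues come in consecutive pairs (2i, 2i+1) and that a pair's
    uncertainty product is 1 / sqrt(lambda_a * lambda_b).
    """
    projected = np.array(gradient, dtype=float)
    max_product = 1.0 / min_uncertainty_product**2      # largest allowed lambda_a * lambda_b
    for i in range(len(eigenvalues) // 2):
        a, b = 2 * i, 2 * i + 1
        lam_a, lam_b = eigenvalues[a], eigenvalues[b]
        at_bound = lam_a * lam_b >= max_product * (1 - rtol)
        # Normal to the constraint surface log(lam_a * lam_b) = const
        normal = np.array([1.0 / lam_a, 1.0 / lam_b])
        g = projected[[a, b]]
        if at_bound and g @ normal > 0:                 # this step would tighten the bound
            projected[[a, b]] = g - (g @ normal) / (normal @ normal) * normal
    return projected

def initialize_multidimensional_state(n_pairs=3, squeeze_factors=None):
    """Hypothetical sketch: block-diagonal precision, each (x, p) pair at minimum uncertainty.

    For squeeze factor s: var_x = (hbar/2) s^2 and var_p = (hbar/2) / s^2,
    so sqrt(var_x * var_p) = hbar/2 exactly.
    """
    if squeeze_factors is None:
        squeeze_factors = np.linspace(0.5, 2.0, n_pairs)
    Lambda = np.zeros((2 * n_pairs, 2 * n_pairs))
    for i, s in enumerate(squeeze_factors):
        Lambda[2 * i, 2 * i] = 1.0 / (min_uncertainty_product * s**2)
        Lambda[2 * i + 1, 2 * i + 1] = 1.0 / (min_uncertainty_product / s**2)
    return Lambda

# Usage: every pair starts exactly at the minimum uncertainty product
Lambda0 = initialize_multidimensional_state(n_pairs=2, squeeze_factors=[1.0, 2.0])
V0 = np.linalg.inv(Lambda0)
products = [np.sqrt(np.linalg.det(V0[2*i:2*i+2, 2*i:2*i+2])) for i in range(2)]
print(products)
```

With the pure entropy gradient, every component is negative, because it lowers each precision eigenvalue. In that case the projection leaves the step unchanged. It only matters if some other term pushes precisions upward at the boundary.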

Now we implement the main gradient ascent procedure.

```python
# Perform gradient ascent on entropy
def gradient_ascent_entropy(Lambda_init, n_steps=100, learning_rate=0.01):
    """
    Perform gradient ascent on entropy while respecting uncertainty constraints.

    Parameters:
    -----------
    Lambda_init: array
        Initial precision matrix
    n_steps: int
        Number of gradient steps
    learning_rate: float
        Learning rate for gradient ascent

    Returns:
    --------
    Lambda_history: list
        History of precision matrices
    entropy_history: list
        History of entropy values
    """
    Lambda = Lambda_init.copy()
    Lambda_history = [Lambda.copy()]
    entropy_history = [compute_entropy(Lambda)]

    for step in range(n_steps):
        # Compute gradient of entropy
        grad_matrix = compute_entropy_gradient(Lambda)

        # Diagonalize Lambda to work with eigenvalues
        eigenvalues, eigenvectors = eigh(Lambda)

        # Transform gradient to eigenvalue space
        grad = np.diag(eigenvectors.T @ grad_matrix @ eigenvectors)

        # Project gradient to respect constraints
        proj_grad = project_gradient(eigenvalues, grad)

        # Update eigenvalues
        eigenvalues += learning_rate * proj_grad

        # Ensure eigenvalues remain positive
        eigenvalues = np.maximum(eigenvalues, 1e-10)

        # Reconstruct Lambda from updated eigenvalues
        Lambda = eigenvectors @ np.diag(eigenvalues) @ eigenvectors.T

        # Store history
        Lambda_history.append(Lambda.copy())
        entropy_history.append(compute_entropy(Lambda))

    return Lambda_history, entropy_history
```

The gradient_ascent_entropy function implements the core optimization procedure. It performs gradient ascent on the entropy while respecting the uncertainty constraints. The algorithm works in the eigenvalue space of the precision matrix, which makes it easier to enforce constraints and ensure the matrix remains positive definite.
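Working in the eigenbasis is natural here because the entropy gradient \(-\frac{1}{2}\Lambda^{-1}\) shares eigenvectors with \(\Lambda\). Transformed into that basis, it becomes a diagonal matrix with entries \(-1/(2\lambda_i)\). Each step therefore shrinks every precision eigenvalue while the eigenvectors stay fixed. The self-contained sketch below checks this on a random positive definite matrix. It uses the entropy up to an additive constant and omits the uncertainty projection; the seed, size and step size are arbitrary.

```python
import numpy as np

def entropy(Lambda):
    # Gaussian entropy up to an additive constant: S = -1/2 log det Lambda
    return -0.5 * np.linalg.slogdet(Lambda)[1]

rng = np.random.default_rng(0)
A = rng.normal(size=(4, 4))
Lambda = A @ A.T + 4 * np.eye(4)          # arbitrary positive definite precision
lr = 0.01
entropies = [entropy(Lambda)]

for _ in range(50):
    grad_matrix = -0.5 * np.linalg.inv(Lambda)          # dS/dLambda
    eigvals, Q = np.linalg.eigh(Lambda)
    grad = np.diag(Q.T @ grad_matrix @ Q)               # gradient in eigenvalue space
    assert np.allclose(grad, -0.5 / eigvals)            # equals -1/(2 lambda_i)
    eigvals = np.maximum(eigvals + lr * grad, 1e-10)
    Lambda = Q @ np.diag(eigvals) @ Q.T
    entropies.append(entropy(Lambda))

print(np.all(np.diff(entropies) > 0))     # entropy increases at every step
```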

To analyze the results, we implement functions to track uncertainty metrics and detect interesting dynamics:

```python
# Track uncertainty products and regime classification
def track_uncertainty_metrics(Lambda_history):
    """
    Track uncertainty products and classify regimes for each conjugate pair.

    Parameters:
    -----------
    Lambda_history: list
        History of precision matrices

    Returns:
    --------
    metrics: dict
        Dictionary containing uncertainty metrics over time
    """
    n_steps = len(Lambda_history)
    n_pairs = Lambda_history[0].shape[0] // 2

    # Initialize tracking arrays
    uncertainty_products = np.zeros((n_steps, n_pairs))
    regimes = np.zeros((n_steps, n_pairs), dtype=object)

    for step, Lambda in enumerate(Lambda_history):
        # Get covariance matrix
        V = np.linalg.inv(Lambda)

        # Fisher information matrix (G = Lambda/2, as in the text); not used below
        G = Lambda / 2

        # For each conjugate pair
        for i in range(n_pairs):
            # Extract 2x2 submatrix for this pair
            idx1, idx2 = 2*i, 2*i+1
            V_sub = V[np.ix_([idx1, idx2], [idx1, idx2])]

            # Uncertainty product: square root of the determinant of the 2x2 submatrix
            uncertainty_product = np.sqrt(np.linalg.det(V_sub))
            uncertainty_products[step, i] = uncertainty_product

            # Classify regime: within 10% of the minimum counts as quantum-like
            if abs(uncertainty_product - min_uncertainty_product) < 0.1*min_uncertainty_product:
                regimes[step, i] = "Quantum-like"
            else:
                regimes[step, i] = "Classical-like"

    return {
        'uncertainty_products': uncertainty_products,
        'regimes': regimes
    }
```

The track_uncertainty_metrics function analyzes the evolution of uncertainty products for each position-momentum pair and classifies them as either “quantum-like” (near minimum uncertainty) or “classical-like” (with excess uncertainty). This classification helps us understand how the system transitions between these regimes during entropy maximization.
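The uncertainty product above is \(\sqrt{\det V_{\text{sub}}}\), not the product of the diagonal standard deviations. This choice matches the Robertson–Schrödinger form of the bound, \(\Delta x^2 \Delta p^2 - \text{Cov}(x,p)^2 \geq \hbar^2/4\). As a result, the determinant is unchanged when an uncertainty ellipse is rotated in the \((x, p)\) plane, whereas the naive product of diagonal entries is not. The sketch below illustrates this for a squeezed minimum-uncertainty state with \(\hbar = 1\). The squeeze factor and rotation angle are arbitrary.

```python
import numpy as np

hbar = 1.0
s = 2.0                                            # squeeze factor (illustrative)
V = np.diag([hbar / 2 * s**2, hbar / 2 / s**2])    # Delta x * Delta p = hbar/2 exactly

theta = np.pi / 5                                  # arbitrary rotation in phase space
Rot = np.array([[np.cos(theta), -np.sin(theta)],
                [np.sin(theta),  np.cos(theta)]])
V_rot = Rot @ V @ Rot.T                            # correlated (rotated) covariance

det_product = np.sqrt(np.linalg.det(V_rot))        # unchanged by rotation: stays hbar/2
diag_product = np.sqrt(V_rot[0, 0] * V_rot[1, 1])  # naive Delta x * Delta p: exceeds hbar/2
print(det_product, diag_product)
```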

We also implement a function to detect saddle points in the gradient flow, which are critical for understanding the system’s dynamics:

```python
# Detect saddle points in the gradient flow
def detect_saddle_points(Lambda_history):
    """
    Detect saddle-like behavior in the gradient flow.

    Parameters:
    -----------
    Lambda_history: list
        History of precision matrices

    Returns:
    --------
    saddle_metrics: dict
        Metrics related to saddle point behavior
    """
    n_steps = len(Lambda_history)
    n_pairs = Lambda_history[0].shape[0] // 2

    # Track eigenvalues and their gradients
    eigenvalues_history = np.zeros((n_steps, 2*n_pairs))
    gradient_ratios = np.zeros((n_steps, n_pairs))

    for step, Lambda in enumerate(Lambda_history):
        # Get eigenvalues
        eigenvalues, _ = eigh(Lambda)
        eigenvalues_history[step] = eigenvalues

        # For each pair, compute ratio of gradients
        if step > 0:
            for i in range(n_pairs):
                idx1, idx2 = 2*i, 2*i+1

                # Change in eigenvalues
                delta1 = abs(eigenvalues_history[step, idx1] - eigenvalues_history[step-1, idx1])
                delta2 = abs(eigenvalues_history[step, idx2] - eigenvalues_history[step-1, idx2])

                # Ratio of max to min (high ratio indicates saddle-like behavior)
                max_delta = max(delta1, delta2)
                min_delta = max(1e-10, min(delta1, delta2))  # Avoid division by zero
                gradient_ratios[step, i] = max_delta / min_delta

    # Identify candidate saddle points (where some gradients are much larger than others)
    saddle_candidates = []
    for step in range(1, n_steps):
        if np.any(gradient_ratios[step] > 10):  # Threshold for saddle-like behavior
            saddle_candidates.append(step)

    return {
        'eigenvalues_history': eigenvalues_history,
        'gradient_ratios': gradient_ratios,
        'saddle_candidates': saddle_candidates
    }
```

The detect_saddle_points function identifies points in the gradient flow where some eigenvalues change much faster than others, indicating saddle-like behavior. These saddle points are important because they represent critical transitions in the system’s evolution.
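To get a feel for the threshold of 10, consider a single minimum-uncertainty pair with squeeze factor \(s\). Assume its precision eigenvalues are \((2/\hbar)/s^2\) and \((2/\hbar)s^2\); this parameterization is an assumption about how states are initialized. Ignoring the projection, one entropy-ascent step changes each eigenvalue by \(-\eta/(2\lambda)\). The ratio of the two changes is then \(\lambda_{\max}/\lambda_{\min} = s^4\), so the threshold is crossed once \(s\) exceeds \(10^{1/4} \approx 1.78\). The sketch below computes this first-step ratio directly. The function name, learning rate and squeeze values are illustrative.

```python
import numpy as np

hbar = 1.0
lr = 0.01

def first_step_ratio(s):
    """Larger/smaller eigenvalue change after one unconstrained ascent step for one pair."""
    lam = np.array([2 / (hbar * s**2), 2 * s**2 / hbar])   # precision eigenvalues of the pair
    delta = np.abs(lr * (-0.5 / lam))                        # |change| = lr / (2 lambda)
    return delta.max() / delta.min()

for s in [1.0, 1.5, 1.8, 3.0]:
    print(s, first_step_ratio(s), s**4)
```

Under these assumptions, strongly squeezed pairs are flagged as saddle-like from the very first step. Unsqueezed pairs (\(s = 1\)) are not.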

Finally, we implement visualization functions to help us understand the system’s behavior:

```python
# Visualize uncertainty ellipses for multiple pairs
def plot_multidimensional_uncertainty(Lambda_history, step_indices, pairs_to_plot=None):
    """
    Plot the evolution of uncertainty ellipses for multiple position-momentum pairs.

    Parameters:
    -----------
    Lambda_history: list
        History of precision matrices
    step_indices: list
        Indices of steps to visualize
    pairs_to_plot: list, optional
        Indices of position-momentum pairs to plot
    """
    n_pairs = Lambda_history[0].shape[0] // 2
    if pairs_to_plot is None:
        pairs_to_plot = range(min(3, n_pairs))  # Plot up to 3 pairs by default

    # squeeze=False always returns a 2D array of axes, even for a single pair and a single step
    fig, axes = plt.subplots(len(pairs_to_plot), len(step_indices),
                             figsize=(4*len(step_indices), 3*len(pairs_to_plot)),
                             squeeze=False)

    for row, pair_idx in enumerate(pairs_to_plot):
        for col, step in enumerate(step_indices):
            ax = axes[row, col]
            Lambda = Lambda_history[step]
            covariance = np.linalg.inv(Lambda)

            # Extract 2x2 submatrix for this pair
            idx1, idx2 = 2*pair_idx, 2*pair_idx+1
            cov_sub = covariance[np.ix_([idx1, idx2], [idx1, idx2])]

            # Get eigenvalues and eigenvectors of submatrix
            values, vectors = eigh(cov_sub)

            # Calculate ellipse parameters (full axis lengths are twice the standard deviations)
            angle = np.degrees(np.arctan2(vectors[1, 0], vectors[0, 0]))
            width, height = 2 * np.sqrt(values)

            # Create ellipse
            ellipse = Ellipse((0, 0), width=width, height=height, angle=angle,
                              edgecolor='blue', facecolor='lightblue', alpha=0.5)

            # Add to plot
            ax.add_patch(ellipse)
            ax.set_xlim(-3, 3)
            ax.set_ylim(-3, 3)
            ax.set_aspect('equal')
            ax.grid(True)

            # Add minimum uncertainty circle: a symmetric minimum-uncertainty state has
            # standard deviation sqrt(hbar/2) in every direction, so that is the radius
            min_circle = plt.Circle((0, 0), np.sqrt(min_uncertainty_product),
                                    fill=False, color='red', linestyle='--')
            ax.add_patch(min_circle)

            # Compute uncertainty product
            uncertainty_product = np.sqrt(np.linalg.det(cov_sub))

            # Determine regime
            if abs(uncertainty_product - min_uncertainty_product) < 0.1*min_uncertainty_product:
                regime = "Quantum-like"
                color = 'red'
            else:
                regime = "Classical-like"
                color = 'blue'

            # Add labels
            if row == 0:
                ax.set_title(f"Step {step}")
            if col == 0:
                ax.set_ylabel(f"Pair {pair_idx+1}")

            # Add uncertainty product text
            ax.text(0.05, 0.95, f"ΔxΔp = {uncertainty_product:.2f}",
                    transform=ax.transAxes, fontsize=10, verticalalignment='top')

            # Add regime text
```