The Atomic Human

Abstract

A vital perspective is missing from the discussions we’re having about Artificial Intelligence: what does it mean for our identity?

Our fascination with AI stems from the perceived uniqueness of human intelligence. We believe it’s what differentiates us. Fears of AI not only concern how it invades our digital lives, but also the implied threat of an intelligence that displaces us from our position at the centre of the world.

Atomism, proposed by Democritus, suggested it was impossible to continue dividing matter down into ever smaller components: eventually we reach a point where a cut cannot be made (the Greek for uncuttable is ‘atom’). In the same way, by slicing away at the facets of human intelligence that can be replaced by machines, AI uncovers what is left: an indivisible core that is the essence of humanity.

I’ll contrast our own (evolved, locked-in, embodied) intelligence with the capabilities of machine intelligence and speculate on what it means for our futures.

This talk is based on Neil’s book “The Atomic Human.”

Henry Ford’s Faster Horse

Figure: A 1925 Ford Model T built at Henry Ford’s Highland Park Plant in Dearborn, Michigan. This example now resides in Australia, owned by the founder of FordModelT.net. From https://commons.wikimedia.org/wiki/File:1925_Ford_Model_T_touring.jpg

It’s said that Henry Ford’s customers wanted “a faster horse.” If Henry Ford were selling us artificial intelligence today, what would the customer call for: “a smarter human”? That’s certainly the picture of machine intelligence we find in science fiction narratives, but the reality of what we’ve developed is much more mundane.

Car engines produce prodigious power from petrol. Machine intelligences deliver decisions derived from data. In both cases the scale of consumption enables a speed of operation that is far beyond the capabilities of their natural counterparts. Unfettered energy consumption has consequences in the form of climate change. Does unbridled data consumption also have consequences for us?

If we devolve decision making to machines, we depend on those machines to accommodate our needs. If we don’t understand how those machines operate, we lose control over our destiny. Our mistake has been to see machine intelligence as a reflection of our intelligence. We cannot understand the smarter human without understanding the human. To understand the machine, we need to better understand ourselves.

Artificial General Vehicle

Figure: The notion of artificial general intelligence is as absurd as the notion of an artificial general vehicle - no single vehicle is optimal for every journey. (Illustration by Dan Andrews inspired by a conversation about “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews, inspired by a conversation about “The Atomic Human” book. The drawing emerged from discussions with Dan about the flawed concept of artificial general intelligence and how it parallels the absurd idea of a single vehicle optimal for all journeys. The vehicle itself is inspired by shared memories of Professor Pat Pending in Hanna-Barbera’s Wacky Races.

I often turn up to talks with my Brompton bicycle. Embarrassingly, I even took it to Google, which is only a 30-second walk from King’s Cross station. That made me realise it’s become a sort of security blanket. I like having it because it’s such a flexible means of transport.

But is the Brompton an “artificial general vehicle”? A vehicle that can do everything? Unfortunately not: for example, it’s not very good for flying to the USA. There is no artificial general vehicle that is optimal for every journey. Similarly, there is no such thing as artificial general intelligence. The idea is artificial general nonsense.

That doesn’t mean there aren’t different principles of intelligence we can look at, just as there are principles that apply to vehicles. When designing vehicles we need to think about air resistance, friction and power. We have developed solutions such as wheels, different types of engines and wings that are deployed across different vehicles to achieve different results.

Intelligence is similar. The notion of artificial general intelligence is fundamentally eugenic. It builds on Spearman’s term “general intelligence”, which is part of a body of literature that was looking to assess intelligence in the way we assess height. The objective was then to breed greater intelligences (Lyons, 2022).

The Atomic Human

Figure: The Atomic Eye. By slicing away aspects of the human that we used to believe to be unique to us, but are now the preserve of the machine, we learn something about what it means to be human.

The development of what some are calling intelligence in machines raises questions about what machine intelligence means for our intelligence. The idea of the atomic human is derived from Democritus’s atomism.

In the fifth century BCE the Greek philosopher Democritus posed a question about our physical universe. He imagined cutting physical matter into pieces in a repeated process: cutting a piece, then taking one of the cut pieces and cutting it again so that each time it becomes smaller and smaller. Democritus believed this process had to stop somewhere, that we would be left with an indivisible piece. The Greek word for indivisible is atom, and so this theory was called atomism.

The Atomic Human considers the same question, but in a different domain, asking: As the machine slices away portions of human capabilities, are we left with a kernel of humanity, an indivisible piece that can no longer be divided into parts? Or does the human disappear altogether? If we are left with something, then that uncuttable piece, a form of atomic human, would tell us something about our human spirit.

See Lawrence (2024) atomic human, the p. 13.

Embodiment Factors: Walking vs Light Speed

Imagine human communication as moving at walking pace. The average person speaks about 160 words per minute, which is roughly 2000 bits per minute. If we compare this to walking speed, roughly 1 m/s, we can think of this as the speed at which our thoughts can be shared with others.

Compare this to machines. When computers communicate, their bandwidth is 600 billion bits per minute: three hundred million times faster than humans, a factor of \(3 \times 10^{8}\). That is roughly the ratio between walking pace and the speed of light. In twenty minutes we could be a kilometer down the road, whereas the computer can go to the Sun and back again.
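The arithmetic behind the walking versus light speed analogy can be checked with a short sketch. The bit rates are the figures quoted above; the walking speed, the rounded speed of light and the rounded Earth-Sun distance are assumptions added here for illustration, not values taken from the talk.

```python
# Figures quoted in the text above.
WORDS_PER_MIN = 160
HUMAN_BITS_PER_MIN = 2_000
COMPUTER_BITS_PER_MIN = 600e9

# Assumed constants, not taken from the talk.
WALKING_SPEED_M_S = 1.0        # walking pace used in the analogy
LIGHT_SPEED_M_S = 3.0e8        # speed of light, rounded
EARTH_SUN_DISTANCE_M = 1.5e11  # mean Earth-Sun distance, rounded


def distance_travelled_m(speed_m_s: float, minutes: float) -> float:
    """Distance covered at a constant speed over a number of minutes."""
    return speed_m_s * minutes * 60.0


# Bits carried by each spoken word, implied by the two quoted human rates.
bits_per_word = HUMAN_BITS_PER_MIN / WORDS_PER_MIN

# How many times faster the computer communicates than the human.
bandwidth_ratio = COMPUTER_BITS_PER_MIN / HUMAN_BITS_PER_MIN

# Twenty minutes at walking pace versus twenty minutes at light speed.
walker_m = distance_travelled_m(WALKING_SPEED_M_S, 20)
light_m = distance_travelled_m(LIGHT_SPEED_M_S, 20)
sun_round_trip_m = 2 * EARTH_SUN_DISTANCE_M

print(f"implied bits per word: {bits_per_word}")
print(f"bandwidth ratio: {bandwidth_ratio:.1e}")
print(f"walker after 20 min: {walker_m:.0f} m")
print(f"light after 20 min: {light_m:.1e} m")
print(f"Sun round trip: {sun_round_trip_m:.1e} m")
```

Under these assumptions the walker covers 1.2 km in twenty minutes, while light covers about \(3.6 \times 10^{11}\) m, a little more than the round trip to the Sun of about \(3.0 \times 10^{11}\) m.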

This difference is not only about speed of communication, but about embodiment. Our intelligence is locked in by our biology: our brains may process information rapidly, but our ability to share those thoughts is limited to the slow pace of speech or writing. Machines, in comparison, seem able to communicate their computations almost instantaneously, anywhere.

So, the embodiment factor is the ratio between the time it takes to think a thought and the time it takes to communicate it. For us, it’s like walking; for machines, it’s like moving at light speed. This difference means that most direct comparisons between human and machine need to be carefully made. For humans, it’s not the size of our communication bandwidth that counts, but how we overcome that limitation.

Figure: Conversation relies on internal models of other individuals.

Figure: Misunderstanding of context and who we are talking to leads to arguments.

Embodiment factors imply that, in our communication between humans, what is not said is, perhaps, more important than what is said. To communicate with each other we need to have a model of who each of us are.

To aid this, in society, we are required to perform roles, whether as a parent, a teacher, an employee or a boss. Each of these roles requires that we conform to certain standards of behaviour to facilitate communication between ourselves.

Control of self is vitally important to these communications.

The consequences of this mismatch between power and delivery are to be seen all around us. Just as driving an F1 car with bicycle wheels would be a fine art, so is the process of communication between humans.

If I have a thought and I wish to communicate it, I first need to have a model of what you think. I should think before I speak. When I speak, you may react. You have a model of who I am and what I was trying to say, and why I chose to say what I said. Now we begin this dance, where we are each trying to better understand each other and what we are saying. When it works, it is beautiful, but when mis-deployed, just like a badly driven F1 car, there is a horrible crash, an argument.

Figure: This is the drawing Dan was inspired to create for Chapter 1. It captures the fundamentally narcissistic nature of our (societal) obsession with our intelligence.

See blog post on Dan Andrews image of our reflective obsession with AI. See also (Vallor, 2024).

Figure: This is the drawing Dan was inspired to create for Chapter 2. It captures part of the narrative where human actors choose to follow the spirit of their instructions, and are empowered to do so because they have wider context. They can operate autonomously. Whereas machines have typically operated on data and statistics and can’t bring in context so normally operate as automatons.

See blog post on Dan Andrews image of narratives vs statistics.

New Flow of Information

Classically the field of statistics focused on mediating the relationship between the machine and the human. Our limited bandwidth of communication means we tend to over-interpret the limited information that we are given; in the extreme, we assign motives and desires to inanimate objects (a process known as anthropomorphizing). Much of mathematical statistics was developed to help temper this tendency and understand when we are valid in drawing conclusions from data.

Figure: The trinity of human, data, and computer highlights the modern phenomenon. The communication channel between computer and data now has an extremely high bandwidth. The channels between human and computer and between data and human are narrow. There is a new direction of information flow: information is reaching us mediated by the computer. The focus of classical statistics reflected the importance of the direct communication between human and data. The modern challenges of data science emerge when that relationship is being mediated by the machine.

Data science brings new challenges. In particular, there is a very large bandwidth connection between the machine and data. This means that our relationship with data is now commonly being mediated by the machine, whether in the acquisition of new data, which now happens by happenstance rather than with purpose, or in the interpretation of that data, where we are increasingly relying on machines to summarize what the data contains. This is leading to the emerging field of data science, which must not only deal with the same challenges that mathematical statistics faced in tempering our tendency to over-interpret data, but must also deal with the possibility that the machine has either inadvertently or maliciously misrepresented the underlying data.

See Lawrence (2024) topography, information p. 34-9, 43-8, 57, 62, 104, 115-16, 127, 140, 192, 196, 199, 291, 334, 354-5. See Lawrence (2024) anthropomorphization (‘anthrox’) p. 30-31, 90-91, 93-4, 100, 132, 148, 153, 163, 216-17, 239, 276, 326, 342.

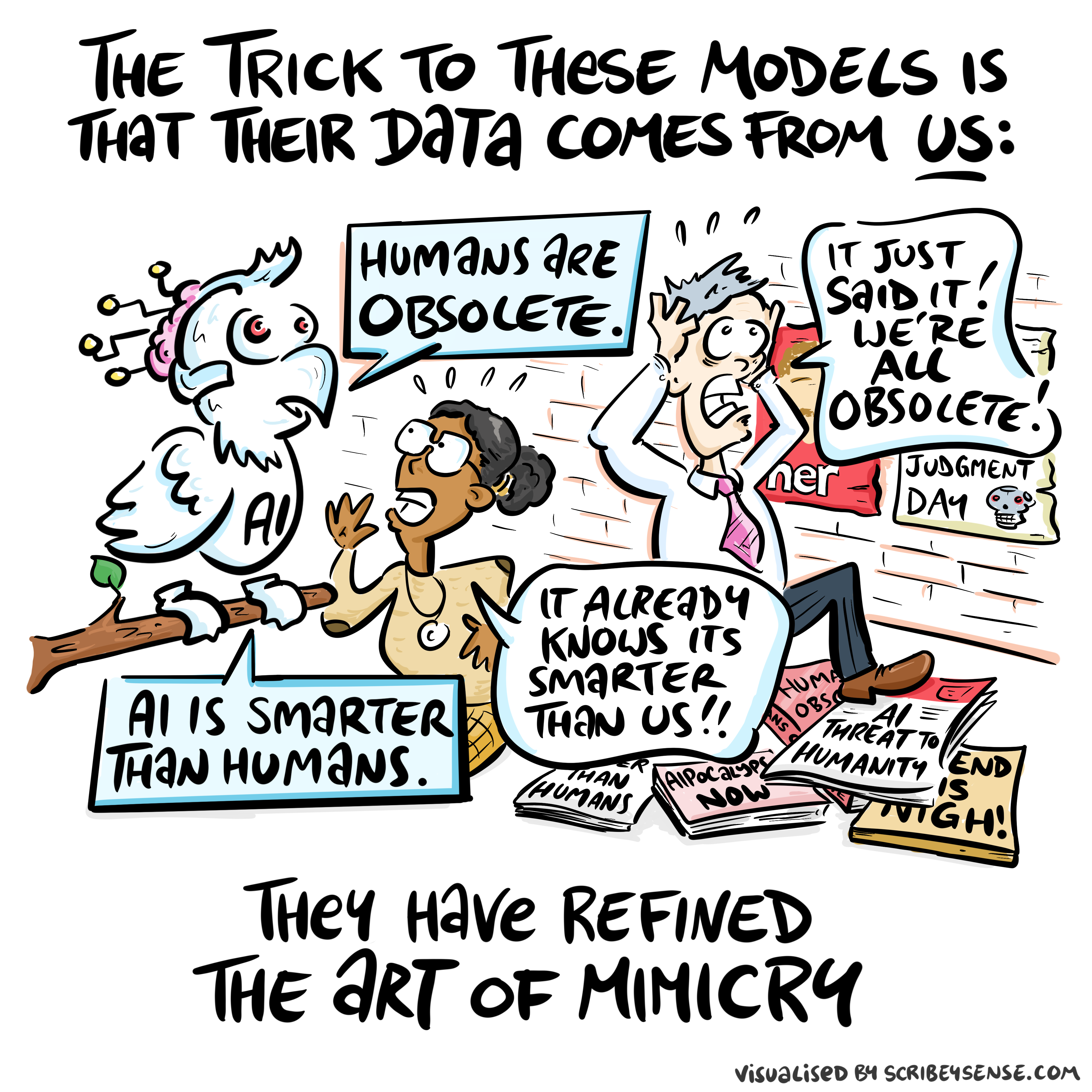

Their Data Comes from Us

Stochastic Parrots

Figure: The nature of digital communication has changed dramatically, with machines processing vast amounts of human-generated data and feeding it back to us in ways that can distort our information landscape. (Illustration by Dan Andrews inspired by Chapter 5 “Enlightenment” of “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews after reading Chapter 5 “Enlightenment” of “The Atomic Human” book. The chapter examines how machine learning models like GPTs ingest vast amounts of human-created data and then reflect modified versions back to us. The drawing celebrates the stochastic parrots paper by Bender et al. (2021) while also capturing how this feedback loop of information can distort our digital discourse.

See blog post on Two Types of Stochastic Parrots.

The stochastic parrots paper (Bender et al., 2021) was the moment that the research community, through a group of brave researchers, some of whom paid with their jobs, raised the first warnings about these technologies. Despite their bravery, at least in the UK, their voices and those of many other female researchers were erased from the public debate around AI.

Their voices were replaced by a different type of stochastic parrot, a group of “fleshy GPTs” that speak confidently and eloquently but have little experience of real life and make arguments that, for those with deeper knowledge, are flawed in naive and obvious ways.

The story is a depressing reflection of a similar pattern that undermined the UK computer industry (Hicks, 2018).

We all have a tendency to fall into the trap of becoming fleshy GPTs, and the best way to prevent that happening is to gather diverse voices around ourselves and take their perspectives seriously even when we might instinctively disagree.

Sunday Times article “Our lives may be enhanced by AI, but Big Tech just sees dollar signs”

Times article “Don’t expect AI to just fix everything, professor warns”

Blake’s Newton

William Blake’s rendering of Newton captures humans in a particular state. He is trance-like, absorbed in simple geometric shapes. The feel of dreams is enhanced by the underwater location, and the nature of the focus is enhanced because he ignores the complexity of the sea life around him.

Figure: William Blake’s Newton, 1795–c. 1805.

See Lawrence (2024) Blake, William Newton p. 121–123.

The caption in the Tate Britain reads:

Here, Blake satirises the 17th-century mathematician Isaac Newton. Portrayed as a muscular youth, Newton seems to be underwater, sitting on a rock covered with colourful coral and lichen. He crouches over a diagram, measuring it with a compass. Blake believed that Newton’s scientific approach to the world was too reductive. Here he implies Newton is so fixated on his calculations that he is blind to the world around him. This is one of only 12 large colour prints Blake made. He seems to have used an experimental hybrid of printing, drawing, and painting.

From the Tate Britain

See Lawrence (2024) Blake, William Newton p. 121–123, 258, 260, 283, 284, 301, 306.

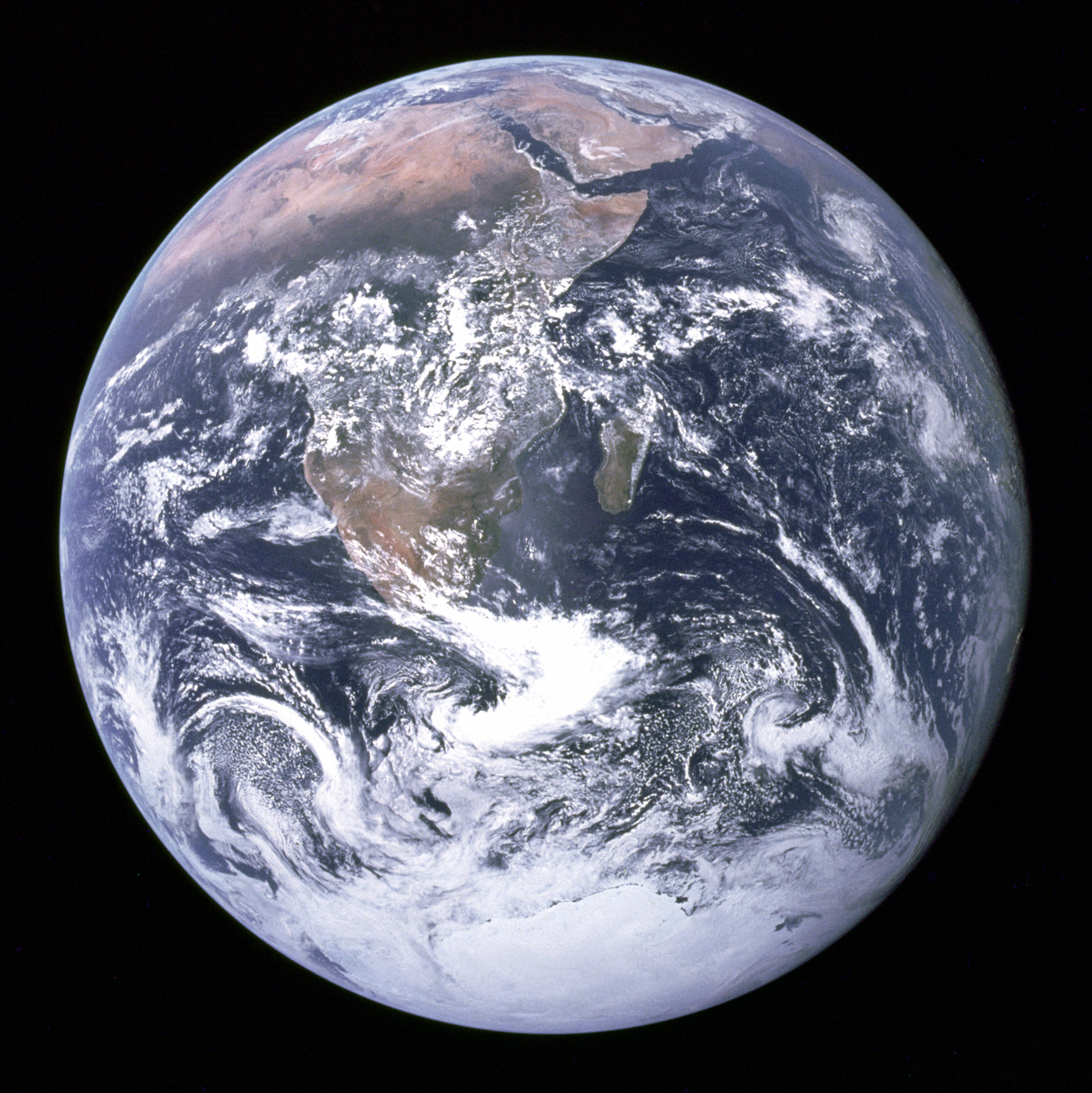

Sistine Chapel Ceiling

Shortly before I first moved to Cambridge, my girlfriend (now my wife) took me to the Sistine Chapel to show me the recently restored ceiling.

Figure: The ceiling of the Sistine Chapel.

When we got to Cambridge, we both attended Patrick Boyde’s talks on the chapel. He focussed both on the structure of the chapel ceiling, describing the impression of height it was intended to give, and on the significance and positioning of each of the panels and the meaning of the individual figures.

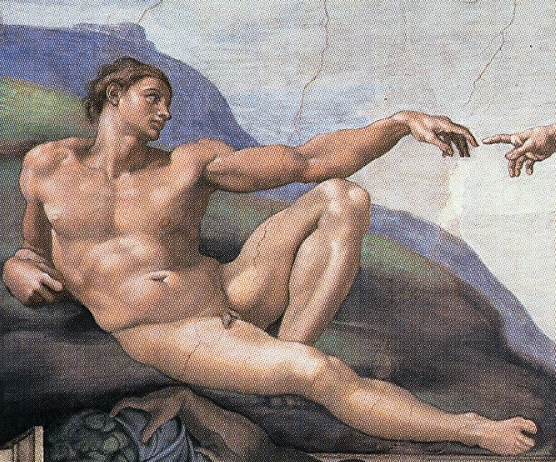

The Creation of Adam

Figure: Photo of Detail of Creation of Man from the Sistine chapel ceiling.

One of the most famous panels, central in the ceiling, is the creation of man. Here, God in the guise of a pink-robed bearded man reaches out to a languid Adam.

The representation of God in this form seems typical of the time, because elsewhere in the Vatican Museums there are similar representations.

Figure: Photo detail of God.

Photo from https://commons.wikimedia.org/wiki/File:Michelangelo,_Creation_of_Adam_04.jpg.

My colleague Beth Singler has written about how often this image of creation appears when we talk about AI (Singler, 2020).

See Lawrence (2024) Michelangelo, The Creation of Adam p. 7-9, 31, 91, 105–106, 121, 153, 206, 216, 350.

The way we represent this “other intelligence” in the figure of a Zeus-like bearded mind demonstrates our tendency to embody intelligences in forms that are familiar to us.

Lunette Rehoboam Abijah

Many of Blake’s works are inspired by engravings he saw of the Sistine chapel ceiling. The pose of Newton is taken from the Lunette depiction of Abijah, one of Michelangelo’s ancestors of Christ.

Figure: Lunette containing Rehoboam and Abijah.

Elohim Creating Adam

Blake’s vision of the creation of man, known as Elohim Creating Adam, is a strong contrast to Michelangelo’s. The faces of both God and Adam show deep anguish. The image is closer to representations of Prometheus receiving his punishment for sharing his knowledge of fire than to the languid ecstasy we see in Michelangelo’s representation.

Figure: William Blake’s Elohim Creating Adam.

The caption in the Tate reads:

Elohim is a Hebrew name for God. This picture illustrates the Book of Genesis: ‘And the Lord God formed man of the dust of the ground.’ Adam is shown growing out of the earth, a piece of which Elohim holds in his left hand.

For Blake the God of the Old Testament was a false god. He believed the Fall of Man took place not in the Garden of Eden, but at the time of creation shown here, when man was dragged from the spiritual realm and made material.

From the Tate Britain

Blake’s vision is demonstrating the frustrations we experience when the (complex) real world doesn’t manifest in the way we’d hoped.

See Lawrence (2024) Blake, William Elohim Creating Adam p. 121, 217–18.

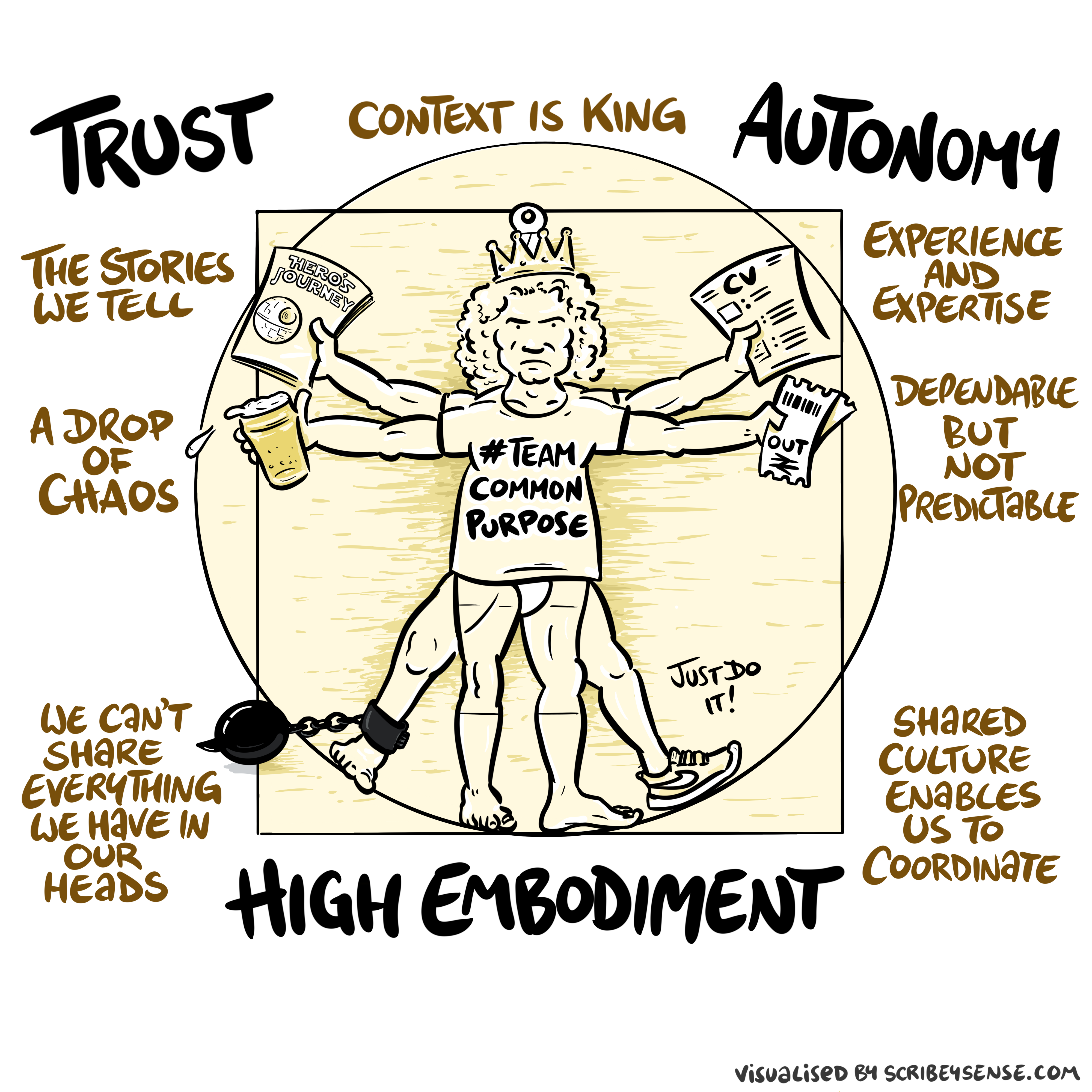

Trust, Autonomy and Embodiment

Figure: The relationships between trust, autonomy and embodiment are key to understanding how to properly deploy AI systems in a way that avoids digital autocracy. (Illustration by Dan Andrews inspired by Chapter 3 “Intent” of “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews after reading Chapter 3 “Intent” of “The Atomic Human” book. The chapter explores the concept of intent in AI systems and how trust, autonomy, and embodiment interact to shape our relationship with technology. Dan’s drawing captures these complex relationships and the balance needed for responsible AI deployment.

See blog post on Dan Andrews image from Chapter 3.

Trust is not a slogan; it is the infrastructure that allows autonomy to be devolved without losing control. Autonomy is always conditional: it depends on what information is available, what incentives shape behaviour, and whether escalation and accountability are real. In executive settings, the practical question is: where do we allow delegation (to people or machines), and where do we insist on human judgement and responsibility?

See Lawrence (2024) trust p. 43, 79, 100. See Lawrence (2024) embodiment factor p. 13, 29, 35, 79, 87, 105, 197, 216-217, 249, 269, 327, 353, 363, 369. See Lawrence (2024) topography, information p. 34-9, 43-8, 57, 62, 104, 115-16, 127, 140, 192, 196, 199, 291, 334, 354-5.

We communicate with each other through shared cultural reference points: stories, rituals, and artefacts. Great artworks are not just decoration: they are compressed cultural objects that can carry meaning across centuries.

The Sistine Chapel becomes a kind of public interface: a shared “model” that people can point at, interpret, dispute, and transmit. In that sense, the artwork itself is part of the communication system.

Figure: The creation of Adam and the Lunette of Abijah come together to influence Blake’s version of Newton, yet his view of creation is very different from Michelangelo’s.

What’s striking here is how influence works: a pose moves from a Michelangelo lunette into Blake’s Newton; a creation narrative is reinterpreted as anguish in Elohim Creating Adam. These are “links” in a human communication network.

Human communication is not only words passed between individuals. We also communicate through shared artefacts — images, stories, rituals, institutions — that act as a common reference frame. These artefacts stabilise meaning because they persist, they can be revisited, and they can be interpreted together.

This version of the “human culture interacting” diagram grounds the idea in a concrete chain: the Sistine Chapel as a shared cultural object; Michelangelo’s figures as a visual vocabulary; Blake’s Newton borrowing a pose from a lunette; and Blake’s Elohim Creating Adam reinterpreting the creation narrative. In other words: a human communication network made of artefacts.

Figure: Humans use culture, facts and artefacts to communicate.

A Six Word Novel

Figure: Consider the six-word novel, apocryphally credited to Ernest Hemingway, “For sale: baby shoes, never worn.” To understand what that means to a human, you need a great deal of additional context. Context that is not directly accessible to a machine that has not got both the evolved and contextual understanding of our own condition to realize both the implication of the advert and what that implication means emotionally to the previous owner.

See Lawrence (2024) baby shoes p. 368.

But machine intelligence is a very different kind of intelligence from ours. A computer cannot understand the depth of Ernest Hemingway’s apocryphal six-word novel, “For sale: baby shoes, never worn,” because it isn’t equipped with the ability to model the complexity of humanity that underlies that statement.

Figure: This is the drawing Dan was inspired to create for Chapter 5. An AI parrot repeats information about AI doom panicking humans.

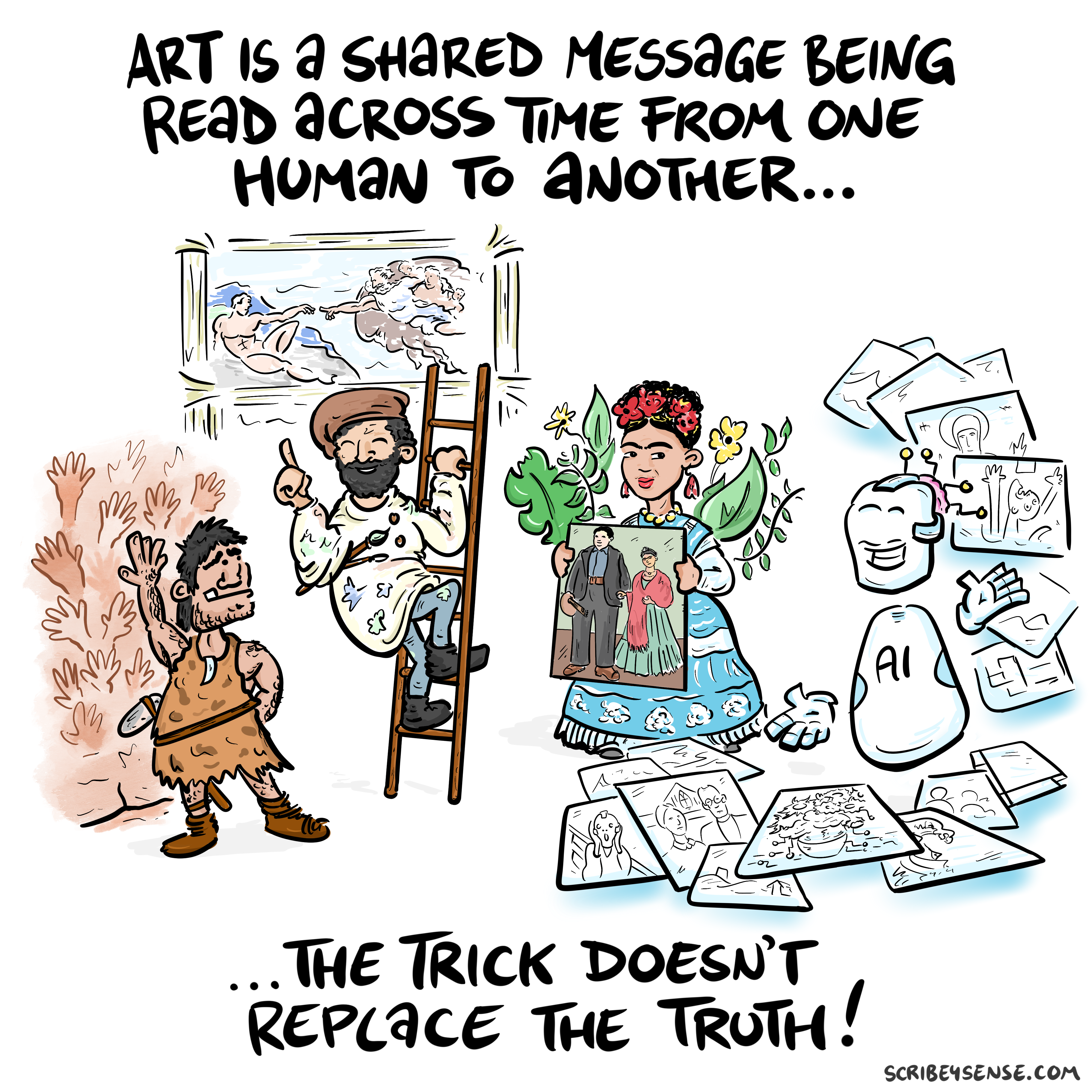

Figure: This is the drawing Dan was inspired to create for Chapter 4. It highlights how, even if these machines can generate creative works, their lack of origin in humans means they are not the same as works of art that come to us through history.

See blog post on Art is Human.

For the Working Group for the Royal Society report on Machine Learning, back in 2016, the group worked with Ipsos MORI to engage in public dialogue around the technology. Ever since, I’ve been struck by how much more sensible the conversations that emerge from public dialogue are than the breathless drivel that seems to emerge from supposedly more informed individuals.

There were a number of messages that emerged from those dialogues, and many of those messages were reinforced in two further public dialogues we organised in September.

However, there was one area we asked about in 2017 that we didn’t ask about in 2024. That was an area where the public were unequivocal that they didn’t want the research community to pursue progress. Quoting from the report (my emphasis):

Art: Participants failed to see the purpose of machine learning-written poetry. For all the other case studies, participants recognised that a machine might be able to do a better job than a human. However, they did not think this would be the case when creating art, as doing so was considered to be a fundamentally human activity that machines could only mimic at best.

Public Views of Machine Learning, April, 2017

How right they were.

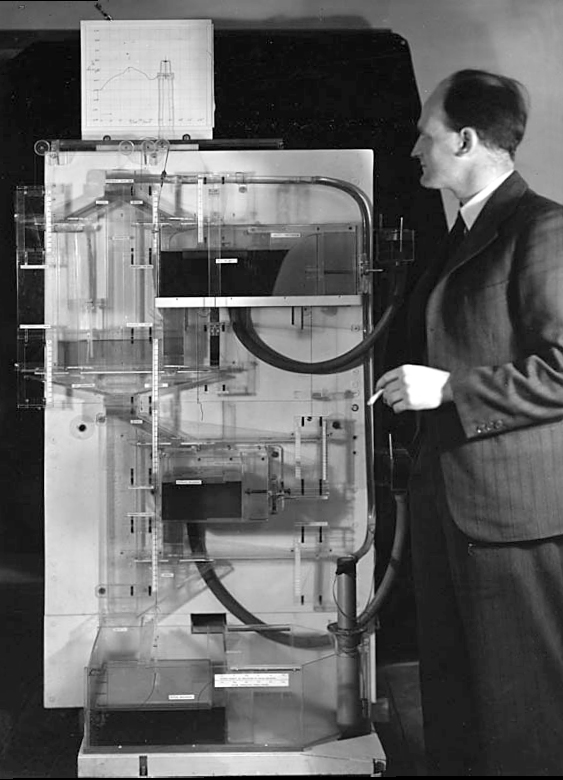

The MONIAC

The MONIAC was an analogue computer designed to simulate the UK economy. Analogue computers work through analogy; the analogy in the MONIAC is that both money and water flow. The MONIAC exploits this through a system of tanks, pipes, valves and floats that represent the flow of money through the UK economy. Water flowed from the treasury tank at the top of the model to other tanks representing government spending, such as health and education. The machine was initially designed for teaching support but was also found to be a useful economic simulator. Several were built, and today you can see the original at Leeds Business School; there is also one in the London Science Museum and one in the University of Cambridge’s economics faculty.

Figure: Bill Phillips and his MONIAC (completed in 1949). The machine is an analogue computer designed to simulate the workings of the UK economy.

See Lawrence (2024) MONIAC p. 232-233, 266, 343.

Human Analogue Machine

Recent breakthroughs in generative models, particularly large language models, have enabled machines that, for the first time, can converse plausibly with other humans.

The Apollo guidance computer provided Armstrong with an analogy when he landed the lunar module on the Moon. He controlled the craft through a stick, and it was the stick that provided the analogy. The analogy is grounded in the experience that Amelia Earhart had when she flew her plane. Armstrong’s control exploited his experience as a test pilot flying planes that had control columns directly connected to their control surfaces.

Figure: The human analogue machine is the new interface that large language models have enabled the human to present. It has the capabilities of the computer in terms of communication, but it appears to present a “human face” to the user in terms of its ability to communicate on our terms. (Image quite obviously not drawn by generative AI!)

The generative systems we have produced do not provide us with the “AI” of science fiction, because their intelligence is based on emulating human knowledge. Through being forced to reproduce our literature and our art they have developed aspects which are analogous to the cultural proxy truths we use to describe our world.

These machines are to humans what the MONIAC was to the British economy. Not a replacement, but an analogue computer that captures some aspects of humanity while providing the advantages of the machine’s high bandwidth.

See Lawrence (2024) ignorance: HAMs p. 347. See Lawrence (2024) test pilot p. 163-8, 189, 190, 192-3, 196, 197, 200, 211, 245.

HAM

The Human-Analogue Machine or HAM therefore provides a route through which we could better understand our world through improving the way we interact with machines.

Figure: The trinity of human, data, and computer highlights the modern phenomenon. The communication channel between computer and data now has an extremely high bandwidth. The channels between human and computer and between data and human are narrow. There is a new direction of information flow: information is reaching us mediated by the computer. The focus of classical statistics reflected the importance of the direct communication between human and data. The modern challenges of data science emerge when that relationship is being mediated by the machine.

The HAM can provide an interface between the digital computer and the human, allowing humans to work closely with computers regardless of their understanding of the more technical parts of software engineering.

Figure: The HAM now sits between us and the traditional digital computer.

Of course this route provides new routes for manipulation, new ways in which the machine can undermine our autonomy or exploit our cognitive foibles. The major challenge we face is steering between these worlds where we gain the advantage of the computer’s bandwidth without undermining our culture and individual autonomy.

See Lawrence (2024) human-analogue machine (HAMs) p. 343-347, 359-359, 365-368.

Bandwidth vs Complexity

The computer communicates in gigabits per second. One way of imagining just how much slower we are than the machine is to look for something that communicates in nanobits per second.

| | evolution (genetics) | human | computer |
| --- | --- | --- | --- |
| bits/min | \(100 \times 10^{-9}\) | \(2,000\) | \(600 \times 10^9\) |

Figure: When we look at communication rates based on the information passing from one human to another across generations through their genetics, we see that computers watching us communicate is roughly equivalent to us watching organisms evolve. Estimates of germline mutation rates taken from Scally (2016).
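The comparison in the caption can be made concrete by measuring each gap in orders of magnitude. The sketch below is illustrative only: the three rates are the bits/min figures from the table, while the labels and the helper `orders_of_magnitude` are names assumed here, with the "evolution" label inferred from the caption.

```python
import math

# Communication rates in bits per minute, as given in the table above.
# The dictionary keys are illustrative labels, not terms from the source.
RATES_BITS_PER_MIN = {
    "evolution": 100e-9,
    "human": 2_000,
    "computer": 600e9,
}


def orders_of_magnitude(slow: float, fast: float) -> float:
    """Base-10 logarithm of the ratio between a fast rate and a slow rate."""
    return math.log10(fast / slow)


# Gap between human speech and genetic inheritance.
human_vs_evolution = orders_of_magnitude(
    RATES_BITS_PER_MIN["evolution"], RATES_BITS_PER_MIN["human"]
)

# Gap between machine communication and human speech.
computer_vs_human = orders_of_magnitude(
    RATES_BITS_PER_MIN["human"], RATES_BITS_PER_MIN["computer"]
)

print(f"human vs evolution: {human_vs_evolution:.1f} orders of magnitude")
print(f"computer vs human: {computer_vs_human:.1f} orders of magnitude")
```

Both gaps are large, at between eight and eleven orders of magnitude, which is the sense in which the two comparisons are "roughly equivalent".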

Figure: Bandwidth vs Complexity.

The challenge we face is that while speed is on the side of the machine, complexity is on the side of our ecology. Many of the risks we face are associated with the way our speed undermines our ecology and the machine’s speed undermines our human culture.

See Lawrence (2024) Human evolution rates p. 98-99. See Lawrence (2024) Psychological representation of Ecologies p. 323-327.

Figure: This is the drawing Dan was inspired to create for Chapter 12. It captures the analogy in which the speed of information assimilation associated with machines is compared with the speed of assimilation associated with humans.

See blog post on the launch of Facebook’s AI lab.

Figure: This is the drawing Dan was inspired to create for Chapter 8. It captures the notion of System Zero. The idea of System Zero is that the machine’s access to information is so much greater than ours that it can manipulate us in ways that exploit our cognitive foibles, interacting directly with our reflexive selves and undermining our reflective selves.

See blog post on A Retrospective on System Zero.

System Zero is when the machine’s access to our information is so much greater than ours that it understands us in ways that we don’t understand each other.

Technical and Intellectual Debt

Technical debt is famous in software engineering. It’s where you deploy quickly and as a result you deploy a system that doesn’t have the robustness of engineering it needs for ongoing use. You therefore struggle to maintain your deployment.

Intellectual debt is when you struggle to explain what your system is doing. It may be working from an engineering perspective, but as you combine more and more components in your software system you struggle to explain how they’re interacting.

Agentic Debt

Agentic AI could pay down the technical debt and intellectual debt that plague our deployment of complex systems. But in doing so it could create a new form of debt: agentic debt.

Agentic debt is the “new debt” introduced by systems that can act: the accrued risk and cost of operating delegated workflows without crisp boundaries. Unlike technical debt, which emerges from, for example, engineering shortcuts, and intellectual debt, which emerges from (well engineered) complex systems, agentic debt is about unsafe or illegible delegation. Who (or what) can cause what action, on what evidence, with what recovery path?
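One way to make those four questions concrete is to record each delegated workflow explicitly and flag any question left unanswered. This is a minimal sketch under stated assumptions, not a method from the talk or the book: the `Delegation` class, the `missing_boundaries` helper and the example values are all hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record of one delegated workflow."""

    actor: str          # who (or what) can act
    action: str         # what action they can cause
    evidence: str       # what evidence the action must rest on
    recovery_path: str  # how the action is undone or escalated


def missing_boundaries(delegation: Delegation) -> list[str]:
    """Names of the questions this delegation leaves unanswered."""
    return [
        field.name
        for field in fields(delegation)
        if not getattr(delegation, field.name).strip()
    ]


# Illustrative example: an agent allowed to act with no stated evidence
# requirement and no recovery path is a candidate source of agentic debt.
refund = Delegation(
    actor="billing-agent",
    action="issue refund",
    evidence="",
    recovery_path="",
)
print(missing_boundaries(refund))
```

An empty result would indicate that all four boundaries have at least been written down; it says nothing about whether the answers are adequate, which remains a matter of human judgement.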

Figure: This is the drawing Dan was inspired to create for Chapter 11. It captures the core idea of our tendency to devolve complex decisions to what we perceive as greater authorities, but which are in reality ill-equipped to deliver a human response.

See blog post on Playing in People’s Backyards.

In the past when decisions became too difficult, we invoked higher powers in the forms of gods, and “trial by ordeal.” Today we face a similar challenge with AI. When a decision becomes difficult there is a danger that we hand it to the machine, but it is precisely these difficult decisions that need to contain a human element.

Wicked Problems

Figure: Society faces many wicked problems in health, education, security, and social care that require carefully deploying AI toward meaningful societal challenges rather than focusing on commercially appealing applications. (Illustration by Dan Andrews inspired by the Epilogue of “The Atomic Human” Lawrence (2024))

This illustration was created by Dan Andrews after reading the Epilogue of “The Atomic Human” book. The Epilogue discusses how we might deploy AI to address society’s most pressing challenges, and Dan’s drawing captures the various wicked problems we face and some of the initiatives that are looking to address them.

See blog post on Who is Stepping Up?

Thanks!

For more information on these subjects and more you might want to check the following resources.

- company: Trent AI

- book: The Atomic Human

- twitter: @lawrennd

- podcast: The Talking Machines

- newspaper: Guardian Profile Page

- blog: http://inverseprobability.com